Before working at Zapier, I didn't have first-hand experience with UX (user experience) research. The design decisions I saw were largely based on instinct or a few Google Analytics reports, which put decision-making in a black box for me. I never really understood the "why" behind design decisions.

But a simple idea completely changed how I thought of research: Research is a team sport. It’s the idea that you bring everyone along on an all-hands research extravaganza. Everyone helps, everyone gains empathy, and everyone knows why a project takes a certain path.

Here’s how our team makes it easy for everyone to participate in UX research efforts. Bonus: We've provided templates and other resources at the end of this article so you can apply these methods yourself and better understand how your users experience your product.

Join our Design Feedback Group to try new and upcoming features on Zapier before anyone else and help guide the future of our products to make work easier for everyone.

Form Questions and Methods

When we kick off a research project, our team comes up with a list of the right questions to ask, which is one of the hardest parts of research. Rather than focus on the final questions you’ll ask, first think about what your team doesn’t know: Have group discussions, go through thought exercises (start with Questions and Assumptions), and try to uncover any knowledge gaps your team has. When you have a healthy list of unknowns, you’ll be in better shape to frame your final questions.

In addition to knowledge gaps, you also have anecdotal beliefs about what a customer will do with your feature or how they’ll think about it. We call these assumptions. These assumptions are likely already phrased in an observable way (e.g., "new users will think this is intuitive"); if they aren't, convert them so they are.

Build an Interview Script

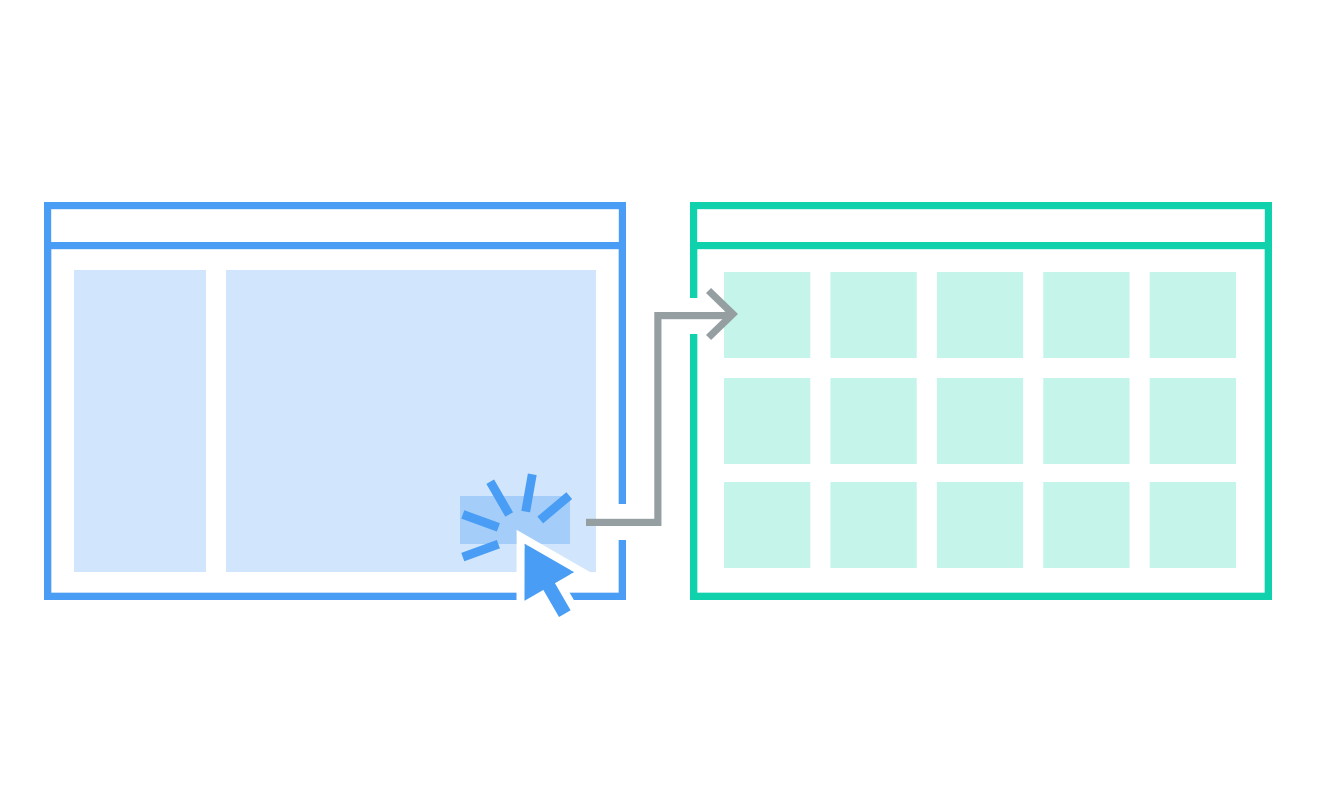

To research how a new feature might work, we need to observe how customers interact with that feature. These observations can be difficult if the feature doesn’t exist yet, which is often the case. So to get our insights before engineering starts building, we put together a script and click-through prototype to take different types of customers through a flow and watch what they do.

Using this script and prototype approach works well on a remote team. We hop on a video call with a participant, send them a link to the prototype, have them share their screen, guide them with our script, and make our observations.

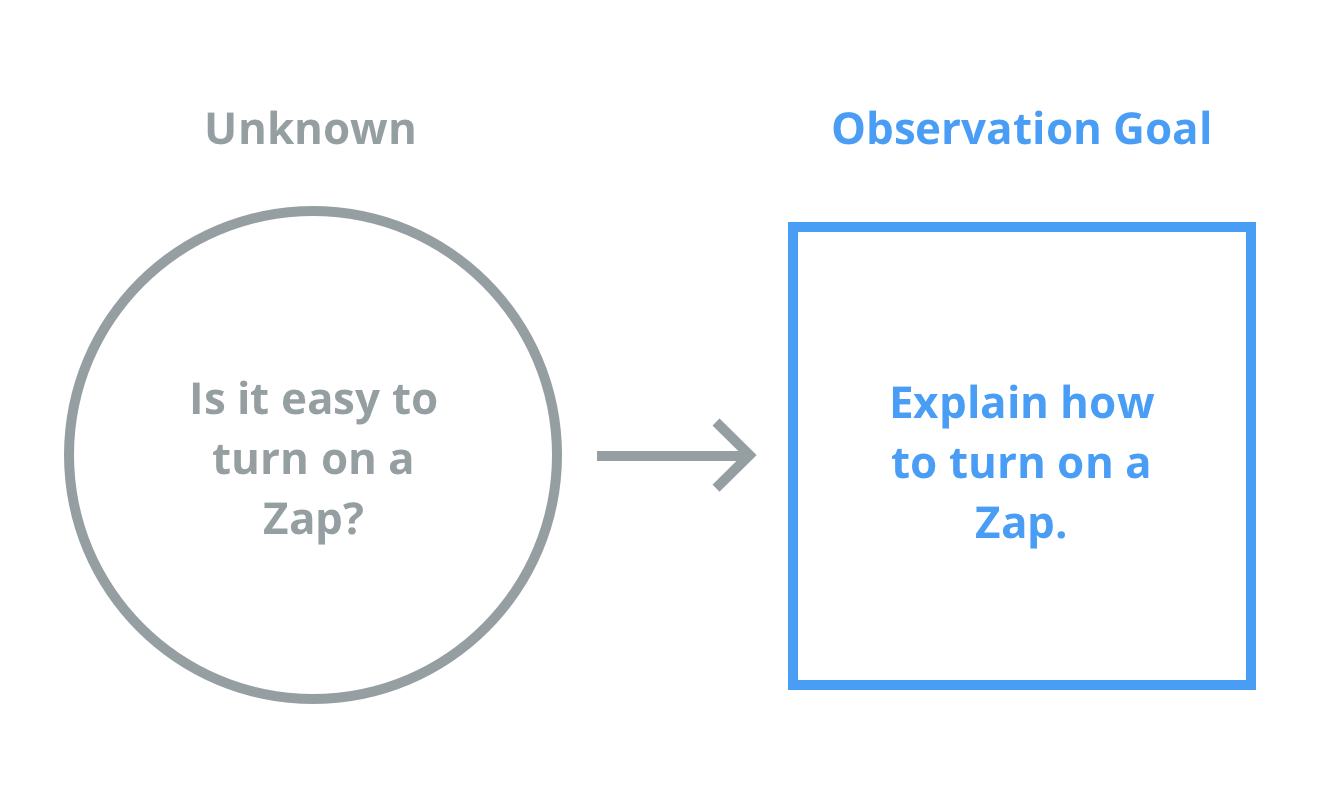

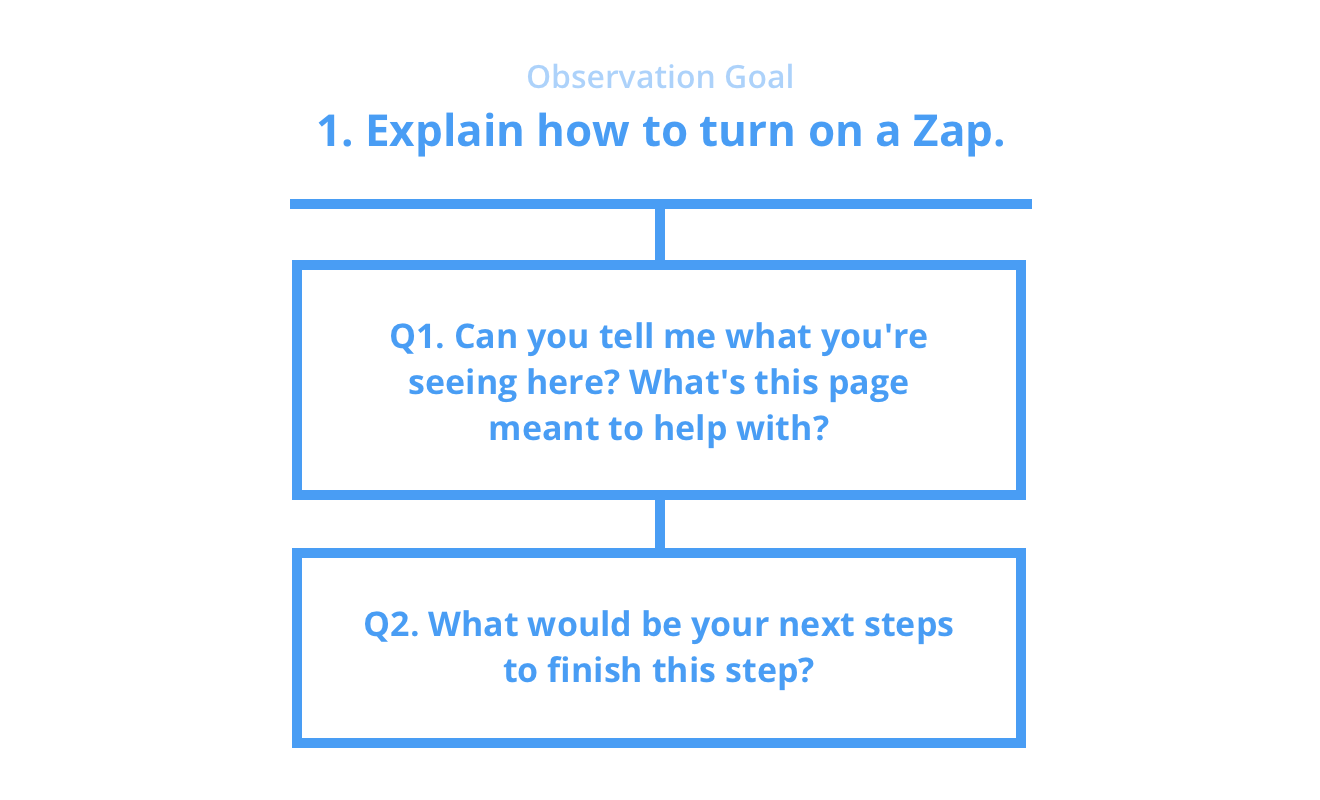

A good script starts with a list of observation goals. You’ll build this list by converting your unknowns, questions, and assumptions from earlier into actionable statements. These goals guide the prototype design and help you put together non-leading questions around each statement so your script feels like a conversation.

Observation goals must be observable—you have to observe the customer providing either an invalidating or validating response, or completing a task. To get participants to do this, you need to form questions around each observation goal. These questions are meant to put the customer in a situation similar to what they’d experience in the wild. To show how this might work, let’s continue with the example from above.

Notice that we don’t outright ask if it’s easy to turn on a Zap (our word for an automated workflow). More often than not, participants will answer yes to a question like this so they don't hurt your feelings, or as a default if they’re uncertain. Leading questions get you the answer you’re looking for (aka "confirmation bias"), and that’s not the goal of research. Instead, you want to observe customer behavior that's as close as possible to what it would be in an everyday situation.

At Zapier, our team uses a script template to create a rhythm throughout our interview. The template handles things like intros, outros, and handy interview tips. We paste our observation goals into the template and use it to guide our discussions. After a few sessions, you likely won't even need the script as you could probably recite it in your sleep. (Template available at the end of this post.)

Design the Prototype

Now that your team has observation goals and questions to gather unbiased observations, it’s a good time to jump into design. Chances are your team has already been noodling around with ideas. Take those ideas and put them into a realistic scenario. Consider the observation goals in your script and make the flow feel as natural as possible—something the user would normally experience within your product.

You may need to give more or less context to your script and prototype. Along with the goals you’re trying to observe, you may have to include backstory to make your feature feel more natural.

Take our example: Since we want to see if it’s easy to turn on a Zap, it might be nice to give participants more context to get them to that point. To make this observation goal feel realistic, we can have them create the Zap before turning it on. In the end, though, you and your team will know what’s right for your project.

Recruit for UX Testing

Armed with a script and prototype, it’s time to go out and observe! But there's a new question: Who will you observe? Recruiting for tests is an article in and of itself, so I’ll keep it brief. Before your team reaches out to possible participants, make sure you understand the types of people you want to talk to. For example, you might want people who aren't tech-savvy or who work only in marketing. It all depends on who your team’s target market is for the feature you’re working on. When you know the traits of the people you’d like to talk to, it’s time to send out a screener survey.

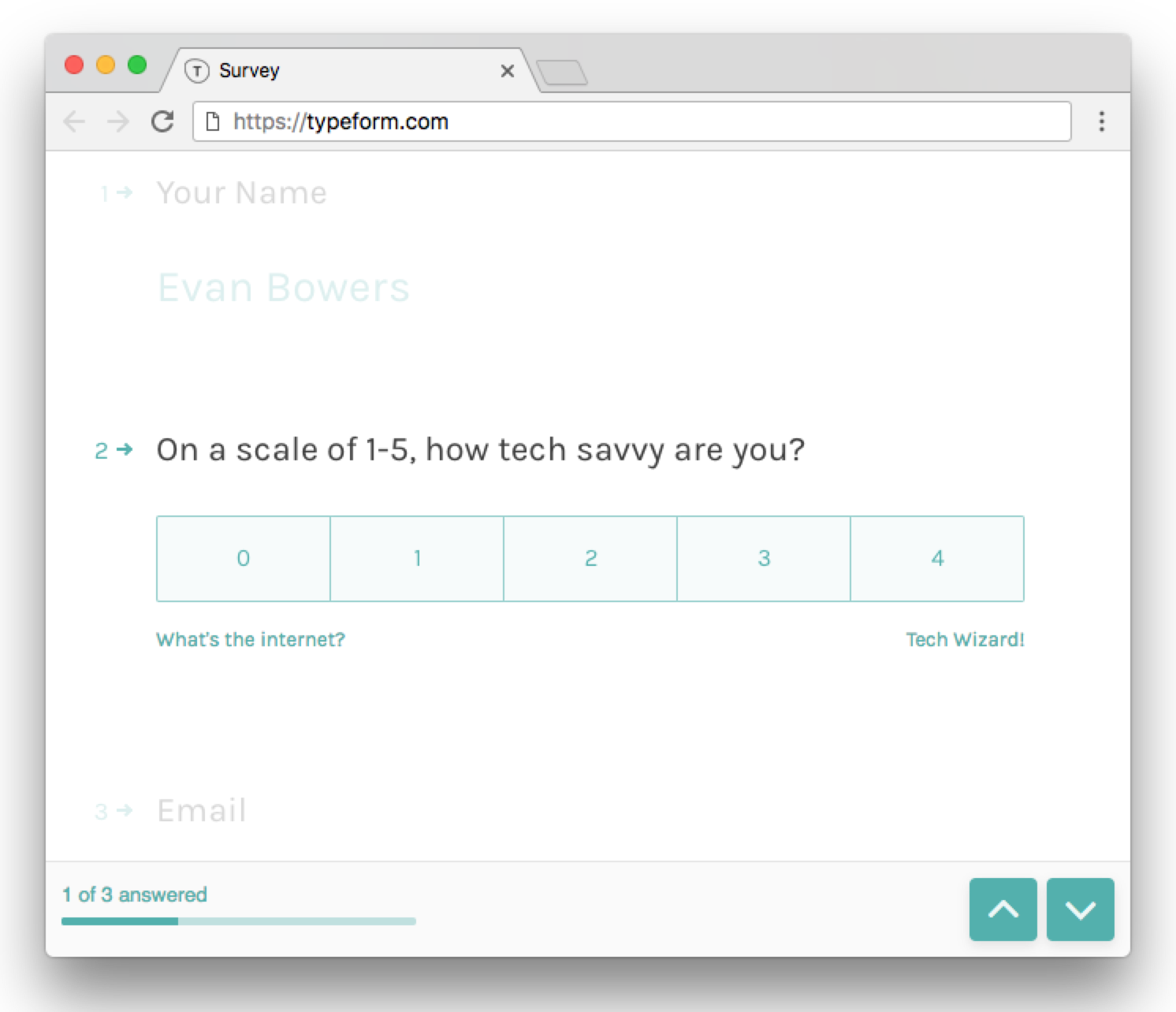

A screener survey helps you find the right people with the right traits. You craft a series of questions with a "correct" answer based on what you’re looking for. To find people who aren't tech-savvy, you might ask, "On a scale of 1-5, how tech-savvy are you?" That way, you can recruit anyone who answers 2 or lower. When crafting survey questions, keep them broad so you’re not hinting at the preferred responses.
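As a rough illustration of the screening step, here is a minimal Python sketch. It assumes the survey responses have been exported as a list of records; the field names (`email`, `tech_savvy`), the `shortlist` helper, and the `max_score` parameter are all hypothetical and not part of any Zapier template.

```python
from dataclasses import dataclass


@dataclass
class ScreenerResponse:
    """One survey response. Field names are hypothetical."""
    email: str
    tech_savvy: int  # self-rated on a 1-5 scale


def shortlist(responses, max_score=2):
    """Return respondents whose self-rating is between 1 and max_score."""
    return [r for r in responses if 1 <= r.tech_savvy <= max_score]


# Placeholder data for illustration only.
responses = [
    ScreenerResponse("ana@example.com", 1),
    ScreenerResponse("ben@example.com", 4),
    ScreenerResponse("cy@example.com", 2),
]

for person in shortlist(responses):
    print(person.email)
```

In practice you would likely combine several traits (role, experience with the product, and so on) instead of a single score, but the idea is the same: decide the "correct" answers up front, then filter.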

When you have a number of responses, your team can schedule sessions with participants. As for how many people you should schedule, there are plenty of opinions out there on sample size for qualitative research. We tend to notice patterns once we've talked to 10 to 12 participants.

Observe and Revise

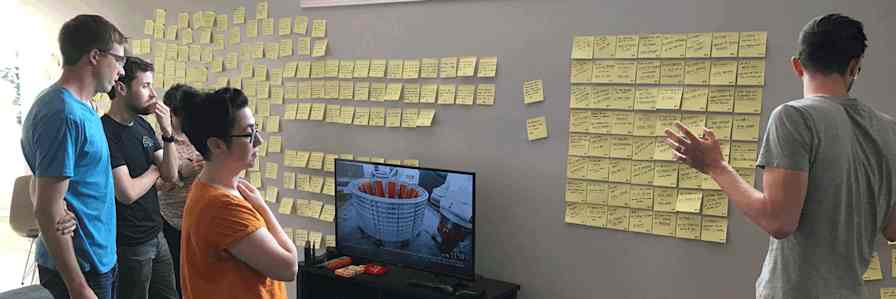

It’s observation time—gather the team, start a pot of coffee, and put on your empathy hat! We encourage our entire cross-functional team (product management, design, engineering, research, marketing, and support) to join. The observation phase gives our team first-hand knowledge of how our customers behave. As a group, we witness why customers do certain things and watch them run into roadblocks. Seeing this as a group will align your thinking quickly so everyone has a better idea of glaring issues that need to be fixed.

Before the session starts, you’ll want to figure out who’s going to facilitate, who will take notes, and who will listen and watch. Folks listening and watching provide commentary in Slack as the session takes place.

Since these sessions take place remotely, we use Zoom to host video calls with participants. Each call is scheduled beforehand with Calendly. We built a Zap that, about an hour before the meeting, emails the participant a reminder that the session is coming up, along with a link to join the Zoom call. When the session starts, the participant joins the call and talks exclusively with the facilitator.

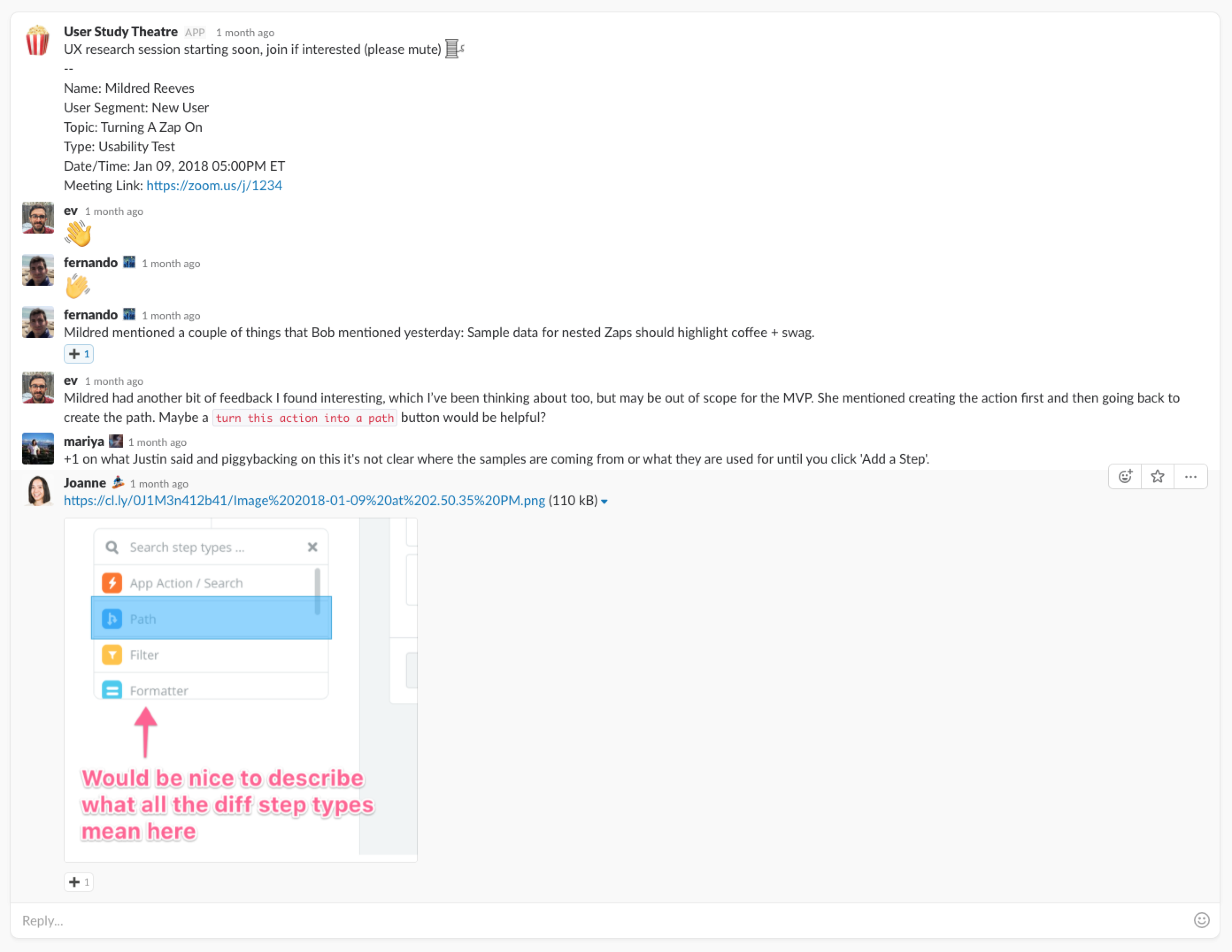

At the same time, we have a Zap spin up a Slack thread for the session, letting members of our cross-functional team (and anyone in the company) know when it starts. When others on the team join to observe, they keep their mics muted and cameras off to avoid disrupting the session. This Slack thread is what we call our "Slackroom" (borrowed/modified from Airbnb to fit our remote work setting). In the Slackroom, we talk about pain points as they happen in the session. We also use it to discuss recurring issues that participants run into and to share sketches and ideas that might help.

The pain points that participants experience, along with the conversation in our Slackroom, provide great prompts that inspire divergent design thinking. This part of the process generates a lot of ideas—which comes in handy when your team gets into final revisions.

Take Notes

For note-taking, we use Google Sheets (template included below). We formatted the spreadsheet so we can quickly record which goal the note relates to, the user's emotion at the time of the note, and which teammate manages the note in case we need to follow up. There's also a column for individual researcher notes. We gather early findings in the researcher note column before we do our formal synthesis. From that column, we create an early findings report for folks who couldn’t attend so they get a feel for things before the full report is released to the entire company.
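To make the sheet's structure more concrete, here is a hypothetical Python sketch of one note row and of how the researcher note column could be collected into an early findings list. The column names, the emotion labels, the teammate names, and the `early_findings` helper are assumptions for illustration; the actual template may be laid out differently.

```python
from dataclasses import dataclass


@dataclass
class SessionNote:
    """One row of the note-taking sheet. Column names are assumed."""
    goal: str                  # observation goal the note relates to
    emotion: str               # user's emotion at the time, e.g. "confused"
    owner: str                 # teammate who manages the note for follow-up
    note: str                  # what was observed
    researcher_note: str = ""  # early finding, if any


def early_findings(notes):
    """Collect non-empty researcher notes, grouped by observation goal."""
    findings = {}
    for n in notes:
        text = n.researcher_note.strip()
        if text:
            findings.setdefault(n.goal, []).append(text)
    return findings


# Placeholder rows for illustration only.
notes = [
    SessionNote("Turn on a Zap", "confused", "Dana",
                "Scrolled past the ON switch twice",
                "Switch location may be hard to find"),
    SessionNote("Turn on a Zap", "happy", "Lee",
                "Found the switch right away"),
]

for goal, items in early_findings(notes).items():
    print(goal)
    for item in items:
        print(" -", item)
```

Keeping the goal, emotion, and owner as separate fields is what makes the later grouping cheap: the early findings report is just a filtered view of rows the team already wrote during the sessions.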

Synthesize

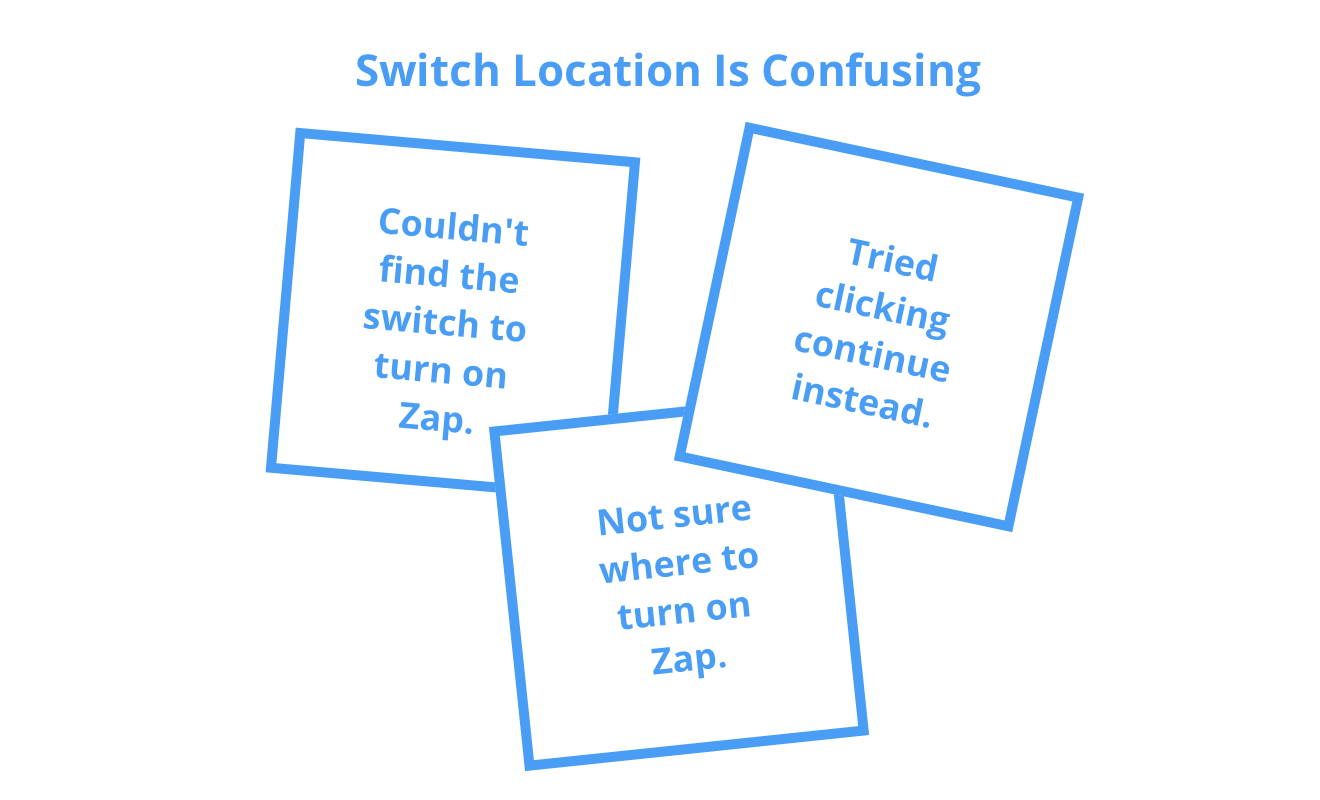

After all the sessions are complete, a couple of folks from the team (typically a researcher and a designer) will pull our notes into Mural, a collaborative online whiteboard, and do an epic affinity diagramming exercise asynchronously. The goal of this exercise is to group similar notes into clusters and give each cluster a theme name. For example, maybe there are a lot of notes related to pain points about the location of the Zap's ON switch. We'd put these together and name the cluster something like "Switch Location Is Confusing".

At the end of this exercise, you’ll walk away with a handful of theme clusters (we often end up with 10 clusters or fewer). There are situations where grouping similar clusters makes sense as well; you’d follow the same process and give that new group a theme name. There are also situations where you’ll have outlier notes. That’s perfectly normal. We even have a whiteboard section called the "parking lot" where we place these notes.

These theme clusters will be the takeaways from your research efforts. You’ll use these to put together a report of findings for your company and make adjustments to your new feature. We don’t wait until this point to make design changes, though. By doing everything together, our process becomes less of a waterfall (research > design > engineering > marketing) and more parallel (building features side by side).

Research Together

This process has been immensely helpful and approachable for all members of our team: Everyone contributes and knows why projects go in a specific direction.

Remember, this is just one way to approach research, and it may not fit your situation. One process rarely fits all problems, so be flexible, mix and match methods, or make up your own.

Happy researching!
