Before letters and squiggly lines started showing up in math class, I was a mental math whiz. Then algebra arrived and demanded receipts. Turns out showing your work is a better way to learn than transforming into that math lady gif. The same is true for how we teach and use large language models (LLMs) and generative AI.

When you ask an LLM a question, you might get a great answer...or an answer that sounds great while quietly inventing a few key details (especially when math, logic, or real-world constraints are involved). But providing examples, requesting a step-by-step breakdown, and encouraging a logical thought process through chain-of-thought (CoT) prompting can help the AI reason through your question and provide a more thoughtful, accurate output.

This guide is all about chain-of-thought prompting—what it is, when to use it, and how to use it effectively. You'll get practical prompt patterns, examples you can steal, and a few guardrails for keeping your AI model's reasoning inspectable.


What is chain-of-thought prompting?

Chain-of-thought prompting is a prompt engineering technique that requires generative AI models to explain their rationale for a response through a step-by-step breakdown. So instead of spitting out a quick answer without justification, the AI has to slow down, reason its way through a response, and then show its work.

That doesn't mean you need (or want) a sprawling, unfiltered internal monologue. The goal is to end up with an output you can audit. Think:

Key assumptions (what the model is taking for granted)

Step-by-step approach at a human-readable level

Intermediate checkpoints (quick sanity checks or mini-calculations)

A short rationale for the final answer

While it's not necessary for every query—no one needs a philosophical dissertation to answer "what time is it in Tokyo?"—CoT prompting can result in more effective AI prompts that make it easier to verify the thought process behind the logic and increase the likelihood of an accurate response.

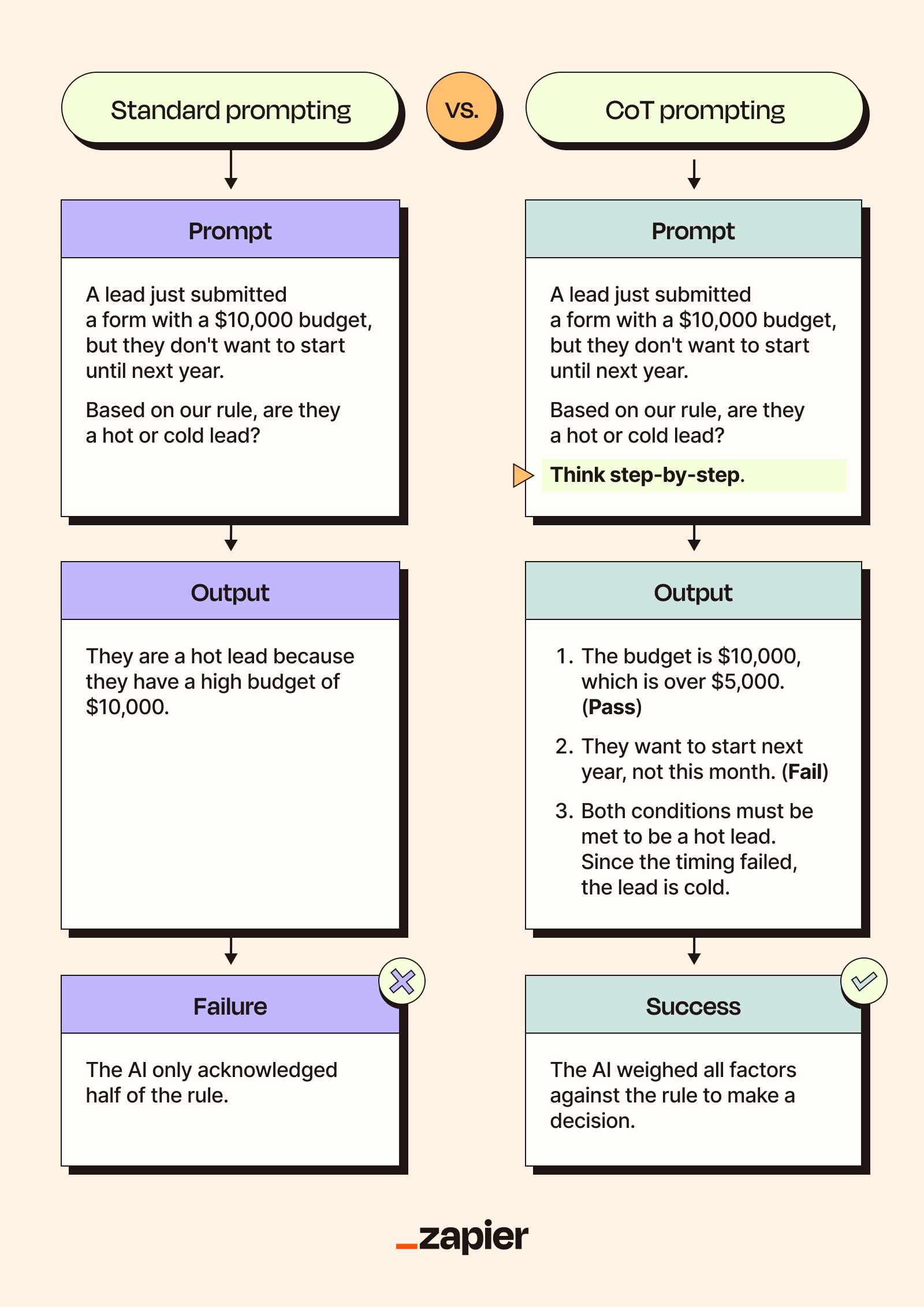

Standard prompting vs. CoT

Standard prompting is a straightforward approach intended to produce a direct response, with minimal structure or guardrails. This is typically a simple prompt or question, like "How do I build a content calendar?"

The problem is that a clean, confident answer can still be wrong, and some models will double down when challenged—not only wrong, but wrong again, now with added conviction. If you've seen any videos of people asking AI how many vowels are in a given word, you know exactly what I'm talking about.

CoT prompting encourages the model to specify how it reached its conclusion, forcing it to expose the process and the logic behind its output rather than simply producing an immediate response and calling it a day. This can improve accuracy, not because the model has suddenly discovered humility, but because it must justify its reasoning step-by-step instead of sprinting to the first plausible-sounding endpoint.

Standard prompting is perfectly fine for tasks that are simple, low-stakes, or purely generative (brainstorming headlines, drafting a rough outline, defining a term). But if you're dealing with a task that has multiple constraints, calculations, edge cases, or real-world consequences (debugging code, allocating budgets, writing for a specific audience with hard requirements), a CoT prompt is often worth the extra tokens.

Example 1: Debugging code

Standard prompt: Is this Python code thread-safe? [Insert Code Block]

Without set parameters, the AI may not have the necessary context for potential issues. And if it discovers something that might not be thread-safe, there's a chance it will only provide a "yes" or "no" without further elaboration, like the world's worst Ouija board.

To use CoT prompting for this task, keep the original prompt and add a zero-shot instruction that spells out the checks you want the model to reason through, like:

Identify any shared variables and non-thread-safe libraries, determine the scope of those variables, and conclude whether a race condition is possible. Explain your thought process at each step.
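If you run this kind of review often, it can help to template the prompt. The sketch below is one way to do it in Python; the function name, the step wording, and the sample snippet are all illustrative assumptions, not a prescribed format.

```python
from textwrap import dedent

# Hypothetical snippet under review: an unguarded read-modify-write on shared state.
SNIPPET = dedent("""
    counter = 0

    def increment():
        global counter
        counter += 1
""").strip()


def build_thread_safety_prompt(code: str) -> str:
    """Wrap a code snippet in the standard question plus explicit reasoning steps."""
    steps = [
        "List any shared mutable state and any libraries that are not thread-safe.",
        "Determine the scope of each shared variable (local, instance, module, global).",
        "Conclude whether a race condition is possible, and explain why.",
    ]
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        "Is this Python code thread-safe?\n\n"
        f"{code}\n\n"
        "Work through these steps in order and show your reasoning for each:\n"
        f"{numbered}"
    )


prompt = build_thread_safety_prompt(SNIPPET)
print(prompt)
```

Numbering the checks makes it easier to see which step a weak answer skipped.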

Example 2: Multi-step budget math

Standard prompt: Our budget for this year was $50,000. We spent $12k on ads, $5k on a contractor, and then spent 10% of the remaining balance on office supplies. How much is left?

Without enough context or instruction, the model might calculate 10% of the original budget, or of the amounts already spent, instead of the remaining balance. But if you start with a similar example that includes reasoning and a correct answer, you dramatically increase the odds that it will follow the intended logic rather than free-associating toward an answer that looks plausible.

So in this case, you might precede the previous query with:

Example Question: A project has a budget of $1,000. We spend $200 on software and $300 on equipment. If we spend 20% of the remaining balance on a team lunch, how much is left?

Reasoning: 1. Subtract the fixed costs from the total ($1,000 - $200 - $300 = $500). 2. Find 20% of the remaining $500 ($500 × 0.20 = $100). 3. Subtract the lunch cost from the previous balance ($500 - $100 = $400).

Answer: $400
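To check the model's answer to the original question, you can run the same three steps yourself. This is a minimal sketch in Python; the function name is hypothetical, and it assumes whole-dollar amounts so integer arithmetic is enough.

```python
def remaining_after_spend(budget: int, fixed_costs: list[int], percent_of_remainder: int) -> int:
    """Subtract fixed costs, then spend a percentage of what is left."""
    after_fixed = budget - sum(fixed_costs)                    # step 1: subtract fixed costs
    percent_cost = after_fixed * percent_of_remainder // 100   # step 2: percentage of the remainder
    return after_fixed - percent_cost                          # step 3: subtract that cost


# The worked example from the few-shot prompt.
example_left = remaining_after_spend(1_000, [200, 300], 20)
print(example_left)  # 400

# The original question: $50,000 budget, $12k ads, $5k contractor, 10% of the rest on supplies.
budget_left = remaining_after_spend(50_000, [12_000, 5_000], 10)
print(budget_left)  # 29700
```

So the answer to look for is $29,700: $33,000 remains after the fixed costs, and 10% of that ($3,300) goes to office supplies.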

Important caveat: Chain-of-thought prompting doesn't guarantee correctness. Models can still make mistakes, and they can still rationalize a bad answer.

How does chain-of-thought prompting work?

Chain-of-thought prompting effectively changes how AI models process information. A standard prompt asks the model to produce an immediate answer. A chain-of-thought prompt, meanwhile, supplies a framework that mimics human thinking—it nudges the model to work through the problem in a more structured way and to explain the rationale behind what it's saying.

When you ask a student—or an LLM—to show their work, you're not only checking the final answer; you're checking the method. And once the method is clear, it's easier to repeat the process later rather than treating every problem as a fresh roll of the dice.

Types of CoT

The type of CoT prompting you use can affect the quality, repeatability, and accuracy of the results you get. As with all prompt engineering, remember that your input can have a major impact on the output, so try out different approaches to get the best results.

Zero-shot chain-of-thought

With a zero-shot CoT prompt, you nudge the model to reason in a structured way. It's the quickest way to improve responses for multi-step questions, but it's also the easiest to get vague, overconfident "reasoning" back if you don't add guardrails.

All you need to do is add a phrase to the end of your query, something like:

"Let's think it through step-by-step."

"Show me your thought process."

"Solve this logically."

If you keep it too open-ended, error rates can go up because the model may invent steps that sound logical. The upside is you can usually spot the weak link (a bad assumption, a missing constraint, a broken check) and fix it.

Example prompt: My flight leaves at 9:15 a.m. The airport is 30-45 minutes from my home.

What time do I need to leave to make sure I have enough time to check my bags and grab a coffee before boarding? Think it through step-by-step.

Why it works: Instructing the model to think through the problem step-by-step encourages it to avoid guessing and instead calculate traffic buffers as well as the boarding time and bag drop deadline.
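In code, zero-shot CoT amounts to appending a trigger phrase, ideally with a few guardrails. The helper below is a rough sketch; the function name and the guardrail wording are assumptions you would tune for your own model.

```python
DEFAULT_TRIGGER = "Let's think it through step-by-step."


def add_cot_trigger(question: str, trigger: str = DEFAULT_TRIGGER) -> str:
    """Append a zero-shot CoT trigger phrase and short guardrails to a question."""
    guardrails = (
        "State any assumptions you make, number each step, "
        "and finish with a one-line final answer."
    )
    return f"{question.strip()}\n\n{trigger} {guardrails}"


flight_prompt = add_cot_trigger(
    "My flight leaves at 9:15 a.m. The airport is 30-45 minutes from my home. "
    "What time do I need to leave to make sure I have enough time to check my bags "
    "and grab a coffee before boarding?"
)
print(flight_prompt)
```

Asking for stated assumptions and numbered steps is what keeps the reasoning inspectable rather than vague.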

Few-shot chain-of-thought

If zero-shot prompting feels like taking a shot in the dark (because it is), you can go a bit further with a few-shot CoT prompt instead. What this looks like is pretty simple: you give the AI model a few examples of the kind of question you're asking, along with the correct answer and an explanation of the reasoning behind the logic.

In other words, you guide it on how to think through a similar problem so it can replicate the process and generate more accurate results.

Of course, the quality and relevance of the prompt you create and the examples you provide directly impact how well the model can internalize your process. That means that there may be circumstances where you might be the problem (it's you).

Example prompt:

Example Task 1: Fix "Buy Now" button on the home page. Reasoning: This is a technical failure that blocks 100% of customers trying to purchase, which directly affects revenue. Priority: Urgent Action: Assign to the Engineering lead immediately.

Example Task 2: Add new photos to "About Us" page. Reasoning: This is a cosmetic update that does not impact site functionality, sales, or user experience. Priority: Low Action: Move to backlog for the next marketing sprint.

New Task: The monthly newsletter is 2 days overdue, but the lead writer is out sick. Reasoning: ___ Priority: ___ Action: ___

Why it works: Providing examples of differing priority levels gives the AI a range to operate within to inform its answer. Additionally, requesting specific outputs (e.g., reasoning, priority, and action) compels the model to provide a logical breakdown and clear directives.
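If you keep your worked examples as data, you can assemble a prompt like this one programmatically. The following is a sketch only; the `TriageExample` class and `build_few_shot_prompt` function are hypothetical names, and the field layout simply mirrors the example above.

```python
from dataclasses import dataclass


@dataclass
class TriageExample:
    task: str
    reasoning: str
    priority: str
    action: str


def build_few_shot_prompt(examples: list[TriageExample], new_task: str) -> str:
    """Render worked examples, then the new task with empty fields for the model to fill in."""
    blocks = [
        f"Example Task {i}: {ex.task}\n"
        f"Reasoning: {ex.reasoning}\n"
        f"Priority: {ex.priority}\n"
        f"Action: {ex.action}"
        for i, ex in enumerate(examples, start=1)
    ]
    blocks.append(f"New Task: {new_task}\nReasoning:\nPriority:\nAction:")
    return "\n\n".join(blocks)


examples = [
    TriageExample(
        task='Fix "Buy Now" button on the home page.',
        reasoning="Technical failure that blocks customers from purchasing.",
        priority="Urgent",
        action="Assign to the Engineering lead immediately.",
    ),
    TriageExample(
        task='Add new photos to "About Us" page.',
        reasoning="Cosmetic update with no impact on functionality or sales.",
        priority="Low",
        action="Move to backlog for the next marketing sprint.",
    ),
]
few_shot_prompt = build_few_shot_prompt(
    examples, "The monthly newsletter is 2 days overdue, but the lead writer is out sick."
)
print(few_shot_prompt)
```

Keeping the examples in one place also makes it easier to swap in better ones when the model's output drifts.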

Automatic chain-of-thought

Automatic or auto-CoT prompting can sound a lot like few-shot CoT at first. Both rely on examples to guide the model toward better reasoning. The difference is in the "automatic" part: you're not hand-writing those examples.

Instead of manually drafting example questions (which can, admittedly, be fun in small doses), auto-CoT clusters the questions in a dataset, picks a representative question from each cluster, and generates the reasoning for it. Then, you'll follow up your examples and actual query with a zero-shot prompt so the response includes a breakdown of the logic.

Why bother automating this process? Sure, it's only a few examples for each prompt, but if you're working across workflows or are scaling agentic AI throughout your organization, manual CoT prompting could quickly become your entire job.

Here's what this looks like in practice. Say you have 10,000 customer support tickets, and you want an AI agent to help categorize and resolve them. You don't have time to write bespoke examples for every possible ticket type. But a purely zero-shot approach is risky because edge cases will wreck your accuracy.

Auto-CoT prompting can:

Analyze and sort the items in your dataset to form clusters

Select a representative problem from each cluster

Use zero-shot prompting to generate cluster-specific reasoning

Build a few-shot prompt using multiple representative problems and generated logic chains to apply to a new task

There's a lot of work happening upfront and behind the scenes, but once the system's up and running, you can speed through prompts and rapidly generate solutions for new problems.
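The four steps above can be sketched in plain Python. Treat this as a toy under stated assumptions: it clusters on word overlap (Jaccard similarity) so it runs with no dependencies, whereas a production setup would more likely cluster on embeddings, and `llm` is a hypothetical callable standing in for whatever model call you use.

```python
import re
from typing import Callable


def tokens(text: str) -> set[str]:
    """Lowercase word set used as a crude similarity signal."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster(questions: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Step 1: greedy clustering. Join the first cluster whose first member is similar enough."""
    clusters: list[list[str]] = []
    for q in questions:
        for group in clusters:
            if jaccard(tokens(q), tokens(group[0])) >= threshold:
                group.append(q)
                break
        else:
            clusters.append([q])
    return clusters


def representative(group: list[str]) -> str:
    """Step 2: pick the member most similar, on average, to its own cluster."""
    def avg_similarity(q: str) -> float:
        return sum(jaccard(tokens(q), tokens(other)) for other in group) / len(group)
    return max(group, key=avg_similarity)


def build_auto_cot_prompt(questions: list[str], new_question: str, llm: Callable[[str], str]) -> str:
    """Steps 3 and 4: generate reasoning per cluster, then assemble a few-shot prompt."""
    demos = []
    for group in cluster(questions):
        rep = representative(group)
        reasoning = llm(f"{rep}\nLet's think step-by-step.")  # zero-shot CoT per cluster
        demos.append(f"Q: {rep}\nA: {reasoning}")
    demos.append(f"Q: {new_question}\nA: Let's think step-by-step.")
    return "\n\n".join(demos)


# Invented sample tickets and a stub model so the sketch runs offline.
tickets = [
    "I was charged twice for my subscription",
    "My subscription was charged twice this month",
    "I cannot log in to my account",
    "I cannot log in after resetting my password",
]
stub_llm = lambda prompt: "1. Identify the issue. 2. Check the account. 3. Resolve."
auto_prompt = build_auto_cot_prompt(tickets, "Why was my card declined at checkout?", stub_llm)
print(auto_prompt)
```

Generated reasoning chains can be wrong, so it is worth reviewing the demonstrations before reusing them across a workflow.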

Example prompt: You will triage tickets using auto-CoT.

STEP 1: AUTO-GENERATE EXAMPLES (from the dataset below) 1) Read the "PAST RESOLVED TICKETS" dataset. 2) Infer 2-3 common issue categories from the ticket text + resolution notes. 3) Select TWO representative tickets from DIFFERENT inferred categories. 4) For each representative ticket, generate an example in EXACTLY this format:

Example 1 (Inferred category): <Category name> Reasoning: 1. <step> 2. <step> 3. <step> Action: <one sentence resolution/next step>

Example 2 (Inferred category): <Category name> Reasoning: 1. <step> 2. <step> 3. <step> Action: <one sentence resolution/next step>

STEP 2: SOLVE THE NEW USER TICKET (using the same logic style) After generating Example 1 and Example 2, solve the NEW USER TICKET in EXACTLY this format:

New User Ticket: "<paste ticket>" Reasoning: 1. <step> 2. <step> 3. <step> Action: <one sentence resolution/next step> Questions (only if required info is missing): <up to 3 questions, otherwise "None">

PAST RESOLVED TICKETS (with outcomes) [PASTE HERE]

NEW USER TICKET [PASTE HERE]

Why it works: Providing the model with relevant examples for context and process lets it follow a consistent reasoning pattern and recommend a resolution for the new ticket.
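Because the prompt demands an exact output format, you can check the response in code before acting on it. Here is a minimal sketch; it assumes each label starts its own line and shortens the "Questions" label, both of which are assumptions about how the model lays out its reply, and the function name is hypothetical.

```python
import re

LABELS = ["Reasoning", "Action", "Questions"]


def parse_triage_response(text: str) -> dict[str, str]:
    """Split a response into labeled sections; raise if a required label is missing."""
    alternation = "|".join(LABELS)
    pattern = re.compile(rf"^({alternation}):", re.MULTILINE)
    # With a capturing group, split() returns [preamble, label, body, label, body, ...].
    parts = pattern.split(text)
    sections = {label: body.strip() for label, body in zip(parts[1::2], parts[2::2])}
    missing = [label for label in ("Reasoning", "Action") if label not in sections]
    if missing:
        raise ValueError(f"Response is missing required sections: {missing}")
    return sections


# Invented sample response, for illustration only.
sample = (
    'New User Ticket: "I was charged twice this month."\n'
    "Reasoning:\n"
    "1. The ticket reports a duplicate charge.\n"
    "2. Past duplicate charges were resolved with a refund.\n"
    "Action: Refund the duplicate charge.\n"
    "Questions: None"
)
sections = parse_triage_response(sample)
print(sections["Action"])  # Refund the duplicate charge.
```

A response that fails to parse is a useful signal in itself: the model ignored the format, so its reasoning deserves a closer look.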

Multimodal chain-of-thought

Multimodal or M-CoT prompting is an all-star, able to process strings of text, datasets, and visual information. The previous approaches only work with the information you provide in text; M-CoT prompting can read images, too.

In any automated workflow, you might be dealing with PDF invoices, UI screenshots, handwritten forms, and more. A text-only model might try to "guess" what's in an image based on its metadata. An M-CoT model, on the other hand, can actually identify visual features (like the location of a total amount on a receipt) and describe them as part of its logical chain.

Processing visual information is a huge asset; instead of manually transcribing information from an image, the AI can actually identify what's present, where it's located, and how it relates to the file as a whole.

Example prompt: Here is a screenshot of our homepage. The site is not loading correctly. What's wrong, and what should our next steps be? Let's think step-by-step.

Why it works: An M-CoT workflow reduces the amount of manual effort necessary to process and evaluate visual information because the AI can do it for you. Paired with a zero-shot phrase, this approach can quickly and accurately break down visual and text data.

Benefits of CoT

Sure, CoT prompting requires some extra work drafting examples or preparing datasets, but wouldn't you rather put in a little extra effort for better, repeatable results?

In a real-life conversation, it's the difference between "word vomit" and a thoughtful apology to your high school (to paraphrase the great philosopher Lindsay Lohan).

Compared to standard prompting, CoT prompting often generates results with better:

Accuracy: Telling an AI to show its work can reduce the risk of AI hallucinations on multi-step problems, resulting in more accurate outputs. That's the biggest advantage of using CoT prompts: you often get better results.

Multi-step reasoning: With standard prompting, AI can falter on multi-step problems, skipping over questions or prioritizing the wrong factors, but CoT prompting sets it up to process and address every part of the prompt in the order given.

Transparency: By providing a breakdown of its reasoning process, AI hands over a self-generated audit trail, so even if it gives an incorrect response, you can see exactly where it went wrong and more accurately redirect it.

Repeatability: If you reuse the same CoT prompt structure with the same model, there's a higher chance that the AI will apply the same reasoning to similar prompts, which makes your results more consistent over time.

Scalability: The simpler forms of CoT prompting can provide great results at a lower cost. A zero-shot trigger phrase adds only a few tokens to the prompt (though the response itself will be longer), and frontloading directions can draw better responses even from smaller AI models.

Limitations of CoT

Generative AI systems like ChatGPT typically work by producing near-instantaneous responses to simple questions, but that doesn't always mean they're correct.

Using CoT prompting forces the AI to slow down and work through the problem rather than churning out the first thing it finds, but it's not a universal solution. You can't apply the quadratic formula to every algebra problem, after all.

If you're deciding whether you should use CoT prompting, consider the following:

Token cost and latency: This approach requires a lengthier output, which can increase how long it takes and how many tokens it costs to respond.

Hallucinated reasoning: There's a chance that the AI you're using may invent false information as part of its reasoning process. Sometimes the model may even reach the correct conclusion through flawed steps, so it's important to vet both the response and the rationale.

Simple retrieval: For easy answers and quick retrievals, CoT is overkill, adding excessive background information and processing time without improving the accuracy of the response.

Phrasing sensitivity: The language you use matters, and some models may be more sensitive to how you phrase your prompt. You may need to test different trigger phrases and standardize your prompts to get consistent results.
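One way to test trigger phrases is to run each against a small set of questions with known answers and compare hit rates. The harness below is a sketch, not a benchmark: `model` is a hypothetical callable for your own model call, the stub's behavior is invented so the code runs offline, and substring matching is a crude correctness check.

```python
from typing import Callable


def compare_triggers(
    cases: list[tuple[str, str]],
    triggers: list[str],
    model: Callable[[str], str],
) -> dict[str, float]:
    """Return, per trigger phrase, the fraction of cases whose reply contains the expected answer."""
    scores: dict[str, float] = {}
    for trigger in triggers:
        correct = sum(
            1
            for question, expected in cases
            if expected in model(f"{question}\n\n{trigger}")
        )
        scores[trigger] = correct / len(cases)
    return scores


def stub_model(prompt: str) -> str:
    # Stand-in for a real model call; this behavior is invented, not measured.
    return "Final answer: $400" if "step-by-step" in prompt else "Final answer: $500"


cases = [(
    "A project has a budget of $1,000. We spend $200 on software and $300 on equipment. "
    "If we spend 20% of the remaining balance on a team lunch, how much is left?",
    "$400",
)]
triggers = ["Let's think it through step-by-step.", "Solve this logically."]
scores = compare_triggers(cases, triggers, stub_model)
print(scores)
```

With a real model, you would want far more than one case per trigger and several runs each, since responses can vary between calls.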

Chain-of-thought use cases

CoT is most effective in workflows where a "gut reaction" isn't enough. Instead, the model has to navigate rules, math, or multi-step logic to reach a reliable outcome.

Sales

Sales teams can use CoT to move beyond simple data collection to intelligent, automated lead qualification. Instead of just checking if a lead filled out a form, CoT allows an AI to act as a junior researcher, weighing the data against specific intent signals to determine if a prospect is actually ready for a sales call.

Sales use case

Example prompt: Evaluate incoming leads and determine their priority. Before giving the score, think through the lead's potential step-by-step across company size, current technology, and recent funding.

Explanation: A standard filter might normally ignore a lead from a small company. Using CoT, the AI can think through the context to better vet the prospect. That reasoning might look like:

The company just raised a Series B.

They are currently using a competitor's tool.

Therefore, they are a high priority despite their current headcount.

Marketing

CoT prompting can be especially useful in managing multiple objectives at once, like making sure a new blog post is simultaneously SEO- and AEO-optimized, matches a specific brand voice, and addresses a trending topic.

Marketers can also use CoT prompting to plan, evaluate, and refine their strategies. A standard prompt asking why a campaign didn't generate enough leads will likely produce generic guesses or outright hallucinations, because the model has nothing concrete to reason from. A CoT prompt that gives more context and examples could provide actionable insights.

Marketing use case

Example prompt: Repurpose the attached webinar transcript into 5 high-impact LinkedIn posts. To ensure these posts convert, work through the following reasoning steps before writing the copy:

Extract key takeaways: Identify exactly five distinct "aha moments" or tips from the transcript that solve a specific problem.

Map to pain points: For each takeaway, explain why it matters to an Operations Manager.

Establish a provocative hook: For each post, brainstorm a hook that challenges the status quo (e.g., "Your spreadsheets aren't the problem; your process is"). Avoid generic summaries.

Draft the posts: Using the logic from the steps above, write the final copy for the 5 posts.

Explanation: By encouraging the AI to move step-by-step through the process, you can identify, target, and draft five unique, conversion-focused posts that feel like an expert strategist wrote them.

The logic breakdown can also give insights if an output doesn't meet the mark—like if the AI doesn't have a fundamental grasp of what your target audience cares about.

IT

IT teams may already use AI to generate report tickets when an issue occurs, but that's only the beginning. With CoT prompting, IT teams can get a head start on solving the problem.

A CoT-enabled AI would notice the issue and start a ticket, but before reporting to the human techs, it can think through possible causes, offering an initial diagnosis based on set examples and rules.

So instead of spending precious time trying to figure out why a site's down or a password won't work, the IT team can skip the triage and work on a solution.

IT use case

Example prompt: Diagnose the root cause of the following API alert and prepare a summary for the Engineering team. Act as a Tier 1 Support Engineer. First, perform a digital "triage" by thinking through these steps:

Interpret the error code: Look at the status code provided in the alert. Is it a client-side issue (like a 401 or 403) or a server/traffic issue (like a 429 or 500)?

Check subscription status: Access the provided client metadata. Is their account active, or has their subscription lapsed?

Evaluate system health: Cross-reference the alert with the current "Internal Status Page" log to see if there is an outage.

Synthesize and solve: Based on the steps above, identify the most likely root cause and suggest next steps for the IT team.

Explanation: Providing a clear order of operations guides the AI step-by-step through diagnostics, which can help avoid initial hallucinations such as assuming the server is down. So instead of reporting that some unidentifiable thing is wrong with no real direction, the AI can be more helpful and point the IT team in the right direction.
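The first triage step is deterministic enough that you could run it in code and hand the result to the model as context. This is a small sketch in Python; the function name and category labels are assumptions, and the status code groupings follow standard HTTP semantics.

```python
def classify_status(code: int) -> str:
    """Map an HTTP status code to a coarse triage category."""
    if code in (401, 403):
        return "client: authentication or permission problem"
    if code == 429:
        return "traffic: rate limit exceeded"
    if 400 <= code < 500:
        return "client: other request error"
    if 500 <= code < 600:
        return "server: internal error or outage"
    return "not an error status"


for code in (401, 429, 500):
    print(code, "->", classify_status(code))
```

Passing the category into the prompt means the model starts its reasoning from a verified fact instead of its own reading of the error code.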

HR

You probably shouldn't let an AI mediate conflicts between coworkers or handle the hiring process from start to finish. But there's room for CoT prompting to help with HR processes without dipping too much into sensitive topics.

In recruiting and hiring, for example, a CoT-enabled AI could do more than scan applications for relevant keywords. It might instead evaluate the context of a candidate's experience and the sentiment of their cover letters to provide a more empathetic evaluation.

Hiring managers who get thorough, intelligent recommendations may have an easier time narrowing down candidates for screenings and interviews.

HR use case

Example prompt: Evaluate the following candidate for the "Senior Backend Engineer" role. Before making a recommendation, think through these specific criteria:

Analyze growth context: Look at the candidate's tenure. Does their progression at high-growth startups suggest they've gained "Senior" level experience faster than the traditional timeline?

Technical alignment: Map their specific Python achievements against our technical needs. Are they solving "Senior-level" problems?

Evaluate soft skills: Look at their non-work activities. What do their volunteer or personal projects reveal about their leadership and collaboration style?

Final verdict: Provide a recommendation based on the total evidence rather than just the year count.

Explanation: Rather than dismissing candidates based on individual criteria (such as years of tenure), the AI can more accurately evaluate the context of their qualifications and achievements as they relate to the job description. The result is a more human-centric recommendation that can flag what's lacking while still surfacing evidence of a strong fit.

Take advantage of chain-of-thought prompting with Zapier

Learning the quadratic formula after only knowing guess-and-check is like receiving a well-labeled map after stumbling around your town, and learning how to use chain-of-thought prompting can have a similar effect.

You'll go from throwing spaghetti at a wall to having a repeatable, reliable framework for solving even the most variable-heavy problems. By encouraging your AI models to show their work, you transform your automated workflows into sophisticated engines that can handle nuance, math, and multi-step reasoning without breaking a sweat.

Implementing CoT prompting can help you graduate from simple prompts to advanced orchestration, and you can start optimizing your entire tech stack by building with Zapier's AI tools. Whether you're training Zapier Agents to act as specialized teammates or adding AI steps to deterministic workflows, CoT prompting can get you where you need to be faster.
