Trusting a new AI agent you just released can take time. You run it through your work data and watch it closely for days and weeks, always judging whether it's working for you or against you. Just when you're starting to relax and enjoy the productivity boost, the AI provider launches a model update: the responses have shifted, your instructions get interpreted differently, and you're back to zero trust. So… progress?

This is normal, as AI needs maintenance like any other tool or product.

I've been structuring and simplifying the fundamentals to tune an AI agent I'm building. At the same time, I've been developing a repeatable process so I can improve all my future AI agents too. Here, I'll share that framework, so you can help your bots stay fresh and powerful.

Table of contents:

Part 1: Preparation

Part 2: Finding solutions

Part 3: Implementation

AI agent tips and additional guidance

Part 1: Preparation

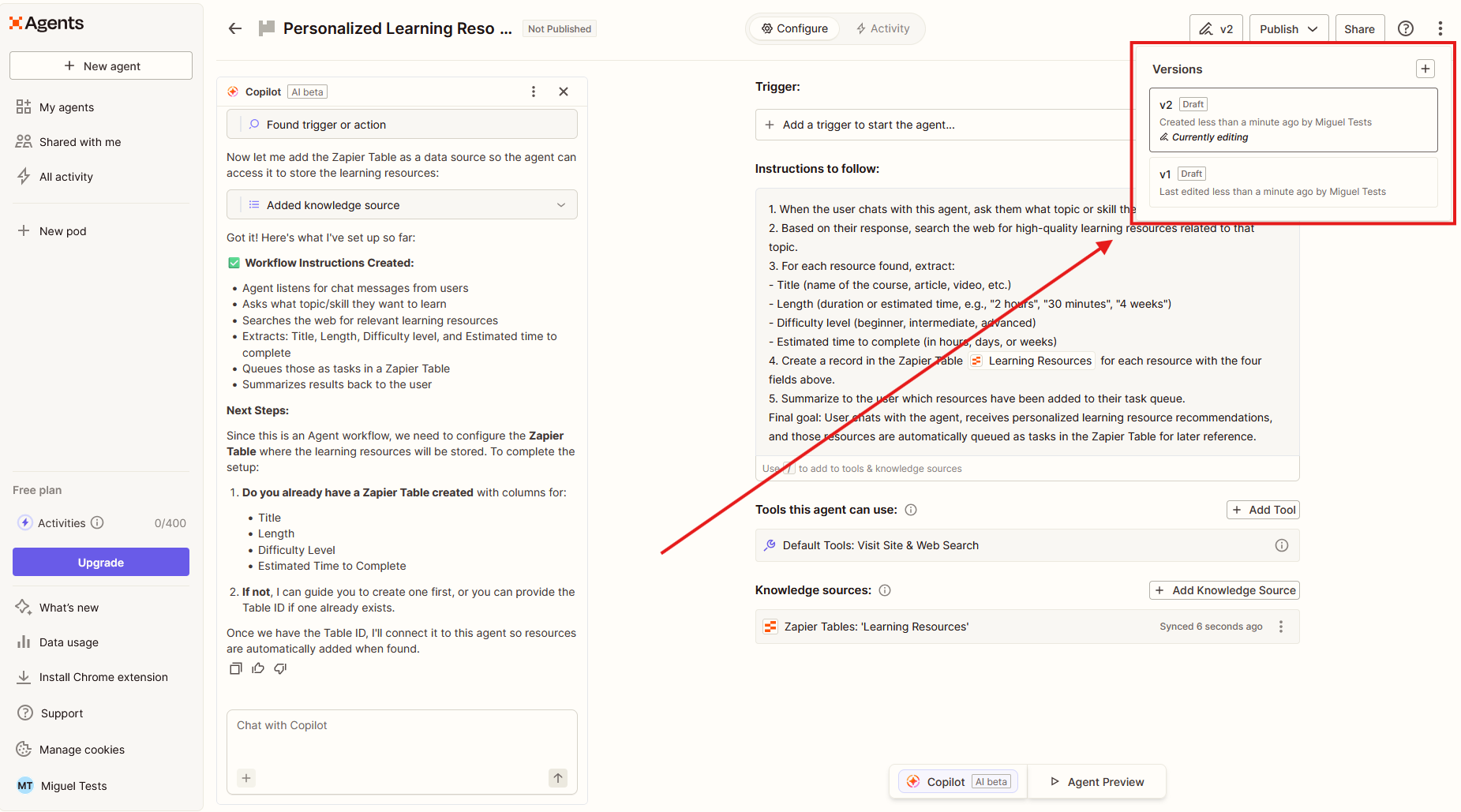

Add version control and set up a sandbox

Version control sounds boring, but saving and naming your AI agent versions will save you a lot of trouble as you update it over time. Without it, you can't easily collaborate with other builders on your team, and you risk reintroducing bugs that you previously fixed.

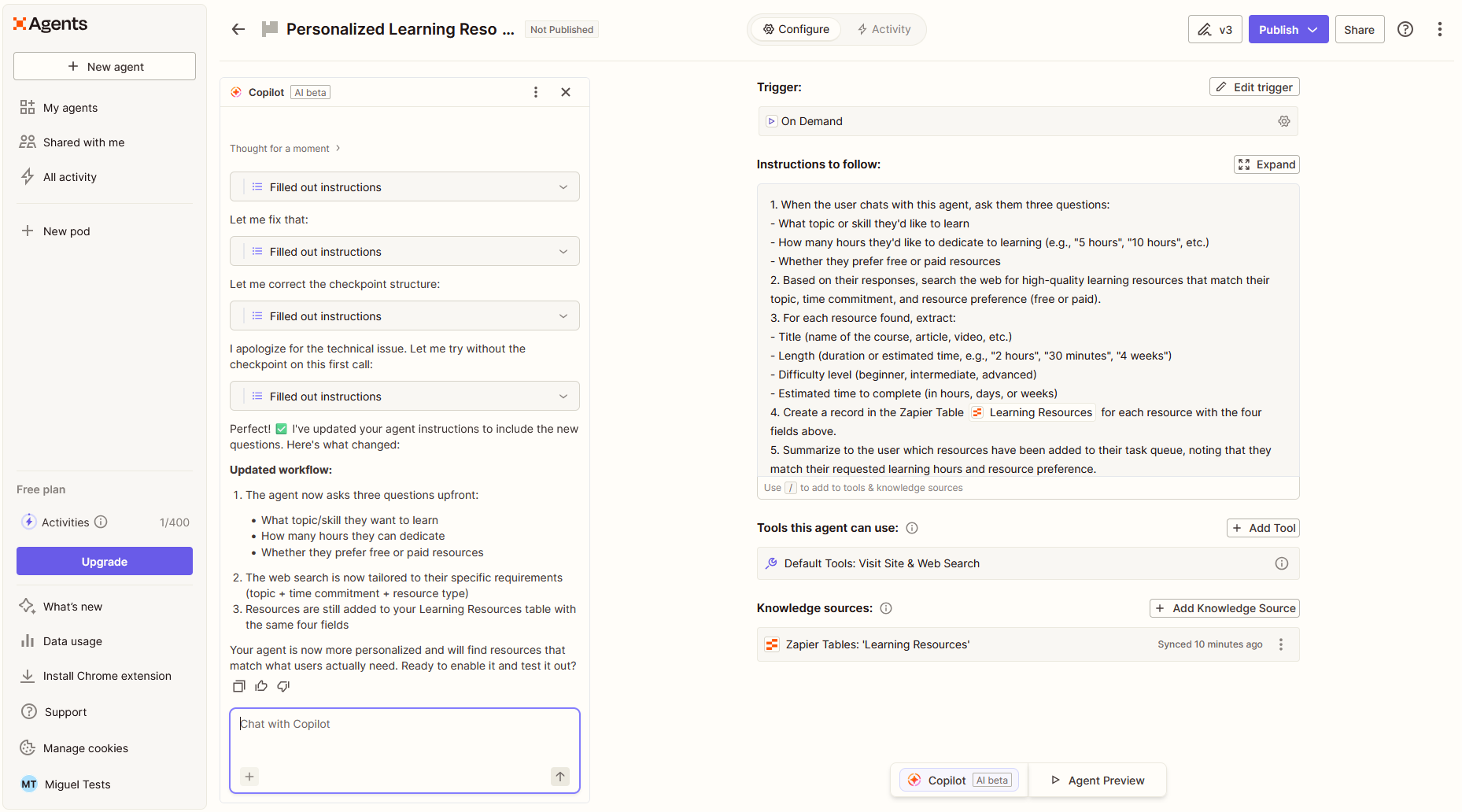

Some AI agent builders, like Zapier Agents, offer built-in version control. This is ideal. If yours doesn't, save every part of your configuration in a single source of truth. Here are the kinds of things you'll want to track:

Which AI model you're using

Any system instructions

A list of connected tools

Knowledge base version (including the versions of each document included in it)

Any other elements that change your agent's behavior when added, changed, or removed

If this is your first time adding version control, you can keep it simple and set it to v1.0.0. Later in this guide, you'll learn how to increment versions and what to keep track of.

Lastly, before making changes, duplicate anything you're planning to work on for the new version. This will be your sandbox to play around and test without breaking the current version. If you have multiple builders in your team, you can create one for each person to brainstorm approaches, and pick the best candidate for release as you wrap up.
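If your builder doesn't have version control, the single source of truth can be as small as one structured record per version. Here's a minimal sketch of that idea in Python. The class name, field names, and the `fingerprint` helper are my own illustrative choices, not part of any platform's API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field, replace


@dataclass(frozen=True)
class AgentConfig:
    """One saved version of an agent's configuration (field names are illustrative)."""
    version: str                      # e.g. "1.0.0"
    model: str                        # which AI model the agent uses
    system_instructions: str
    tools: tuple[str, ...] = ()       # names of connected tools
    knowledge_base: dict[str, str] = field(default_factory=dict)  # document -> document version

    def fingerprint(self) -> str:
        """Short hash of everything except the version label, to spot untracked changes."""
        payload = asdict(self)
        payload.pop("version")
        blob = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()[:12]


# The live version, plus a duplicate to use as a sandbox (all values are placeholders).
live = AgentConfig(
    version="1.0.0",
    model="example-model",
    system_instructions="Be concise.",
    tools=("search",),
    knowledge_base={"faq.md": "3"},
)
sandbox = replace(live, system_instructions="Be concise and cite your sources.")
```

Comparing `live.fingerprint()` with `sandbox.fingerprint()` tells you whether the sandbox has drifted from the released version, which is a handy reminder that a changelog entry is due.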

Set objectives and a scorecard

Like with any project, you start by setting where the finish line should be. The very first step is deciding what you're improving:

Model fails to reply on-target? Focus on accuracy.

The voice is off-brand? Focus on tone and style.

Tool calls and actions got unpredictable? Get ready to dive into schemas, MCP, and APIs.

With your objective in mind, create a scorecard. This will help you rank responses, separating useful results from bad outputs. There are two main rubrics in the scorecard.

Rubric 1: Dealbreakers. These are pass/fail conditions. If the model hallucinates, ignores a critical instruction, violates safety or compliance, or uses a tool incorrectly, these are instant fails.

Rubric 2: Quality scoring. Each metric is scored on a 0 to 2 scale to help you identify how severe a problem is. Here are a few examples you can use as a base, but you can include your own quality metrics for what matters in your use case.

| Metric | 0 score | 1 score | 2 score |
|---|---|---|---|
| Correct and complete | Incorrect or incomplete | Partially correct, missing some elements | Correct and complete |
| Grounded | Guessing or fabricating facts while ignoring provided data | Uses some facts from the data, but misinterprets or fills the gaps on its own | Clearly based on provided information |
| Helpful and clear | Confusing, unclear, difficult to follow | Acceptable, but could leave users with unanswered questions, doubts, or trigger escalations | Actionable and easy to follow |
| Tone, format, and brand | Off-brand, poorly formatted, difficult to digest | Off-tone, mixed formatting, creates friction | On-brand, well-structured, engaging |

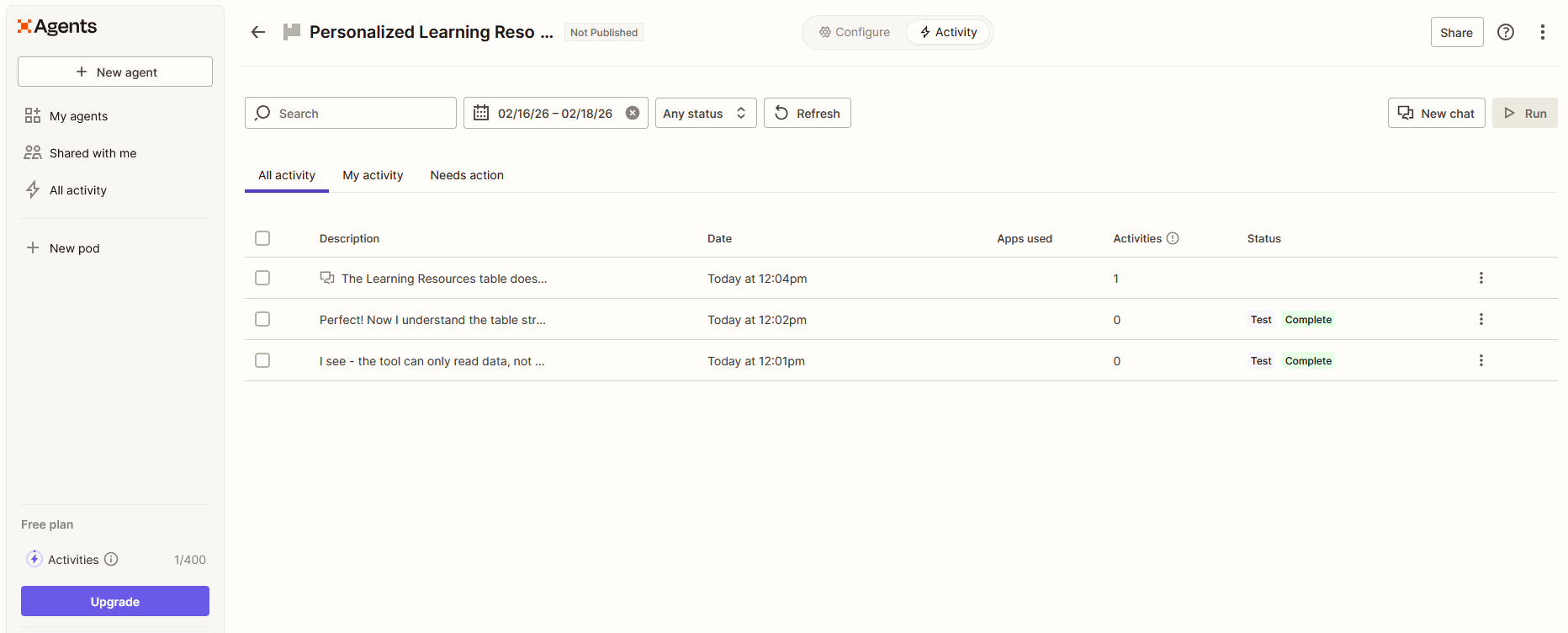
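If you prefer to keep the scorecard in code rather than only in a spreadsheet, the two rubrics can be sketched roughly like this. The metric identifiers and the `grade_response` helper are assumptions I made up for illustration; rename them to match your own scorecard.

```python
from dataclasses import dataclass
from typing import Optional

# Metric names mirror the example metrics in this section; swap in your own.
QUALITY_METRICS = ("correct_and_complete", "grounded", "helpful_and_clear", "tone_format_brand")


@dataclass
class Grade:
    dealbreaker_failed: bool
    quality: dict[str, int]  # metric -> 0, 1, or 2 (empty when a dealbreaker failed)

    def total(self) -> int:
        return sum(self.quality.values())


def grade_response(dealbreaker_failed: bool, quality: Optional[dict[str, int]] = None) -> Grade:
    """Apply rubric 1 first; only record rubric 2 scores when the response passed."""
    if dealbreaker_failed:
        return Grade(dealbreaker_failed=True, quality={})
    quality = quality or {}
    missing = [m for m in QUALITY_METRICS if m not in quality]
    if missing:
        raise ValueError(f"Missing quality scores: {missing}")
    bad = {m: s for m, s in quality.items() if s not in (0, 1, 2)}
    if bad:
        raise ValueError(f"Scores must be 0, 1, or 2: {bad}")
    return Grade(dealbreaker_failed=False, quality=dict(quality))
```

Validating the scores up front is a small design choice that keeps typos (a stray 3, a forgotten metric) out of the data you'll sort and compare later.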

Collect outputs

Time to collect agent responses. Gather 20 to 50 recent responses from conversations or agent runs: enough to get good results without overwhelming yourself with data. Make sure this response set reflects the full range of what users are asking; otherwise, you'll optimize for a narrow range of uses and make the agent inflexible.

For single-turn Q&A chatbots and simple agentic AI tools (those that only run a couple of tool calls for every user prompt), pairs of user prompts and agent responses work fine. But if you're running a full AI agent that reasons over multiple turns, makes lots of tool calls, and is embedded in a multi-agent system, you need full chat/run threads.

In either case, store everything in Zapier Tables or another spreadsheet or database tool and add this metadata to each message or run to keep things organized:

User message(s) and agent response pairs

Timestamp

Agent version (add 1.0.0 if you're just starting)

Tool calls executed in the run and tool outputs

Retrieved knowledge base chunks to ground the response

Outcome: did this solve the request

User feedback (if your AI agent platform supports upvotes/downvotes on a response)
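If you're exporting runs with a script, the metadata above maps naturally onto one record per message or run. This is a rough sketch only: the `RunRecord` class and its field names are hypothetical, and `to_row` simply flattens everything to strings so it fits a spreadsheet or table row.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class RunRecord:
    messages: list[tuple[str, str]]                 # (user message, agent response) pairs
    timestamp: datetime
    agent_version: str = "1.0.0"                    # default for a first tracked version
    tool_calls: list[dict] = field(default_factory=list)        # calls executed and their outputs
    retrieved_chunks: list[str] = field(default_factory=list)   # knowledge base chunks used
    solved: Optional[bool] = None                   # outcome: did this solve the request?
    user_feedback: Optional[str] = None             # e.g. "up" or "down", if supported

    def to_row(self) -> dict[str, str]:
        """Flatten the record into strings for a spreadsheet or database row."""
        return {
            "messages": json.dumps(self.messages),
            "timestamp": self.timestamp.isoformat(),
            "agent_version": self.agent_version,
            "tool_calls": json.dumps(self.tool_calls),
            "retrieved_chunks": json.dumps(self.retrieved_chunks),
            "solved": "" if self.solved is None else str(self.solved),
            "user_feedback": self.user_feedback or "",
        }


record = RunRecord(
    messages=[("Where is my order?", "It ships tomorrow.")],
    timestamp=datetime(2025, 1, 2, 3, 4, 5, tzinfo=timezone.utc),
    solved=True,
)
row = record.to_row()
```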

Grade outputs and find top problems

Add the grading columns from your scorecard to the spreadsheet: one for the pass/fail dealbreakers and one for each quality metric (correct and complete, grounded, etc.). Go over every response: did the agent pass or fail? If it passed, add the 0-2 quality scores. Keep going until all of them are graded.

Next, prioritize the responses based on:

Severity. If a response causes harm, triggers a compliance issue, or is risky in any way, put it at the top of the list. These usually fail on the dealbreakers, so they're easy to spot on the spreadsheet.

Frequency. Problems that keep showing up repeatedly go second on the list.

Business impact. These are the outputs tied to improving productivity, unlocking opportunities, or otherwise creating business impact.

Looking at your graded list, you can get a good sense of where to focus. At first, you might have plenty of high-severity rows; however, as you improve your agent, you'll find yourself moving on to frequent issues and then to business impact.
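The severity, frequency, business impact ordering can also be expressed as a sort key. The sketch below assumes each graded row has three columns that I'm inventing for the example: `dealbreaker_failed` (a proxy for severity), `issue` (a short label you assign while grading), and `business_impact` (your own 0-2 estimate).

```python
from collections import Counter


def prioritize(rows: list[dict]) -> list[dict]:
    """Order graded rows by severity, then issue frequency, then business impact."""
    frequency = Counter(row["issue"] for row in rows)
    return sorted(
        rows,
        key=lambda row: (
            not row["dealbreaker_failed"],   # False sorts first, so failures land on top
            -frequency[row["issue"]],        # more frequent issues next
            -row["business_impact"],         # then higher business impact
        ),
    )


rows = [
    {"id": 1, "dealbreaker_failed": False, "issue": "too_chatty", "business_impact": 1},
    {"id": 2, "dealbreaker_failed": True, "issue": "hallucination", "business_impact": 0},
    {"id": 3, "dealbreaker_failed": False, "issue": "too_chatty", "business_impact": 2},
    {"id": 4, "dealbreaker_failed": False, "issue": "wrong_tool", "business_impact": 2},
]
ordered_ids = [row["id"] for row in prioritize(rows)]  # [2, 3, 1, 4]
```

The hallucination row comes first because it failed a dealbreaker, the two "too chatty" rows follow because that issue shows up most often, and business impact breaks the tie between them.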

Build a test suite

Since you have your graded list at hand, this is a good time to save responses to help you test your AI agent at the end of every future project, so you can be sure the problems you're solving aren't coming back.

From your original responses list, create three other lists with:

10 happy-path responses, where the agent successfully responded to and solved critical tasks

10 worst-case responses, a list of the messiest and most broken outputs with the worst scores

10 red-team responses, which cover sensitive topics, edge cases, or inputs that try to break your system

These lists will become your test suite. You can put these lists away for now—we'll come back to them later.
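As a starting point, the three lists can be drafted automatically from the graded rows and then curated by hand. This sketch assumes columns I've made up for illustration (`dealbreaker_failed`, `quality_total`, and a `red_team` flag you set manually); picking happy-path rows by score is only an approximation of "solved a critical task," so review the result.

```python
def build_test_suite(rows: list[dict], size: int = 10) -> dict[str, list[dict]]:
    """Draft happy-path, worst-case, and red-team lists from graded rows."""
    red_team = [r for r in rows if r.get("red_team")][:size]
    rest = [r for r in rows if not r.get("red_team")]

    # Happy path: responses that passed the dealbreakers, best scores first.
    passed = [r for r in rest if not r["dealbreaker_failed"]]
    happy_path = sorted(passed, key=lambda r: r["quality_total"], reverse=True)[:size]

    # Worst case: everything else, with dealbreaker failures ranked below any score.
    happy_ids = {id(r) for r in happy_path}
    remaining = [r for r in rest if id(r) not in happy_ids]
    worst_case = sorted(
        remaining,
        key=lambda r: -1 if r["dealbreaker_failed"] else r["quality_total"],
    )[:size]

    return {"happy_path": happy_path, "worst_case": worst_case, "red_team": red_team}
```

Rows already chosen for the happy path are excluded from the worst-case list, so a small response set doesn't end up with the same entry in both.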

Part 2: Finding solutions

Brainstorm approaches

Some problems are pretty straightforward: you look at your grading list and instantly know this is a knowledge base issue or a tool call that's going off track. In these cases, you can jump in right away and start building. But I've also found situations where the problem is hard to diagnose: it could be two documents with conflicting information, but it could also be that my system instructions need a revamp.

For harder problems, I like to brainstorm potential solutions before jumping into building, as each attempt is part finding the correct diagnosis, part getting to the solution. At this stage, I make a simple list of issues I found in the grading list and how I'm planning to address them. Here's an example from the agent I'm working on right now:

Agent is too chatty:

• Change system instructions to control length

• Check model API settings to see if there are verbosity controls

• Adjust max output length in API (last resort)

When switching from chat mode A to chat mode B, the agent loses memory:

• Check system instructions for both conversation modes to understand if/how they limit topics

• Check if my system is sending the full conversation thread to the AI provider

Agent fails all tool calls that involve adding a new recommendation to a user's account:

• Check the tool call schema to see if all details are correct

• Check my system's API permissions to see if they accept agent calls

If you look at your graded list and don't know where to start, here are a few leads to help you brainstorm:

| Issue | Potential causes and solutions |
|---|---|
| Hallucinations and wrong/fabricated facts | • Connect a knowledge base (RAG) to your agent and load it with your documents and data. • If you already have a connected knowledge base, re-check the document content to see if there's conflicting or incorrect information. • If your agent has to process a lot of data, consider switching to a model with a larger context window. |
| Unpredictable tool use | • Check if tool descriptions are too similar and confusing the model as to which is best for each task. • Models with lots of connected tools (more than 15-20) can become more unpredictable when picking the best one. Consider splitting the functionality into 2 agents or into a multi-agent system. • Consider if the model you're using has high enough intelligence to understand the nuance of user commands. Smaller models can sometimes struggle and need more direct commands. |
| Unpredictable or failed interactions with external systems | • Check connected tool descriptions to make sure the model understands the purpose of the tool and that it knows how to fill each parameter correctly. • Limit access privileges for the AI agent in your API endpoints to prevent unintended CRUD operations. |
| Verbose or off-brand | • You're using a model tuned for chattiness. Consider switching to a different model or adjusting settings. • Adjust verbosity settings in the model API. • Adjust system instructions to control response length, tone, and style. • Compress your tone and style instructions to the essentials, as longer instructions can sometimes invite unpredictable behavior. • Consider setting a maximum output token limit in the API settings to force shorter outputs. • Consider adjusting temperature or top-k (not both at the same time) to reduce how much each response varies. |
| High token usage | • Check all inputs for lots of text being passed to the model: system instructions, knowledge base chunking length/overlap, user prompts. • If available, check the reasoning strength API setting: higher settings consume more tokens. • If your tool needs to support long chats, consider summarizing the conversation as it progresses instead of always sending the full thread. |

Start building and experimenting

You have your brainstorming list; it's time to execute. Starting with the first line of your list, make changes to your setup, and keep the build/test loop tight: every time you make meaningful progress, test it by feeding 5-10 response examples from your graded list, and see how the agent behaves. This approach is good for two reasons:

If there's an error or something that doesn't look right, it's easy to reverse it.

You're building your understanding of the system as much as you're building a tool. Once it's clear in your mind, you usually get to the solution surprisingly fast.

Keep going through the list, and take notes as you go. If you get stuck or if you run out of potential solutions, review your notes and come up with more hypotheses. For really hard problems, shorten the build/test loop even more so you get as much understanding as possible over every change you're making.

You know you've reached a result when the agent's responses consistently pass the dealbreaker rubric and score the maximum of 2 on every quality metric across 5-10 tests.
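That stopping rule is simple enough to write down as a check. In this sketch, each test result is a `(dealbreaker_failed, quality_scores)` pair, which is a data shape I'm assuming for the example rather than anything your platform exports.

```python
def is_solved(grades: list[tuple[bool, dict[str, int]]], min_tests: int = 5) -> bool:
    """True when every test passed the dealbreakers and scored 2 on every quality metric."""
    if len(grades) < min_tests:
        return False  # not enough tests to call the result consistent
    return all(
        not failed and bool(quality) and all(score == 2 for score in quality.values())
        for failed, quality in grades
    )


perfect = (False, {"grounded": 2, "tone_format_brand": 2})
almost = (False, {"grounded": 1, "tone_format_brand": 2})
```

With these values, `is_solved([perfect] * 5)` is `True`, while four perfect runs plus one `almost` is `False`, so a single weak response keeps you in the build/test loop.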

Run your test suite

Once you find a viable solution that does well during your build/test loop, try to break it with your test suite. Grab your happy-path, worst-case, and red-team lists, and run all the inputs to check that the agent still:

Completes all the tasks in the happy-path list accurately

Responds appropriately (or at least shows improvement) on the worst-case list

Doesn't fail any of the red-team tests

You can grade the responses just like you did at the start to have an objective metric to understand what counts as an improvement. If the agent fails these tests, keep changing your configuration and re-running these tests until the scores improve.

While you may be forced to go back to the drawing board when you run the agent against the test suite, this ensures that you're actually making progress across versions, not just fixing some issues while introducing (or re-introducing) others.
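To make "actually making progress across versions" concrete, you can compare the graded results of the current version against the candidate. The sketch below is one possible reading of the three checks, under assumptions I'm making for illustration: each grade is a dict with `dealbreaker_failed` and `quality_total`, a happy-path task counts as completed if it passes the dealbreakers, and "shows improvement" means a higher average score on the worst-case list.

```python
def _score(grade: dict) -> int:
    # A dealbreaker failure counts as zero, whatever the quality scores say.
    return 0 if grade["dealbreaker_failed"] else grade["quality_total"]


def _mean(grades: list[dict]) -> float:
    return sum(_score(g) for g in grades) / len(grades) if grades else 0.0


def suite_verdict(baseline: dict[str, list[dict]], candidate: dict[str, list[dict]]) -> dict[str, bool]:
    """Compare graded test-suite runs of the current version and the candidate."""
    checks = {
        "happy_path": all(not g["dealbreaker_failed"] for g in candidate["happy_path"]),
        "worst_case": _mean(candidate["worst_case"]) > _mean(baseline["worst_case"]),
        "red_team": all(not g["dealbreaker_failed"] for g in candidate["red_team"]),
    }
    checks["release_candidate"] = all(checks.values())
    return checks
```

Keeping the three checks separate in the result makes it obvious which list sent you back to the drawing board.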

Optional: use AI to evaluate your agent's responses

As you grade your test results, you'll learn how to articulate what you're looking for and what counts as a bad output. At this point, you can use AI to scale testing, using it to evaluate responses quickly so you can spend more time building.

Document the reasoning behind your scores. What must be in the output for "grounded" to be a 2 instead of a 1? What counts as on-brand? Write down the criteria in a system prompt, including examples, and save it to a GPT in ChatGPT, for example. Then, every time you run your agent, send the responses to your AI evaluator GPT and see if it reproduces your judgment pattern accurately.

Beyond speeding up development, you can use this AI evaluator on the responses of the live tool in the future. Export a list of latest responses, run them through the evaluator, and read what it tells you. This can help you detect if your agent is drifting again and needs to be improved soon, or if you can focus on other projects.
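Before trusting the evaluator on live responses, it helps to measure how often it agrees with your own grades. Here's a minimal sketch that compares two lists of scores for the same responses; it doesn't call any AI provider, and the function name and data shape are assumptions for illustration.

```python
from collections import defaultdict


def agreement_by_metric(human_grades: list[dict[str, int]], ai_grades: list[dict[str, int]]) -> dict[str, float]:
    """Share of responses where the AI evaluator gave the same score as the human, per metric."""
    if len(human_grades) != len(ai_grades):
        raise ValueError("Both lists must grade the same responses in the same order")
    matches: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for human, ai in zip(human_grades, ai_grades):
        for metric, score in human.items():
            totals[metric] += 1
            matches[metric] += int(ai.get(metric) == score)
    return {metric: matches[metric] / totals[metric] for metric in totals}


human = [{"grounded": 2, "tone": 1}, {"grounded": 1, "tone": 1}]
ai = [{"grounded": 2, "tone": 2}, {"grounded": 1, "tone": 1}]
report = agreement_by_metric(human, ai)  # {"grounded": 1.0, "tone": 0.5}
```

A low agreement rate on one metric usually means the criteria for that metric in the evaluator's system prompt need clearer wording or better examples.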

Part 3: Implementation

Write a changelog

Now that you've found and validated your solution, it's time to save what changed. Pick your favorite workspace app, open a new folder to organize all your changelogs, and increment the version of your AI agent using this framework:

Increase the main version number for large changes that drastically change the way the agent works and behaves. Example: v1.0.0 > v2.0.0

Increase the middle number for noticeable but not radical changes. Example: v1.0.0 > v1.1.0

Increase the last number for bug fixes and very small changes. Example: v1.0.0 > v1.0.1
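The three rules above can be captured in a tiny helper so nobody on the team has to remember which number to bump. The function name and the `"major"`/`"minor"`/`"patch"` labels are my own naming for the three cases.

```python
def bump_version(version: str, change: str) -> str:
    """Return the next version string for a 'major', 'minor', or 'patch' change."""
    major, minor, patch = (int(part) for part in version.lstrip("v").split("."))
    if change == "major":      # large changes to how the agent works and behaves
        return f"v{major + 1}.0.0"
    if change == "minor":      # noticeable but not radical changes
        return f"v{major}.{minor + 1}.0"
    if change == "patch":      # bug fixes and very small changes
        return f"v{major}.{minor}.{patch + 1}"
    raise ValueError(f"Unknown change type: {change!r}")
```

For example, `bump_version("v1.0.0", "major")` returns `"v2.0.0"`, and `bump_version("v1.0.0", "patch")` returns `"v1.0.1"`. Note that the lower numbers reset to zero whenever a higher one increases.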

After the version number, add a short title of the major changes so you can see what each version is about with just a glance. Lastly, 2-5 bullet points of the major changes will do wonders to give more context. Keep it simple but descriptive enough. Here's an example of a simple changelog from my project:

| v1.7.2 - model upgrade to GPT-5.1 |
|---|
| • New AI model: GPT-5.1 • Adjusted responses to be shorter, both in the model's API settings and in the system instructions • The system instructions for all chat modes were updated to reflect the new model's higher intelligence • Input/output token values are now tracked in our database, with two new reports available for total token usage and cost per user |

Go live with the new tool

You're ready to launch. Replace the access links to the old version with the new one, including any embeds in internal tools. Email the changelog to your coworkers to let them know what's new, and invite their feedback.

Make it repeatable

More than a one-time fix, you want to build a system that'll help you improve your AI agent over time as circumstances change. With that in mind:

Create a simple feedback form so users can flag problems as they encounter them. This makes it easier to grade response lists in the future and find new quality metrics to optimize for.

Mark a review on your calendar. For a new agent sitting on a critical workflow, you might want to look at responses once a week; for an agent with a proven track record of delivering good responses, you may move this to monthly, or quarterly.

Reuse and curate your test suite. As you add more features and capabilities to your agent, the ways it can mess up may also increase. Keep your happy-path, worst-case, and red-team lists up to date by adding new entries, so you can test your agent against evolving needs and threats and make sure everything works as it should.

AI agent tips and additional guidance

AI models

Changing AI models can completely change the agent's behavior, depending on your setup and model capabilities. Make sure to retest everything against the latest responses and your test suite to make sure behavior improves.

Depending on the provider and the model, you may be able to change settings that control how it processes your requests. Here's a shortlist of settings worth exploring:

Temperature and top-k (pick one or the other; never use both at the same time) control randomness. Lower values produce more predictable outputs, while higher values introduce more variety in wording and sometimes structure. Adjust this when responses are either repetitive (low temperature) or too chaotic (high temperature).

Extended thinking or reasoning strength (available on some API models) can improve response quality for complex tasks, at the cost of more tokens and longer response times.

Smaller models can work well for simple tasks such as classification or text extraction. Try your setup with a smaller model for faster responses and lower token costs.

System instructions

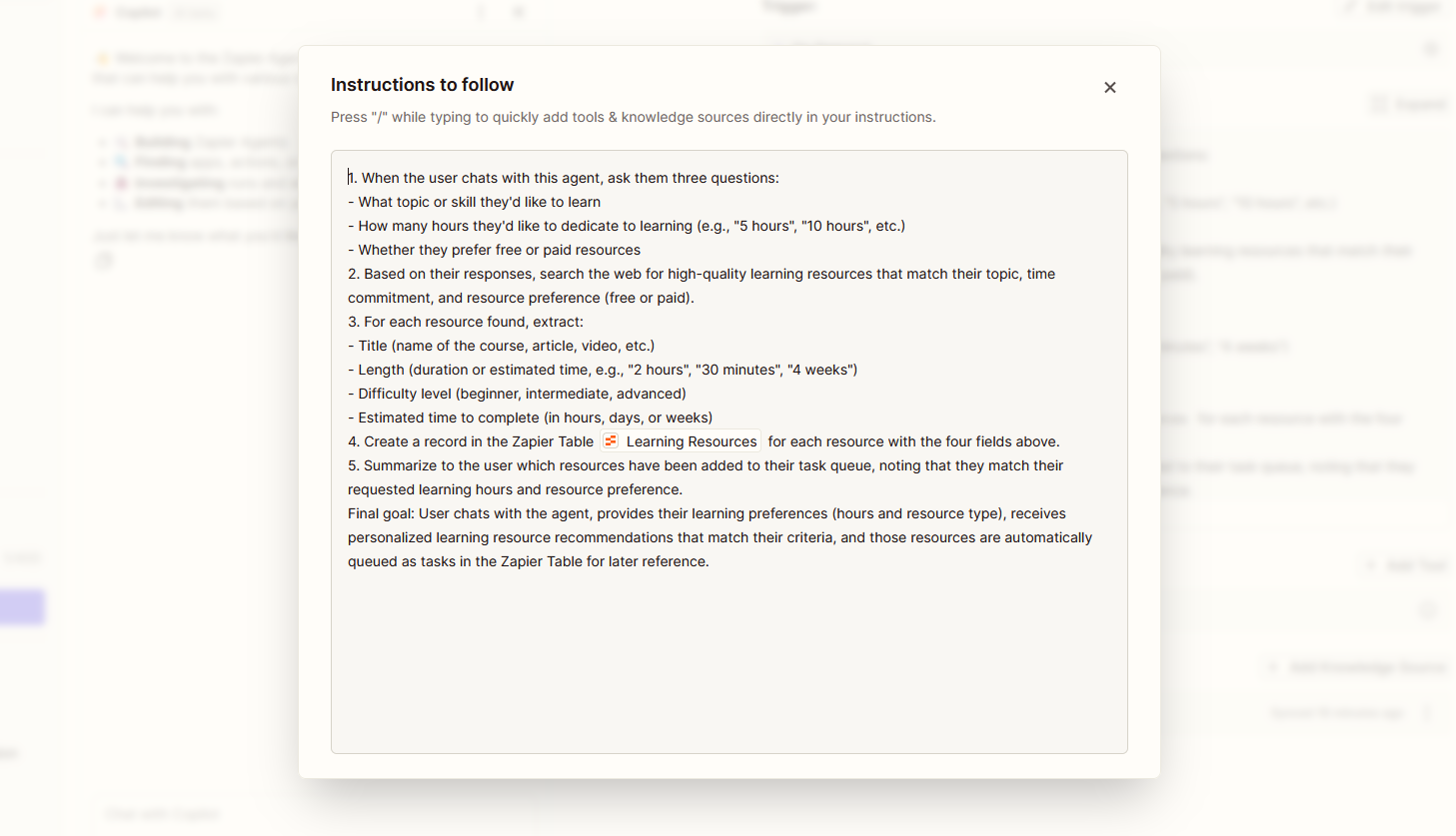

The system prompt is where you define the agent's personality, rules, constraints, and behavior. This is usually the first thing to adjust when something goes wrong and the most cost-effective fix available.

Small changes to wording can sometimes produce large changes in behavior. Be specific. Use examples. State constraints explicitly rather than hoping the model infers them.

Connected tools and tool configurations

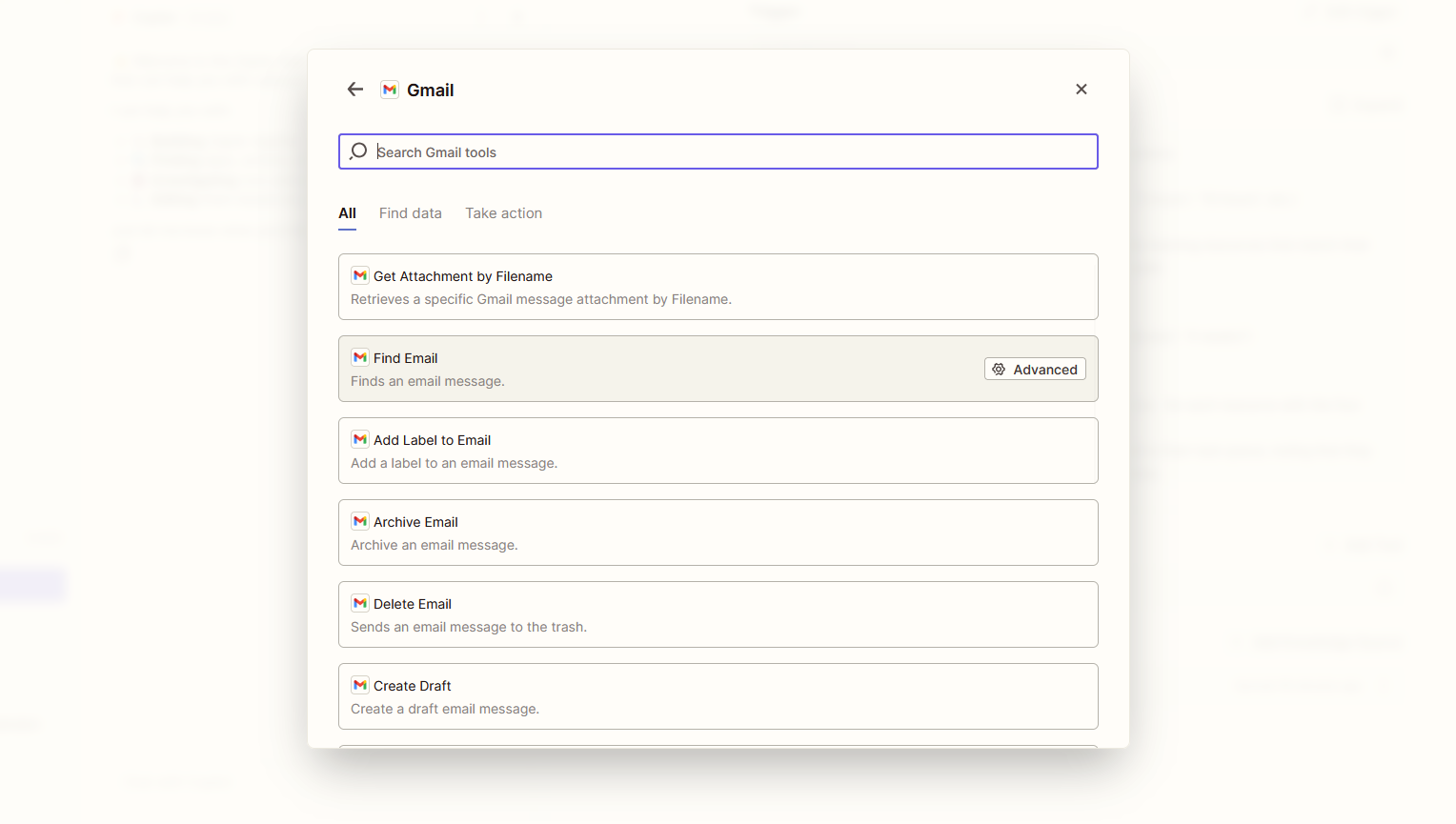

If your agent uses tools (MCP, APIs, database lookups, actions), check three things:

Whether the right tools are connected

Whether the agent is choosing the right tool for each situation

Whether the tool itself is functioning correctly

If you have access to the underlying workflow or logic that runs when a tool is called, review it. A tool can be triggered correctly by the agent but still produce bad results because of a bug or misconfiguration in the tool itself.

With Zapier Agents, adding a tool just takes a few clicks. You can choose from 8,000+ apps, control which specific actions the agent is allowed to take in those apps, and control everything from the same interface.

Knowledge bases (RAG)

If your agent uses retrieval-augmented generation (RAG) or pulls from a knowledge base, the quality of that content directly affects response quality.

Add, remove, or rewrite documents to improve accuracy.

Writing knowledge base content in your target brand voice can improve the consistency of the agent's tone.

Adjust chunking length and overlap based on your data. Short chunks work better for factual lookups. Longer chunks preserve more context for nuanced questions.
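To see what chunk length and overlap actually do, here's a deliberately simple character-based chunker. It's a sketch only: real knowledge base tools typically split on tokens, sentences, or headings, and `chunk_text` is a name I made up for the example.

```python
def chunk_text(text: str, chunk_size: int, overlap: int) -> list[str]:
    """Split text into chunks of chunk_size characters that share `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be at least 0 and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this chunk already reaches the end of the text
        start += step
    return chunks


chunks = chunk_text("abcdefghij", chunk_size=4, overlap=2)  # ["abcd", "cdef", "efgh", "ghij"]
```

Each chunk repeats the last two characters of the previous one. With real documents, that overlap is what keeps a sentence that straddles a boundary retrievable from either side, at the cost of storing and sending more text.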

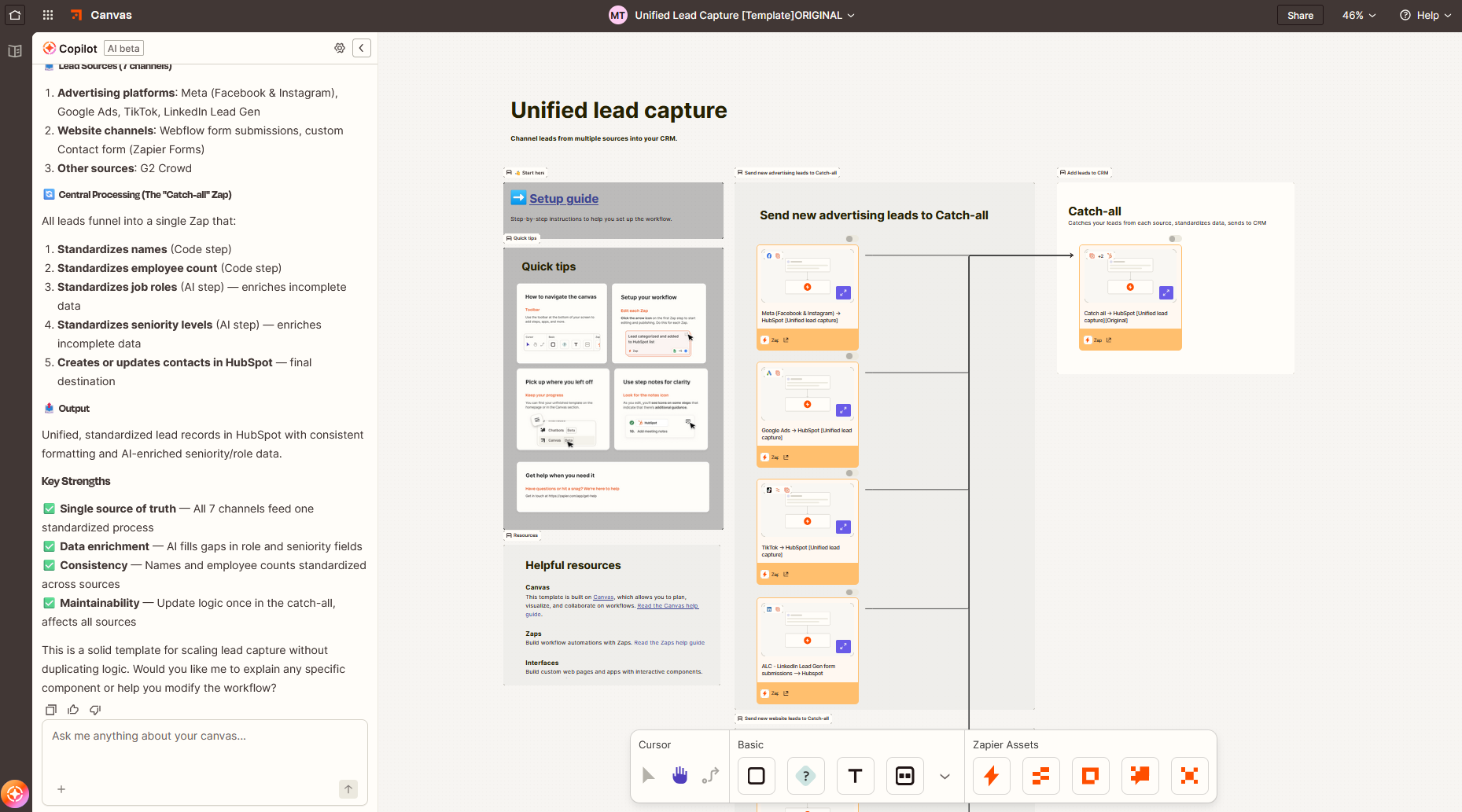

Orchestration architecture

If your agent is part of an orchestrated system—a network of connected systems and tools that are activated and sequenced based on a set of rules—action triggers and flow of information matter. Depending on where your agent is sitting:

It might not have enough information to provide a grounded answer if the bulk of data searching happens after your agent is triggered.

It may have too much information if your orchestration is returning 15 Google Drive files, 20 Docs, and 5 Sheets. You need a way to summarize the results.

If the agent you're improving depends on the results of other agents, make sure they run upstream and that you set the agent to wait until all responses come in, especially if reasoning time varies a lot per task.
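If you're wiring the orchestration yourself in code, waiting for every upstream agent before the downstream one runs might look roughly like this with Python's `asyncio`. The `upstream_agent` coroutine is a stand-in that only sleeps; in a real system it would call your agents, and most orchestration platforms handle this step for you.

```python
import asyncio


async def upstream_agent(name: str, seconds: float) -> str:
    """Stand-in for a real upstream agent; the sleep simulates variable reasoning time."""
    await asyncio.sleep(seconds)
    return f"{name} result"


async def gather_upstream(agents: dict[str, float], timeout: float = 5.0) -> list[str]:
    """Run all upstream agents concurrently and wait until every response is in."""
    tasks = [upstream_agent(name, seconds) for name, seconds in agents.items()]
    # gather() returns results in the order the tasks were passed, not completion order.
    return await asyncio.wait_for(asyncio.gather(*tasks), timeout=timeout)


results = asyncio.run(gather_upstream({"research": 0.02, "pricing": 0.01}))
```

The timeout is there so one slow or stuck upstream agent raises an error you can log, instead of leaving the downstream agent waiting indefinitely.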

Troubleshooting and building advanced functionality depends a lot on which orchestration platform you're using: some expose all data and capabilities to all agents/nodes in a single project; others limit them to each processing step for security reasons.

Humans in the loop

AI does better with humans keeping an eye on it. At first, it's best to have your agents ping you with outputs so you can see if they're useful and, if so, greenlight the next step. As you trust your agents more, you can remove human-in-the-loop checkpoints to automate a workflow end-to-end as much as possible. In this case, make sure you have good audit logs, as investigating an error is no longer a quick check of one response list: it becomes a cross-system investigation.

Zapier has built-in human in the loop steps, and because of robust governance and auditing, you'll be able to keep track of everything your agent does—before and after improvement.

Keep improving your AI agents

AI is super flexible, but that doesn't mean that you can launch it once and be done with it. Each improvement project is an opportunity to provide more context about the work you do, the workflow the agent sits on, and the core do's and don'ts of the tasks.

Use this article as a blueprint to guide you the first few times you want to improve your AI agent tools, and add your notes and constraints to expand it and make it more useful for your circumstances. And if your development platform is the obstacle, check out Zapier's automation, AI agent technology, and orchestration capabilities—reliable, easy to use, and safe for enterprise environments.
