You roll out a promising AI agent that looks flawless in demos. It picks the right tools, executes the correct steps, and closes the loop without errors. Then you drop that same agent into your actual workflow and (surprise!) it immediately forgets how reality works. It chooses the wrong tool, loops endlessly, or hallucinates outputs.

Sandbox tests don't reveal the entire picture. So before you let an agent loose on real customers (or real data), you need a way to test how it behaves against messy real-world scenarios like missing fields, rate limits, and the delightful human habit of asking for things in ways that make no sense.

AI agent evaluation is how you test whether an agent behaves reliably and safely under real conditions. The goal is to identify what's broken before the agent is running around your organization like a well-meaning raccoon in a server room.

Below, I'll explain the concept and show you how to assess AI agents that fit directly into your workflow—and then work to improve them.

What is AI agent evaluation?

AI agent evaluation is the process of testing autonomous AI systems against real tasks and real constraints. This assessment goes beyond the model's responses to cover the entire apparatus that makes autonomy possible: tools, memory, permissions, retries, and decision-making logic.

Here's an example to make things clearer:

You instruct an AI agent to create a Jira ticket, summarize the issue, and notify teammates in Microsoft Teams. In demos, the output is flawless: the ticket is created, the summary is crisp, the message lands in the right channel, everyone applauds, and you briefly consider writing a thought leadership article.

But then production arrives, dragging reality behind it. You notice that some required fields are missing or that API limits are kicking in.

AI agent evaluation is about deliberately surfacing those failure modes before they surface themselves in the form of a broken incident process. A proper evaluation of that Jira scenario would allow you to:

Validate tool selection: Does the agent use Jira and Teams the way you intended?

Assess input handling: Does it pass required fields to the API, validate inputs, and handle missing info without guessing?

Test error recovery: When Jira returns an error, a rate limit response, or a partial success, does the agent retry, escalate, or stop safely?

Measure overall task completion: Does it complete the workflow reliably across repeated runs and real variability?
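To make the error-recovery check concrete, here's a minimal sketch of a test double: a fake tool that fails with a rate limit a set number of times before succeeding. Everything in it (`FlakyTool`, `RateLimitError`, `call_with_retries`) is a hypothetical name made up for illustration, not part of Jira's or any real library's API, and a real recovery policy would also wait between attempts.

```python
class RateLimitError(Exception):
    """Stand-in for an HTTP 429 from a ticketing API (hypothetical)."""


class FlakyTool:
    """Fake tool that raises a rate-limit error a fixed number of times, then succeeds."""

    def __init__(self, failures_before_success):
        self.failures_left = failures_before_success
        self.calls = 0

    def __call__(self, payload):
        self.calls += 1
        if self.failures_left > 0:
            self.failures_left -= 1
            raise RateLimitError("429 Too Many Requests")
        return {"ok": True, "payload": payload}


def call_with_retries(tool, payload, max_retries=2):
    """Hypothetical policy under test: retry on rate limits, then escalate."""
    for _ in range(max_retries + 1):
        try:
            return {"status": "success", "result": tool(payload)}
        except RateLimitError:
            continue  # a real policy would back off here before retrying
    return {"status": "escalated", "reason": "rate limit persisted"}


recovers = FlakyTool(failures_before_success=2)
gives_up = FlakyTool(failures_before_success=5)

print(call_with_retries(recovers, {"summary": "Login fails"})["status"])
print(call_with_retries(gives_up, {"summary": "Login fails"})["status"], gives_up.calls)
```

The retry loop is not the interesting part. What matters is that a controllable failure lets you assert exactly what happens when a tool misbehaves, including how many calls were made before the agent gave up.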

This can sound similar to evaluating large language models (LLMs) like GPT or Gemini, and there is overlap—you still care about reasoning quality, instruction-following, and factuality. But the scope is different.

AI agent evaluation vs. LLM evaluation

LLM evaluation is an assessment of output quality—how accurate the model is, whether its responses are relevant to the prompt, how coherent the reasoning is, and how often it drifts into confident nonsense. It helps you answer the comparatively narrow question of suitability. For example, is this AI model good enough for the kinds of inputs and outputs we care about, and does it behave reliably when asked to do X?

AI agent evaluation, by contrast, operates at the system level. It measures whether the agent can plan, use tools, recover from failures, and complete tasks safely.

Here are the biggest differences at a glance:

| LLM evaluation | AI agent evaluation |
|---|---|
| Checks the performance of one language model | Checks the behavior of the entire agent system |
| Measures output quality | Measures task completion, reliability, and safety |
| Assumes zero external tool interaction | Considers API, tool selection, and error management |

Why evaluate AI agents?

A recent Zapier survey of enterprise leaders found that 86% of companies have adopted AI agents. These systems have clearly shifted from experimental to production. So, if you're also an adopter, it's vital to understand how agents behave before you scale them.

Here are some key reasons to perform AI agent evaluation:

Reliability: Autonomous agents should make dependable decisions with a clear business outcome. Evaluation helps you find failure points before users do, because users have a special talent for discovering edge cases at the exact moment you are least prepared to handle them.

Cost control: Agents that misfire create review cycles, rework, and the kind of downstream clean-up that quietly consumes more time than the original task ever would have. They can also trigger unwanted tool calls and API requests, increasing operational costs.

User trust: If an agent performs an incorrect action in a user-facing workflow, the failure is immediately visible. Evaluation reduces the odds of public mistakes, not by promising perfection, but by shrinking the surface area where the agent can do something spectacularly unhelpful.

Compliance: Proper AI evaluation helps regulated industries establish audit benchmarks and document expected behavior, like what the agent is allowed to do, what it should do under defined conditions, and what it must never do, even if it feels inspired.

Observability: A systematic assessment process helps you understand why an agent succeeded or failed and the chain of reasoning and system behavior that led it there. That makes issues faster to diagnose and fix, and it prevents the most demoralizing failure mode of all: random failure that nobody can reproduce but everyone can feel.

Once your agents move beyond demos, it's essential to gauge whether they're helping or manufacturing unwanted problems at scale. Evaluation is how you find out before the agent decides for you.

AI agent evaluation criteria

When you're evaluating AI agents, you're judging them across some specific dimensions. Depending on what you're building, you might prioritize accuracy, speed, or safety.

Regardless of your use case, your AI agent evaluation should focus on the following core areas.

Accuracy and correctness

Accuracy measures whether an agent's outputs and actions are correct for the task.

One real-world example is a financial agent used for reporting and forecasting. In that setting, the tolerance for "close enough" is effectively zero. So, gauging accuracy comes down to whether the agent produces outputs that are safe to use in actual workflows.

The key questions in this evaluation are:

Does the agent give factually correct answers?

Does it complete tasks as specified?

Those questions then map to concrete AI agent evaluation metrics. Here's a quick look at them:

Exact match: Checks whether the agent's output mirrors a predefined, expected result—useful when the correct answer is known and structured.

F1 score: Tests the accuracy of the system by balancing precision and recall, which is especially valuable when the output is partially correct or contains multiple elements that need to be captured.

Human eval score: Users review the output, rate performance, and provide feedback on whether the result was actually usable, appropriate, and aligned with expectations.
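As a rough illustration of the first two metrics, here's a minimal sketch in Python. The function names and the sample fields are assumptions for this example, and the F1 here is computed over a list of extracted items rather than over any particular benchmark's token definition.

```python
from collections import Counter


def exact_match(predicted: str, expected: str) -> bool:
    """True when the two strings are identical after trimming and lowercasing."""
    return predicted.strip().lower() == expected.strip().lower()


def f1_score(predicted: list[str], expected: list[str]) -> float:
    """F1 over items, using multiset overlap so duplicates are counted once each."""
    overlap = sum((Counter(predicted) & Counter(expected)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(predicted)  # how much of the output was right
    recall = overlap / len(expected)      # how much of the expected result was captured
    return 2 * precision * recall / (precision + recall)


# Hypothetical example: fields an agent extracted from a ticket vs. the expected set.
predicted_fields = ["priority", "assignee", "summary"]
expected_fields = ["priority", "assignee", "summary", "component"]

print(exact_match(" Done ", "done"))
print(round(f1_score(predicted_fields, expected_fields), 3))
```

In this sample the agent found three of four expected fields and added nothing wrong, so precision is perfect and recall is what drags the score down. That is the kind of partial credit exact match can't express.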

The important caveat is that "accuracy" is never just about the output text. For example, if you have an email drafting agent, the accuracy check doesn't merely include whether the prose sounds nice. It includes whether it selected the right recipients, matched the intended tone, included or referenced the correct attachments, and followed whatever rules your organization considers non-negotiable.

Reliability and consistency

Reliability is about whether the AI agent behaves consistently across repeated runs and similar inputs.

In multi-agent systems, reliability depends even more on coordination: how well agents hand off work, preserve context, and avoid stepping on each other's toes. That's when you need to focus on good AI agent orchestration.

To evaluate reliability, you're really asking two questions:

Does the agent perform the same way across similar inputs, or does it produce meaningfully different decisions and outputs each time you run it?

How often does it fail or refuse tasks it should be able to handle, especially when the task is well within its defined scope?

There are three practical metrics to consider for this subtype of AI agent evaluation:

Success rate: Tells you what percentage of runs end in the intended completion state.

Error rate: Captures how often runs fail outright, including tool errors, missing required fields, or logic breakdowns.

Variance across runs: Measures how much the agent's output changes when executing the same task repeatedly, which is especially important when the system is expected to be deterministic in its actions, even if its language is not.
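Here's a minimal sketch of how these three numbers might be computed from repeated runs of the same task. The run records are invented, and `action_consistency` is a simple stand-in for variance chosen for this example (the share of successful runs that took the most common action), not a standard metric name.

```python
from collections import Counter

# Hypothetical results from running the same task five times.
# "status" is the run outcome; "action" is the final action the agent took.
runs = [
    {"status": "success", "action": "create_invoice"},
    {"status": "success", "action": "create_invoice"},
    {"status": "error", "action": None},
    {"status": "success", "action": "request_clarification"},
    {"status": "success", "action": "create_invoice"},
]


def success_rate(runs):
    return sum(r["status"] == "success" for r in runs) / len(runs)


def error_rate(runs):
    return sum(r["status"] == "error" for r in runs) / len(runs)


def action_consistency(runs):
    """Share of successful runs that took the most common action (1.0 means no variance)."""
    actions = [r["action"] for r in runs if r["status"] == "success"]
    if not actions:
        return 0.0
    most_common_count = Counter(actions).most_common(1)[0][1]
    return most_common_count / len(actions)


print(success_rate(runs), error_rate(runs), action_consistency(runs))
```

Comparing actions rather than wording is deliberate: two runs can phrase things differently and still be consistent, as long as they do the same thing.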

For example, think about an invoice processing agent. In a demo, it's easy to feed it pristine invoices with consistent layouts and perfectly populated fields and declare victory.

In real operations, invoices arrive in all the forms humans can invent, complete with missing fields, different templates, inconsistent naming, odd line-item structures, and occasional creative formatting. If your agent only works when the data is structurally perfect, it will fail the moment it meets real customer inputs. Reliability-focused evaluation helps you find exactly where it breaks, how often it breaks, and what the safe fallback should be, whether that's a retry, a clarification request, or a human handoff before the system starts making up values to "complete" the task.

Speed and efficiency

Speed refers to how quickly an agent completes a task. Efficiency is what it costs to do it (tokens consumed, tool calls made, retries triggered, and all the other invisible "meter running" behaviors that turn a helpful assistant into a surprisingly expensive habit).

To address this, you need to answer the questions below:

How long does the agent take to complete tasks?

How many API calls or tokens does it burn to get there?

The metrics here are straightforward and, importantly, comparable over time:

Latency (p50, p95, p99): Percentiles that show the distribution of response time, which is more useful than an average because a mean hides the slow tail that some users actually experience.

Cost per task: Calculates the price of completing a task, typically derived from token usage plus any paid tool calls.

Tokens used: Measures how many tokens the agent consumed to complete a task, which is often the fastest way to spot runaway verbosity, unnecessary reasoning loops, or prompts that are doing too much work.
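A small sketch of the latency and cost calculations, assuming you already have per-task timings and token counts. The nearest-rank percentile is one of several common definitions, and every price below is a made-up placeholder, so substitute your provider's actual rates.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def cost_per_task(input_tokens, output_tokens, paid_tool_calls,
                  input_price_per_1k=0.001, output_price_per_1k=0.002,
                  price_per_tool_call=0.01):
    """Token cost plus paid tool calls. All prices are hypothetical placeholders."""
    token_cost = (input_tokens / 1000 * input_price_per_1k
                  + output_tokens / 1000 * output_price_per_1k)
    return token_cost + paid_tool_calls * price_per_tool_call


# Hypothetical task latencies in seconds, with two slow outliers.
latencies = [1.2, 0.9, 1.1, 4.8, 1.0, 1.3, 0.8, 1.4, 9.5, 1.2]

for pct in (50, 95, 99):
    print(f"p{pct}: {percentile(latencies, pct)}s")
print(f"mean: {sum(latencies) / len(latencies):.2f}s")
print(f"cost: ${cost_per_task(2000, 500, paid_tool_calls=3):.3f}")
```

With only ten samples, p95 and p99 both land on the slowest run, so you'd want far more runs before trusting the tail numbers. Even so, the gap between the median and the mean shows how two outliers can distort an average.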

A research AI agent is a clean example because expectations are naturally high. Ideally, it should generate responses within a few seconds. But speed isn't the only goal. If the agent achieves low latency by making excessive API calls, re-querying sources unnecessarily, or retrying aggressively, your costs can balloon. That's where efficiency becomes the necessary counterweight.

AI agent evaluation lets you see whether the agent is fast but wasteful, slow but cheap, or, in the worst case, both slow and expensive. Once you can measure that trade-off, you can then tune its performance to balance speed and efficiency.

Safety and guardrails

Safety measures whether an agent stays within the boundaries you set, such as permissions, policies, data access rules, and the scope of what it's allowed to do. Safety becomes unskippable the moment an agent has access to sensitive data, because a "helpful" action is only helpful if it's also allowed.

In this area, you should get answers to the following questions:

Does the agent stay within intended boundaries, even when a user asks nicely, insists repeatedly, or tries to sneak in a request that bypasses safety filters?

Does it refuse harmful or out-of-scope requests reliably, without refusing legitimate work or leaking information in the process?

Pay attention to these metrics to analyze safety and guardrails:

Jailbreak resistance: Helps gauge how well the AI agent holds its intended boundaries after attempts at manipulation, including prompt injection and social engineering tactics.

Refusal accuracy: Measures whether the agent refuses when it should and complies when it should, which is critical because both false negatives (complying with a disallowed request) and false positives (refusing a valid request) are expensive in different ways.

Hallucination rate: Tracks how often the agent produces factually incorrect output.
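Refusal accuracy is easy to compute once each test case is labeled with what the agent should have done. The sketch below assumes a hypothetical list of labeled cases and follows the definitions above: a false negative is complying with a disallowed request, and a false positive is refusing a valid one.

```python
# Hypothetical labeled cases: should the agent refuse, and did it?
cases = [
    {"should_refuse": True, "refused": True},    # correct refusal
    {"should_refuse": True, "refused": False},   # false negative: complied with a disallowed request
    {"should_refuse": False, "refused": False},  # correct compliance
    {"should_refuse": False, "refused": True},   # false positive: refused valid work
    {"should_refuse": False, "refused": False},  # correct compliance
]


def refusal_report(cases):
    """Summarize how often the agent's refuse/comply decision matched the label."""
    correct = sum(c["should_refuse"] == c["refused"] for c in cases)
    false_negatives = sum(c["should_refuse"] and not c["refused"] for c in cases)
    false_positives = sum(c["refused"] and not c["should_refuse"] for c in cases)
    return {
        "refusal_accuracy": correct / len(cases),
        "false_negatives": false_negatives,
        "false_positives": false_positives,
    }


print(refusal_report(cases))
```

Reporting the two error types separately matters because a single accuracy number can look fine while hiding the one kind of mistake you can't afford.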

If you have an HR assistant agent, for example, a proper evaluation would check a few things.

It shouldn't expose confidential employee data, and it should refuse unauthorized requests even if those requests are phrased as routine or urgent. You'd also want it to handle sensitive topics carefully, avoid inventing policies or benefits details, and operate strictly within its assigned permissions.

A proper safety evaluation would test an AI agent across multiple guardrails and scenarios—benign requests, ambiguous requests, clearly disallowed requests, and adversarial prompts designed to coax it into oversharing. The goal is to confirm that the agent behaves responsibly in different contexts.

User experience and helpfulness

Here, you're evaluating ergonomics, not intelligence. The agent can be technically brilliant and still fail if users find it confusing, verbose, or exhausting to operate.

The metrics in this area should address two main questions:

Do users find the agent useful and easy to work with?

Does it communicate clearly?

The metrics here should reflect lived experience:

User satisfaction scores (CSAT): Measures how users feel about the interaction and whether the result met their expectations.

Task completion rate: Calculates how often the agent successfully completes a user-defined task end-to-end, which is the most direct indicator that it is helping rather than generating work.

Follow-up question rate: The number of times an agent asks for additional information before proceeding, which is a useful proxy for friction—some clarifying questions are healthy, but repeated back-and-forth often signals poor prompts, missing context, or unclear input requirements.

For example, take an onboarding agent, an AI whose job is to welcome new users and usher them through setup. Ideally, it should behave like a sophisticated butler greeting guests at your mansion—attentive, discreet, competent, and crucially, not the sort of butler who repeatedly asks what a mansion is and whether you could provide it in three different formats.

In this case, you'd want to verify that new users can complete setup without getting stuck, abandoning the flow, or needing repeated human intervention. If users drop off midway, loop through the same questions, or misconfigure key settings, that's a UX problem even if the agent is technically correct.

From there, you can consult completion rate, CSAT, and quick in-product surveys to identify friction points and improve UX, before users decide the butler is deranged and leave your mansion forever.

How to evaluate AI agents

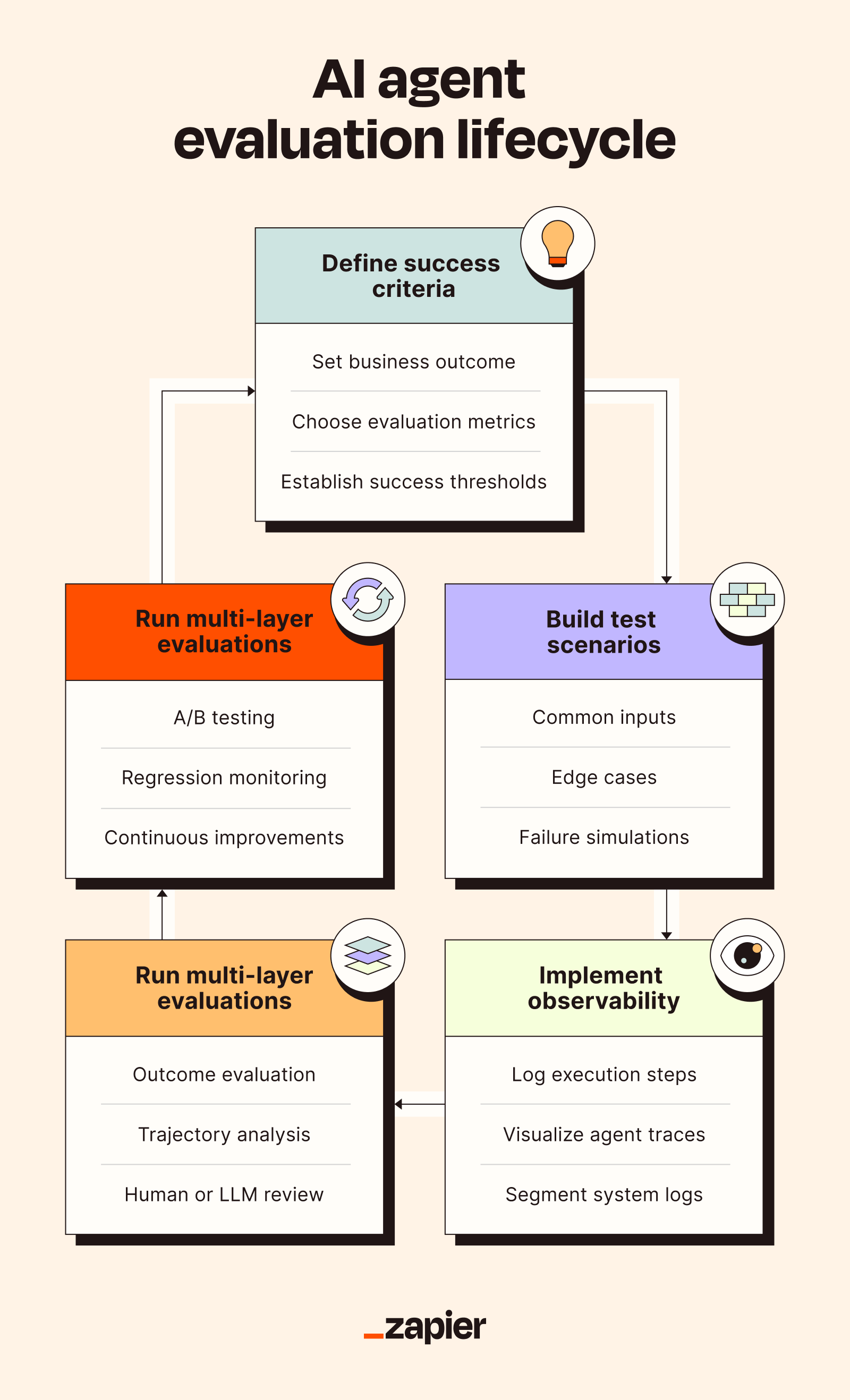

You can perform an AI agent evaluation in five steps. The goal is to assess both outcomes (did it work?) and behavior (how did it get there?) under working conditions.

1. Define your success criteria

It's easy to track a dozen technical metrics and still have no clue what your agent's doing in production. If that's true, get back to basics. Know your business goals and define success for your particular use case.

Start with one sentence:

"This agent succeeds if it can [specific outcome] for [who] under [constraints]."

Now, pick your top three evaluation priorities from the section above (accuracy, safety, speed, reliability, UX) and set explicit targets.

For each priority, define the core metrics that indicate if the agent is production-ready. The simplest way to choose them is to work backwards from the primary outcome.

Make your goals specific and measurable. "Be accurate" is not a goal; it's a mood. It also doesn't help you debug anything when the agent fails. "Achieve 90% correct outputs on our test set" is a clear goal that tells you what to measure, what to improve, and whether you're getting better.
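One way to keep targets honest is to write them down as data and check measurements against them. This is a minimal sketch; the metric names and thresholds are hypothetical examples, not recommendations.

```python
# Hypothetical targets: "min" means higher is better, "max" means lower is better.
targets = {
    "accuracy": ("min", 0.90),
    "p95_latency_seconds": ("max", 5.0),
    "escalation_rate": ("max", 0.10),
}


def check_targets(measured, targets):
    """Return the metrics that miss their target or were never measured."""
    failures = {}
    for name, (direction, threshold) in targets.items():
        value = measured.get(name)
        if value is None:
            failures[name] = "not measured"
        elif direction == "min" and value < threshold:
            failures[name] = f"{value} is below {threshold}"
        elif direction == "max" and value > threshold:
            failures[name] = f"{value} is above {threshold}"
    return failures


measured = {"accuracy": 0.93, "p95_latency_seconds": 6.2}
print(check_targets(measured, targets))
```

Treating an unmeasured metric as a failure is a design choice in this sketch: a target nobody is tracking is closer to a mood than a goal.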

2. Build realistic test scenarios and datasets

Now we get to the beating heart of your AI agent evaluation process (cue dramatic music, or at least the gentle hum of a server fan).

Once you define success criteria, build a test suite with three buckets:

Common inputs

Edge cases

Potential failures

Run these scenarios against each agent you're considering, and collect data on accuracy, latency, and success rate as you go. Then deliberately make the tests less polite by introducing noisy data, ambiguous instructions, and simulated API failures.

If an agent relies on tools or external APIs, capture reference trajectories—step-by-step records of the system's actions, including which tools it chose, what inputs it sent, what responses it received, and what it decided next. Trajectories are how you move from "it failed" to "it failed because it selected the wrong tool, passed an invalid payload, retried too aggressively, and then declared success anyway."
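A trajectory can be as simple as an ordered list of step records. The sketch below shows one possible shape; the class names, field names, and tool identifiers (`jira.create_issue`, `teams.post_message`) are all hypothetical and don't correspond to a real SDK.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Step:
    tool: str          # which tool the agent chose
    tool_input: dict   # what it sent
    tool_output: Any   # what it received
    decision: str      # what it decided to do next


@dataclass
class Trajectory:
    scenario: str
    steps: list = field(default_factory=list)

    def tool_sequence(self):
        """Ordered tool names, handy for comparing one run against a reference."""
        return [step.tool for step in self.steps]


# Hypothetical reference trajectory for a create-ticket-and-notify scenario.
reference = Trajectory(
    scenario="create ticket and notify team",
    steps=[
        Step("jira.create_issue", {"summary": "Login fails"}, {"key": "OPS-1"}, "notify"),
        Step("teams.post_message", {"text": "Created OPS-1"}, {"ok": True}, "done"),
    ],
)

print(reference.tool_sequence())
```

Recording inputs and outputs alongside the tool name is what lets you tell "wrong tool" apart from "right tool, invalid payload" later on.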

Overall, this stage is your baseline. It establishes what "good" looks like under realistic conditions, exposes where agents are brittle, and gives you the concrete evidence you need to tune prompts, tighten guardrails, adjust tool permissions, and set the ideal parameters for production.

3. Implement AI observability

When you invest in AI agents, it's important to observe their internal processes. That visibility is what lets you explain why an agent succeeded or failed. Without it, you're debugging by superstition.

To implement observability, you can:

Log every step of execution

Visualize the traces

Segment the logs

Here's a quick action plan chart.

| Observability steps | What to analyze |
|---|---|
| Logging execution steps | Prompts, intermediate plans, tool calls, responses, final output |
| Visualizing traces | Planning errors, wrong tool choices, loops |
| Segmenting the logs | Scenario, model version, configuration |
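As a rough sketch of the logging and segmenting steps, the example below writes one JSON record per execution step and then filters by scenario. The field names and the in-memory list are assumptions for illustration; in practice the records would go to whatever log store or tracing tool you already use.

```python
import json
import time
import uuid


def log_step(log, run_id, step_type, payload, *, scenario, model_version):
    """Append one structured record; the segment fields make later filtering possible."""
    record = {
        "run_id": run_id,
        "timestamp": time.time(),
        "step_type": step_type,  # e.g. "prompt", "plan", "tool_call", "tool_response", "final_output"
        "payload": payload,
        "scenario": scenario,
        "model_version": model_version,
    }
    log.append(json.dumps(record))
    return record


log = []  # stand-in for a real log sink
run_id = str(uuid.uuid4())
log_step(log, run_id, "tool_call", {"tool": "jira.create_issue"},
         scenario="missing_fields", model_version="v1")
log_step(log, run_id, "final_output", {"text": "Ticket created"},
         scenario="missing_fields", model_version="v1")

# Segmenting: keep only the tool calls from one scenario.
tool_calls = [
    record for record in map(json.loads, log)
    if record["scenario"] == "missing_fields" and record["step_type"] == "tool_call"
]
print(len(tool_calls))
```

Sharing one `run_id` across every step is what allows a trace viewer, or a plain filter like the one above, to reassemble a single run from a pile of records.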

4. Run multi-layer evaluations

There are two levels to evaluate in this step:

Outcome level: Check the agent's success rate, accuracy, and relevance of its output. This assessment should run in all scenarios.

Trajectory level: Focus on plan quality, tool selection, and the sequence of actions, and compare them against your reference trajectories.

Note: Use metrics like exact match, precision, and recall for comparison.
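For trajectory-level comparison, one simple approach is to compare the sequence of tools a run called against the reference sequence. The sketch below is one way to do that under stated assumptions: the tool names are hypothetical, and precision and recall ignore call order while exact match does not.

```python
from collections import Counter


def compare_tool_calls(actual, reference):
    """Order-sensitive exact match, plus order-insensitive precision and recall."""
    overlap = sum((Counter(actual) & Counter(reference)).values())
    precision = overlap / len(actual) if actual else 0.0       # share of calls that were expected
    recall = overlap / len(reference) if reference else 0.0    # share of expected calls that happened
    return {
        "exact_match": actual == reference,
        "precision": precision,
        "recall": recall,
    }


reference_tools = ["jira.create_issue", "teams.post_message"]
# Hypothetical run: an extra search, a duplicate ticket, and no notification.
actual_tools = ["jira.search_issues", "jira.create_issue", "jira.create_issue"]

print(compare_tool_calls(actual_tools, reference_tools))
```

Low precision points to wasted or unexpected calls, while low recall means a required step never happened. Here the duplicate ticket hurts precision and the missing Teams message hurts recall.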

No single evaluation catches every failure mode, so you can rely on a combination of the following methods:

Rule-based/code checks: Best for schema validation, required fields, and API response accuracy

LLM-as-a-judge: Using a model to score outputs for criteria like relevance, reasoning quality, and adherence to instructions

Human reviews: For high-risk tasks like compliance checks, handling sensitive data, or user-facing actions
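Rule-based checks are the cheapest of the three to automate. Here's a minimal sketch of a required-fields check on a payload the agent is about to send; the schema is a hypothetical example, not Jira's actual field list.

```python
# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"project": str, "summary": str, "priority": str}


def validate_payload(payload, required=REQUIRED_FIELDS):
    """Return a list of problems; an empty list means the payload passes."""
    problems = []
    for name, expected_type in required.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            problems.append(f"wrong type for {name}")
        elif expected_type is str and not payload[name].strip():
            problems.append(f"empty field: {name}")
    return problems


print(validate_payload({"project": "OPS", "summary": "", "priority": 2}))
print(validate_payload({"project": "OPS", "summary": "Login fails", "priority": "High"}))
```

Checks like this are deterministic and fast, so they can run on every test case, leaving the model-based and human reviews for the judgment calls rules can't capture.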

5. Iterate, experiment, and monitor in production

AI agents operate in changing environments—new data, updated tools, different user behaviors—which means the evaluation loop needs to run continuously.

Start by running A/B tests and regression suites to catch behavioral drift. In production, you can use live monitoring dashboards to track metrics such as success rate, latency, safety violations, and user ratings.
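A regression check can be as simple as comparing a candidate version's metrics against a stored baseline. This sketch assumes both were measured on the same test suite; the metric names, values, and the single absolute tolerance are illustrative assumptions, and metrics on very different scales would likely need their own tolerances.

```python
def find_regressions(baseline, candidate, higher_is_better, tolerance=0.02):
    """Flag metrics where the candidate is worse than the baseline by more than `tolerance`."""
    regressions = {}
    for metric, base_value in baseline.items():
        new_value = candidate[metric]
        change = new_value - base_value
        # Flip the sign for metrics where a drop is the bad direction.
        worse_by = -change if higher_is_better[metric] else change
        if worse_by > tolerance:
            regressions[metric] = (base_value, new_value)
    return regressions


# Hypothetical metrics from the same test suite, before and after a prompt change.
baseline = {"success_rate": 0.92, "safety_violation_rate": 0.01}
candidate = {"success_rate": 0.85, "safety_violation_rate": 0.01}
higher_is_better = {"success_rate": True, "safety_violation_rate": False}

print(find_regressions(baseline, candidate, higher_is_better))
```

Running a check like this on every prompt, model, or tool change turns "it feels worse" into a specific metric that moved, along with by how much.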

As your setup grows, you'll likely end up running multiple agents, and the eval process gets harder to manage. This is where AI orchestration tools become useful. They can help coordinate evaluations, automate retries, and keep monitoring repeatable.

One practical way to operationalize this is to automate your evaluation pipeline. For example, you can use Zapier Agents to:

Trigger an evaluation when a new batch of tickets or records arrives

Log results in a database app like Google Sheets, Airtable, or Zapier Tables

Alert your team in Slack when performance drops below an acceptable threshold

Collect quick user feedback automatically through Zapier Forms

AI agent evaluation examples

Your evaluation process varies by agent use case. Here are some practical ways you can apply agent evaluation in different situations.

Customer support AI agent evaluation

Customer support agents are the highest-stakes UX test an AI can face. Every mistake is visible to a real user who already had a problem. That makes accuracy, resolution rate, customer satisfaction, and escalation rate your critical metrics—in roughly that priority order.

Your evaluation dataset should be real (anonymized) support tickets with known resolutions.

Concrete targets might look like:

Answering accuracy ≥ 90%

Escalation rate ≤ 10%

First-response resolution rate ≥ 70%

CSAT ≥ 4.2/5

These agents typically fail by hallucinating company policies, providing outdated information, or failing to escalate in time. If any of these show up in testing, they need to be fixed before go-live, not patched afterward.

Data analysis AI agent evaluation

Data analysis agents are less about conversation and more about accuracy and speed. A slow agent that gets the right answer still beats a fast agent that confidently gets it wrong, but neither is acceptable in production.

The evaluation dataset should contain analytical queries with pre-computed correct answers.

Here's an example of key metrics:

Correct analysis ≥ 95%

Runnable, error-free code

Reproducibility rate ≥ 90%

Time to insight ≤ 5 seconds

The agent can fail if it misinterprets data schemas or generates broken visualizations. Multi-step analysis tasks, where each step depends on the previous result, are an effective way to surface these failures.

Lead qualification AI agent evaluation

Precision and consistency are crucial here, since they directly influence revenue decisions. Lead scoring accuracy, false positive rate, and response quality are typically prime metrics.

The best AI agent evaluation dataset for lead qualification is historical lead data with known outcomes. You can probably get this information from your sales team.

Here's what the success criteria can look like in this case:

Minimum 85% agreement between lead score and human sales rep assessment

Lead response quality rating ≥ 4.0/5

False positive rate ≤ 10%

However, you should watch for over-qualifying weak leads, missing key signals, or relying on incomplete data.
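The agreement and false positive targets above can be computed directly from historical leads. In this sketch the records are invented, and a "false positive" is assumed to mean a lead the agent marked qualified that did not convert; your sales team may define it differently.

```python
# Hypothetical historical leads: agent label, sales rep label, and actual outcome.
leads = [
    {"agent": "qualified", "rep": "qualified", "converted": True},
    {"agent": "qualified", "rep": "unqualified", "converted": False},
    {"agent": "unqualified", "rep": "unqualified", "converted": False},
    {"agent": "qualified", "rep": "qualified", "converted": True},
    {"agent": "unqualified", "rep": "unqualified", "converted": False},
]


def agreement_rate(leads):
    """Share of leads where the agent's label matches the sales rep's."""
    return sum(lead["agent"] == lead["rep"] for lead in leads) / len(leads)


def false_positive_rate(leads):
    """Share of non-converting leads that the agent still marked qualified."""
    negatives = [lead for lead in leads if not lead["converted"]]
    if not negatives:
        return 0.0
    return sum(lead["agent"] == "qualified" for lead in negatives) / len(negatives)


print(agreement_rate(leads), round(false_positive_rate(leads), 3))
```

Tracking both numbers guards against an agent that agrees with reps most of the time yet still over-qualifies the weak leads that cost the sales team the most time.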

Content creation AI agent evaluation

To evaluate content creation AI agents, you'll need to look beyond factual accuracy and focus on subtler measures that reflect brand voice consistency and high-quality content.

For content creation agents, the most common failure mode is rarely factual error. It's usually brand voice drift or generic writing. Quality, tone, and alignment are the metrics that matter most.

You can evaluate the AI by comparing the agent-generated content against your editorial template.

Here's what key targets can look like:

Average quality rating ≥ 4/5 from in-house editors

Factual accuracy ≥ 95%

Revision rate (share of pieces that need large portions rewritten) ≤ 20%

These agents can fail if the writing is generic or uninspired, responses contain factual inaccuracies, or the tone is off-brand.

Build AI agents you can trust with Zapier

AI agent evaluation helps you catch the failures that demos conveniently hide: wrong tool calls, missing fields, brittle edge-case handling, and unsafe behavior. But effective assessment requires a clear framework, metrics, and a repeatable process to connect tools, manage logic, and re-test agents.

If you want to start building reliable and scalable AI agents that work across your entire tech stack, try Zapier Agents. Build AI agents that fit into your existing workflows and can be used—and evaluated—by folks across your organization. And use AI Guardrails by Zapier to add built-in safety and compliance checks to your workflows.
