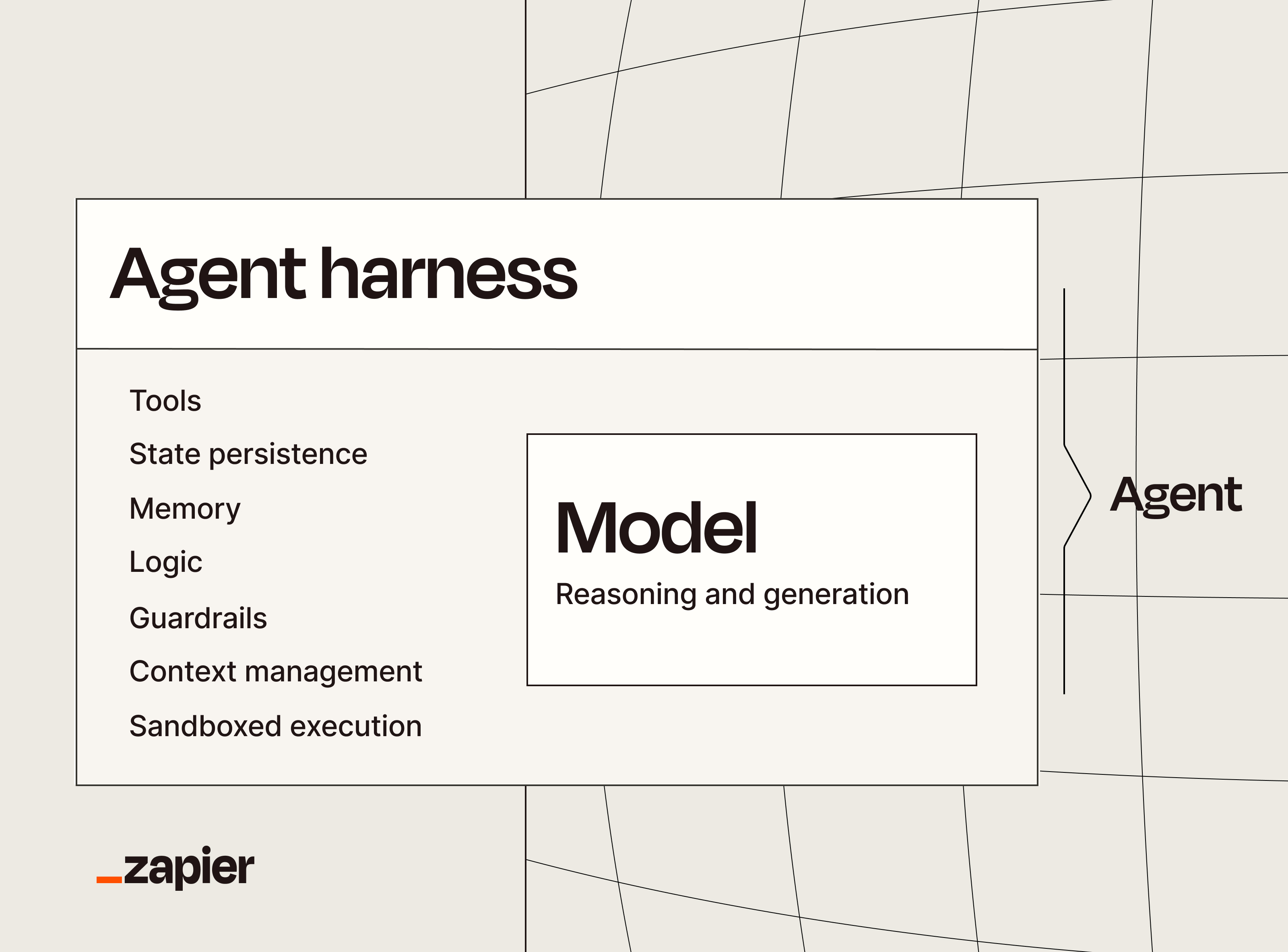

An agent harness is everything wrapped around an AI model that turns it into a working agent: tools, memory, state persistence, context management, sandboxed execution, guardrails, and the logic that ties it all together. It doesn't include the model itself.

The other day, I used the phrase "agent harness," and my colleague asked me if I had an equestrian show on in the background. I didn't (that time). But it made me realize that not everyone is as deep in the weeds of AI tooling as I am, and that a plain-English explanation was in order.

Here, I'll tell you what agent harnesses are and how they're different from models and agents. I'll also make the case for interoperability—why you don't want your app connections, context, and governance trapped inside your harness.

What is an agent harness?

An agent harness is the scaffolding around an AI model that makes it capable of doing real work. The model handles reasoning and generates responses, but it needs the harness to use tools, hold onto memory across conversations, manage its own context, run code in a safe environment, and follow guardrails.

When ChatGPT launched in late 2022, it was basically a web interface for a raw model. You gave it a prompt, it answered you with text, and that was the whole interaction. ChatGPT couldn't search the web, remember what you told it in a previous conversation, read your files, or take action in your apps. If you wanted to use it for real work, you had to copy its output and do that work yourself.

That was the baseline for a while. Then in March 2023, a developer named Toran Bruce Richards released AutoGPT, the first thing most people would recognize as an agent harness. It wrapped GPT-4 in a program that could do things like set subgoals and use tools.

Although AutoGPT wasn't reliable, it called attention to the fact that the wrapper around a model could be just as important as the model itself. That wrapper is what we now call an agent harness. And today, every serious AI tool you use comes with one.

AI model vs. agent harness vs. agent: What's the difference?

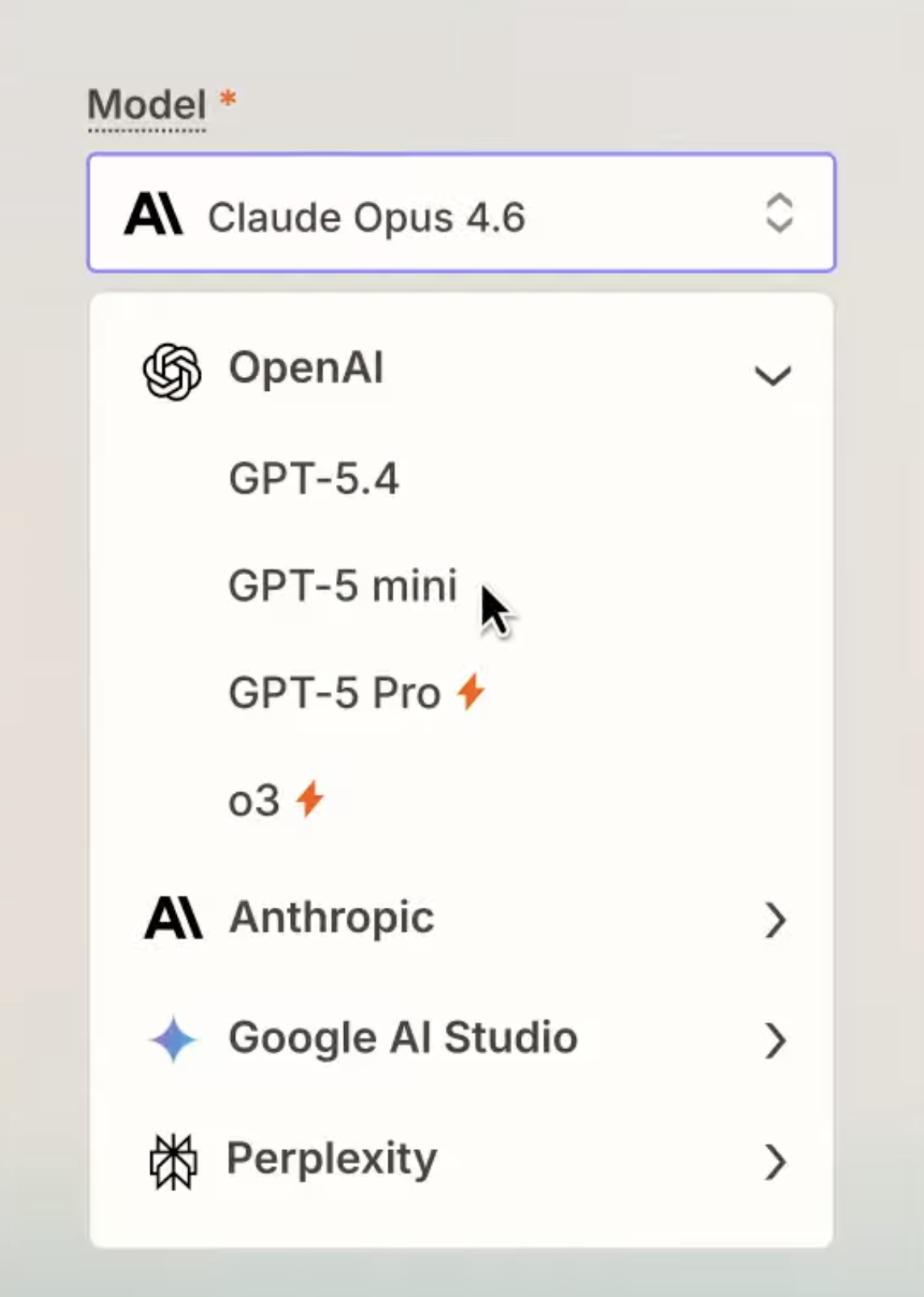

An AI model is a program that receives your prompt and gives you something in return. It's the brain, so to speak, that powers an agent. GPT-5, Opus 4.7, and Gemini 3 Pro are models. On their own, they don't have memory, tools, or a way to act outside a chat window.

An agent harness is all the stuff that's wrapped around a model. The system prompt that shapes its behavior. The tools it can call, like web search, code execution, file reading, and app integrations. The memory that persists between conversations. The logic that decides which tool to use and when. The sandbox, or the walled-off environment where an agent can test things out without touching anything important. The guardrails that keep an agent from doing something you'll regret, like emailing a customer without your approval or burning through a million API calls in a blink. The harness is what makes a model useful for actual work.
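To make those pieces concrete, here is a minimal sketch of a harness loop in Python. It is an illustration under stated assumptions, not how any real product is built: `fake_model` is a hypothetical stand-in for a model call, and the `Harness` class, its field names, and the message format are invented for this example. It shows a system prompt, a tool registry, memory that carries over between runs, and two simple guardrails (a tool allowlist and a step budget). A real sandbox is out of scope here.

```python
from dataclasses import dataclass, field
from typing import Callable


def fake_model(messages: list[dict]) -> dict:
    """Hypothetical stand-in for a model: asks for a tool, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "text": f"The answer is {last['content']}."}
    return {"type": "tool_call", "tool": "add", "args": {"a": 2, "b": 3}}


@dataclass
class Harness:
    model: Callable[[list[dict]], dict]
    system_prompt: str                       # shapes the model's behavior
    tools: dict[str, Callable[..., object]]  # what the model may call
    allowed_tools: set[str]                  # guardrail: tool allowlist
    max_steps: int = 5                       # guardrail: step budget
    memory: list[dict] = field(default_factory=list)  # kept between runs

    def run(self, user_input: str) -> str:
        # Context management: system prompt, then memory, then the new input.
        messages = [{"role": "system", "content": self.system_prompt}]
        messages += self.memory
        messages.append({"role": "user", "content": user_input})

        for _ in range(self.max_steps):
            reply = self.model(messages)
            if reply["type"] == "final":
                # Persist the exchange so the next run can see it.
                self.memory.append({"role": "user", "content": user_input})
                self.memory.append({"role": "assistant", "content": reply["text"]})
                return reply["text"]

            name = reply["tool"]
            if name not in self.allowed_tools:
                result = f"blocked: {name} is not allowed"
            else:
                result = self.tools[name](**reply["args"])
            messages.append({"role": "tool", "content": str(result)})

        return "stopped: step budget exhausted"


harness = Harness(
    model=fake_model,
    system_prompt="You are a careful assistant.",
    tools={"add": lambda a, b: a + b},
    allowed_tools={"add"},
)
answer = harness.run("What is 2 + 3?")
```

Notice that the model function never touches the tools or the memory directly. Everything it can do passes through the harness, which is why swapping the model is cheap and swapping the harness is not.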

An agent is what you get when you smash 'em together. If you primarily use GPT-5.5 on ChatGPT, that entire unit is considered an agent.

What you lose when you switch agent harnesses

When someone asks you which AI you use, you might say ChatGPT, Claude, or Cursor. If we're being persnickety about terminology (and why wouldn't we be?), you're naming the agent harness. You may decide to toggle in and out of different models, but the harness is the consistent layer you interact with.

That means when you switch from one agent harness to another, you lose most of your operational investment.

Three pieces in particular tend to disappear: governance, app connections, and context.

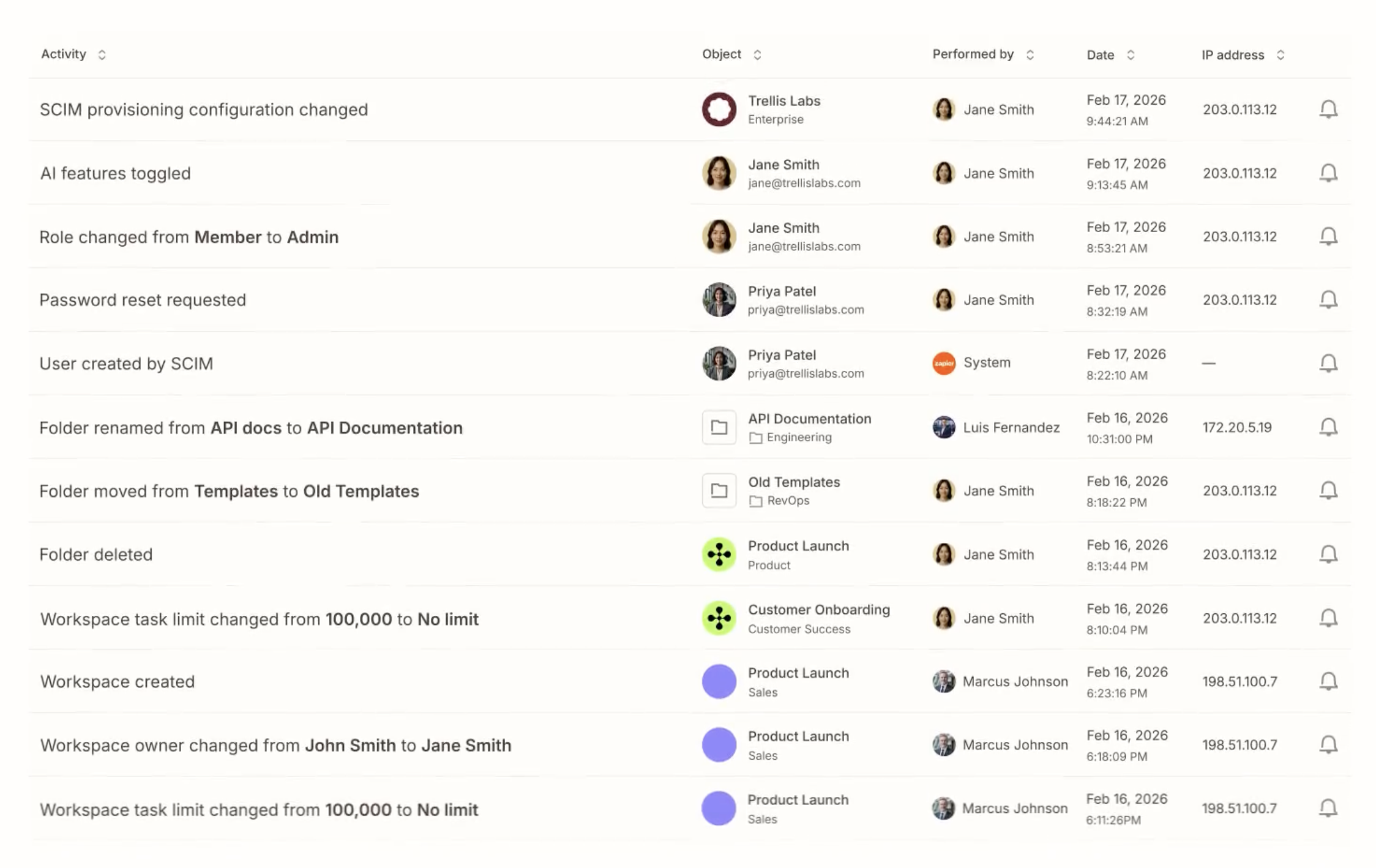

Governance. The access controls, audit logs, and model policies your IT team configured usually live inside the tool too. That means every harness change turns into a governance rebuild.

App connections. The integrations you wired up in ChatGPT don't follow you to Claude: Custom GPTs stay in ChatGPT, Claude Projects stay in Claude, and Cursor rules and MCP setups stay in Cursor, so you end up reconnecting the same apps over and over.

Context. The instructions you refined, the knowledge bases you loaded, and the memory your agents built up about how your team actually works are all tied to the harness by default, so that hard-earned context resets the day you switch.

That's the migration tax. And at the pace AI tools are moving right now, you might be paying it every few weeks.

Zapier lets you switch agent harnesses freely

Zapier sits below the agent harness layer. That means your apps, context, and governance live at the platform level instead of inside any specific AI tool. When you switch harnesses, your setup stays put, which gives you:

Governance coverage. Permissions, access controls, and SOC 2 Type II, GDPR, and CCPA compliance are all enforced at the Zapier level. You set the rules once, and they apply no matter which AI tool a team decides to experiment with next.

App portability. Zapier is already wired into more than 9,000 apps, and those connections belong to your Zapier account, not to whatever AI tool you happen to be using this quarter. Point a new harness at Zapier MCP (chat), Zapier SDK (code files), or Zapier CLI (terminal), and it inherits every action your last one had.

Context durability. The instructions, knowledge sources, and running history your agents rely on sit in Zapier, so they're not tied to the harness you built them in. Tools like Zapier Tables give your agents a long-term memory they can read from and write to regardless of which model is at the wheel.
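The idea behind those three points can be sketched in a few lines of Python. This is a conceptual illustration only: `SharedLayer`, `HarnessClient`, and every field name are hypothetical, and none of it represents Zapier's actual API. It assumes a single layer that owns the app connections, the permission rules, and the long-term context, with each harness acting as a thin client of that layer.

```python
from dataclasses import dataclass, field


@dataclass
class SharedLayer:
    """Hypothetical platform layer that outlives any single harness."""
    connections: dict[str, str] = field(default_factory=dict)  # app -> credential reference
    policy: dict[str, set[str]] = field(default_factory=dict)  # role -> apps it may use
    context: list[str] = field(default_factory=list)           # long-term notes
    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def call_app(self, harness: str, role: str, app: str) -> bool:
        # Governance is checked here, once, for every harness.
        allowed = app in self.connections and app in self.policy.get(role, set())
        self.audit_log.append((harness, role, app, allowed))
        return allowed


@dataclass
class HarnessClient:
    """Hypothetical harness that keeps no connections or rules of its own."""
    name: str
    layer: SharedLayer

    def act(self, role: str, app: str, note: str) -> bool:
        ok = self.layer.call_app(self.name, role, app)
        if ok:
            self.layer.context.append(note)  # context lands in the shared layer
        return ok


layer = SharedLayer(
    connections={"crm": "cred-ref-1"},
    policy={"support": {"crm"}},
)

old_harness = HarnessClient("harness_a", layer)
first = old_harness.act("support", "crm", "Customer prefers email.")

# Switching harnesses: the new client points at the same layer.
new_harness = HarnessClient("harness_b", layer)
second = new_harness.act("support", "crm", "Renewal is due in March.")
denied = new_harness.act("support", "billing", "Attempted refund.")
```

In this sketch, the second harness inherits the CRM connection, the support role's permissions, and the first harness's note without any reconfiguration, and the request for an unconnected app is denied and logged by the same rules.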

If you're experimenting with new AI tools on the regular, you need a secure layer that lets you hang on to your app connections, context, and rules. That's what Zapier provides.