When building software, how comfortable would you be leaving the room? Describe what needs to be done, hand it to an AI, and come back to review the result. Just the same as a senior developer reviewing a junior's pull request, but this time, the junior is a machine.

Right now, the best AI coding tools are competing on their delegation features, offering more ways to put models to work on their own. Codex is designed exactly for that. Cursor, on the other hand, started as an AI pair programmer that keeps you in the loop, but now it has an agent workspace geared for delegation—so it's in the race as well.

I've spent a lot of time inside both apps, both for personal use and to put them through the wringer for this comparison. After dozens of hours of testing and building, I have a lot of insights (and opinions…) on which tool is best for which job.

While on the surface, Codex and Cursor look like they're converging, you'll find fundamentally different assumptions under the hood. Let's pop it.

Table of contents:

Cursor inherits VS Code's experience; Codex has no IDE of its own

Cursor indexes your codebase before you ask; Codex clones it before every task

Both offer parallel agent execution: Codex has stronger security defaults, Cursor is more agile

Codex defaults to OpenAI models; Cursor lets you pick your favorite

In terms of token usage, Codex is more efficient than Cursor

Codex vs. Cursor at a glance

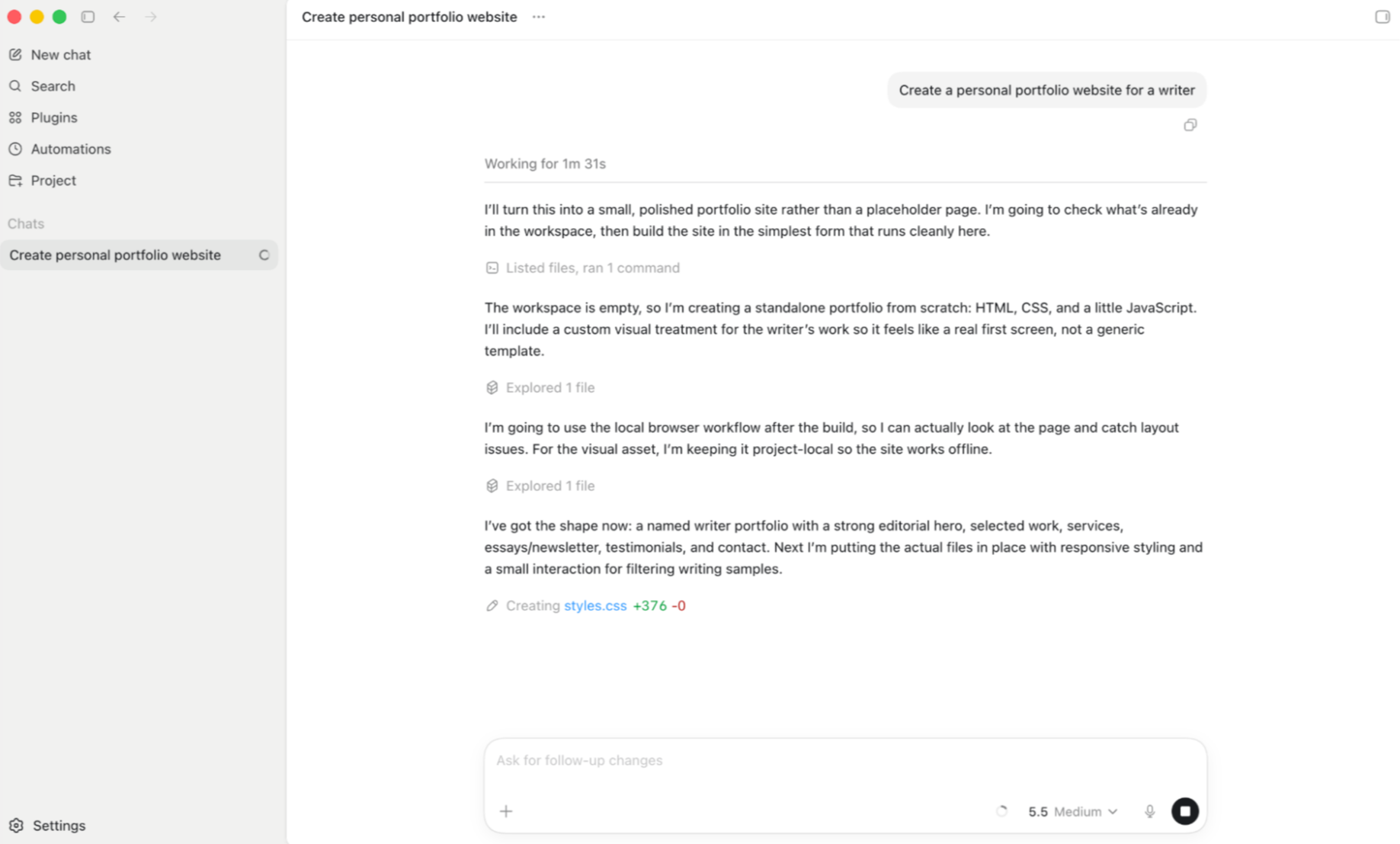

Codex is a coding agent built entirely around delegation. You describe what needs to be done in a web app, the CLI, or a macOS desktop app; it spins up an isolated environment, works through the code on its own, and hands you back a result to review. There is no code editor.

Cursor is an AI-powered IDE, a fork of VS Code with AI tab autocomplete, inline diffs, and an agent chat inside the editor. Now, with the Cursor 3 release, it's closing the gap on agent-only tools with the agent workspace, a separate interface where you can delegate tasks and review them once complete.

Both expect you to know how to code and to think like an engineer. This is a barrier for non-technical users, but if you have patience and curiosity, both are accessible enough to learn.

| | Cursor | Codex |
|---|---|---|
| Coding workflow | ⭐⭐⭐⭐⭐ Full IDE with tab autocomplete, inline diffs, and agent workspace; you choose how close to stay to the code | ⭐⭐⭐⭐ Delegation-only interface (web, CLI, macOS); no editor, pure async review (describe a task, get back a PR) |
| Codebase understanding | ⭐⭐⭐⭐⭐ Persistent semantic index built from embeddings; instant context across files and functions, shared across your team | ⭐⭐⭐ Fresh clone per task with targeted search; clean isolation but slower to start |
| Agent & delegation | ⭐⭐⭐⭐ Agent workspace supports up to 8 parallel agents, local-to-cloud handoff, and side-by-side model comparison with /best-of-n | ⭐⭐⭐⭐⭐ Built for delegation from the ground up; async cloud agents run up to 30 minutes, with native GitHub PR review |
| Model flexibility | ⭐⭐⭐⭐⭐ OpenAI, Anthropic, Google Gemini, xAI Grok, plus Cursor's own proprietary Tab autocomplete model | ⭐⭐ OpenAI-only on web and desktop; Codex CLI is open source and can be pointed at other providers with some configuration |
| Token efficiency | ⭐⭐⭐ Heavy context loaded per message; heavy users regularly hit ceilings and risk overage costs | ⭐⭐⭐⭐⭐ One of the most efficient agents available; internal reasoning keeps token burn low, running 2–4x leaner than Cursor |
| Pricing value | ⭐⭐⭐ $20/month for the IDE; past pricing changes have surprised users with unexpected bills | ⭐⭐⭐⭐ Bundled into ChatGPT Plus and above ($20/month); no separate subscription needed if you already pay for ChatGPT |

Cursor inherits VS Code's ecosystem and experience; Codex has no IDE of its own

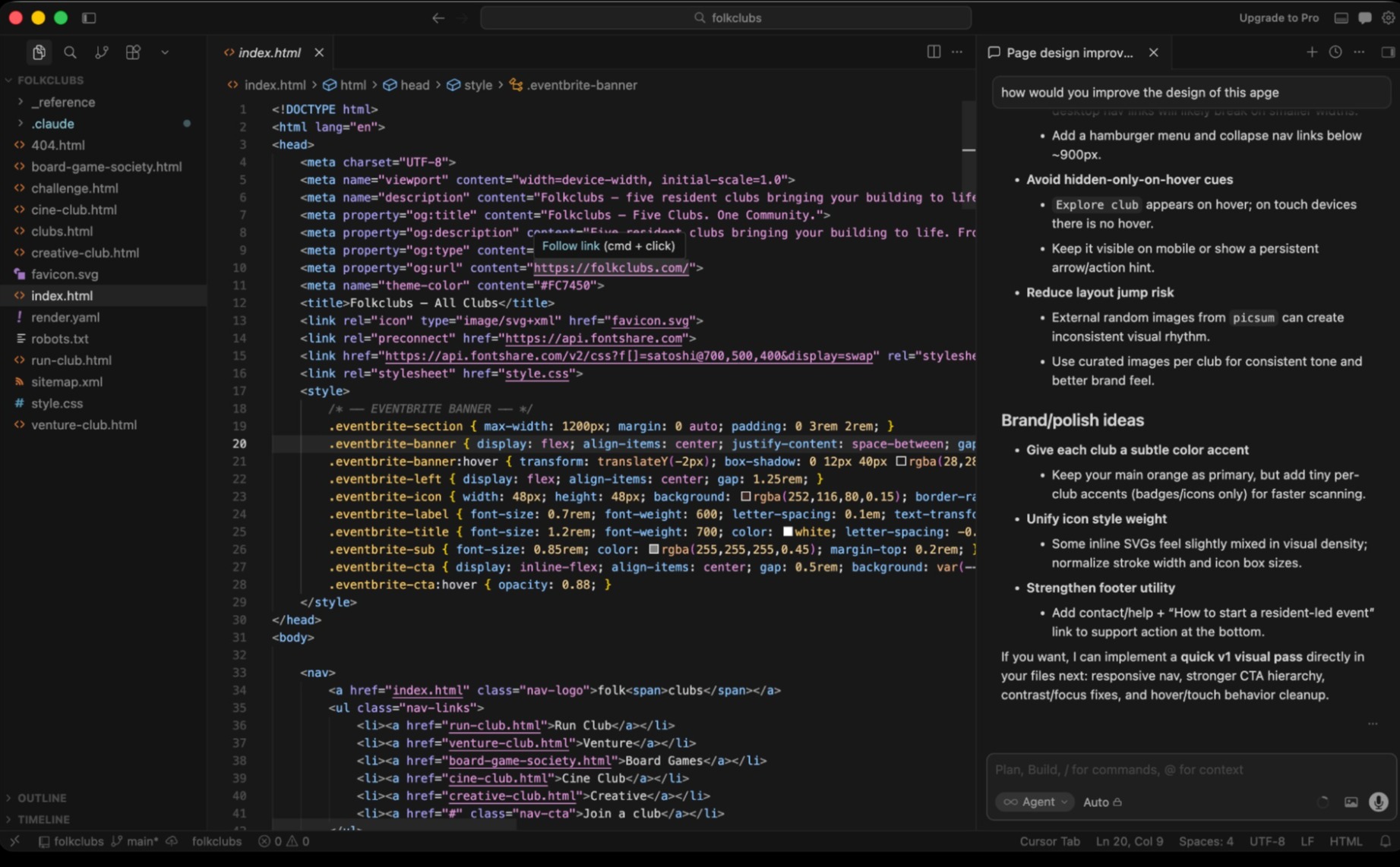

As a fork of VS Code, Cursor inherits its core user experience, layering AI features on top of it. Codex isn't an IDE, instead offering an interface where you can prompt, keep track of chat threads, and review results once the agent finishes working.

Developers open Cursor and are already home. The file tree is in the same place, extensions work the same, your keybinds all work. If you already use VS Code, the switching cost is close to zero.

Cursor is a direct fork of VS Code, meaning that everything carries over without reconfiguration. Well, almost everything: Microsoft clocked Cursor's popularity and started restricting some of its own extensions, such as Remote SSH and Live Share, from running outside the original VS Code, adding a point of friction to the switch.

The AI features layer on top of an environment you already know. As you code, you get autocomplete suggestions that you can accept by tapping tab. Highlight anything to ask questions or refactor on the spot, inspect inline diffs, and use the agent chat panel for longer discussions or bigger changes across files.

Codex takes a different bet entirely. There's no editor. When you delegate a task, you describe it in a web app, a CLI, or a macOS desktop app. The agent works through the code in a cloud sandbox and returns when it's done. You review a pull request, not a diff inside an IDE.

For developers who spend most of their day writing and editing code, this requires a mindset shift. In Cursor, you're the developer with an AI assistant. In Codex, you're closer to the senior: you brief, you set expectations, you review. The hands-on writing happens elsewhere, or not at all.

The delegation experience is gaining traction, which explains why Cursor 3 adds an agent workspace that narrows the gap to Codex. It's a separate interface from the IDE where you can manage multiple agents as they write, edit, or refactor multiple files at the same time.

Cursor is actively promoting this new interface as the future of software development, but you can still access the classic IDE at any time. The main advantage here is choice: if you prefer to have both experiences in a single platform, Cursor lets you choose how close you want to be to the code.

Cursor indexes your codebase before you ask anything; Codex clones it before every task

When you open a project in Cursor, it builds a persistent semantic index of your codebase using embeddings and a Merkle tree that updates as files change.

In essence, it works like a RAG framework for your codebase: you can ask about your payment flow logic, and the agent will both locate the code and be able to discuss it, even if your variable names aren't explicitly connected to payments.

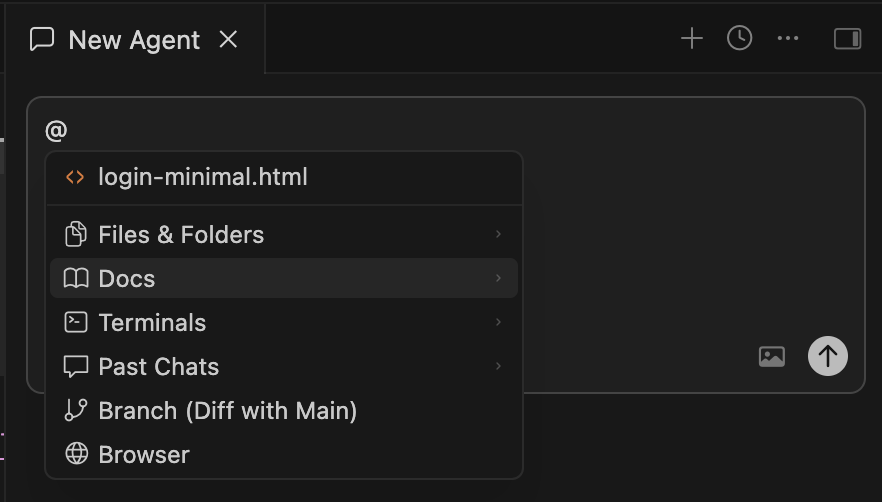

This lets AI understand your code deeply, also making it great for brainstorming on architecture and implementation. When you're ready to build, you can directly mention specific functions, files, or documentation with the @ sign, so the system will use them for context or edits.

The index is built locally, but the embeddings are stored on Cursor's cloud servers. The bad: if someone gained access to that vector database, they could potentially reconstruct parts of your code by reversing the embeddings. The good: indexing large codebases is extremely fast for teams. After a project is indexed for the first time, any teammate who opens it starts from that shared index, and only the changes are processed from that point on.
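To see why "only the changes are processed" is cheap, here's a minimal sketch of Merkle-style change detection. It isn't Cursor's implementation: the file contents, function names, and hashing scheme are all assumptions for illustration. A production tree would also walk subtrees to narrow down changes, while this toy version only uses the root as a shortcut.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def leaf_hashes(files: dict[str, str]) -> dict[str, str]:
    """Hash each file's content; keys are paths, values are hex digests."""
    return {path: sha256_hex(content.encode()) for path, content in files.items()}


def merkle_root(leaves: dict[str, str]) -> str:
    """Fold the sorted leaf hashes pairwise until a single root remains."""
    level = [sha256_hex(f"{p}:{d}".encode()) for p, d in sorted(leaves.items())]
    if not level:
        return sha256_hex(b"")
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = [
            sha256_hex((level[i] + level[i + 1]).encode())
            for i in range(0, len(level), 2)
        ]
    return level[0]


def changed_paths(old: dict[str, str], new: dict[str, str]) -> list[str]:
    """Return paths added, removed, or modified between two snapshots."""
    if merkle_root(old) == merkle_root(new):
        return []  # identical roots: nothing needs re-embedding
    return sorted(p for p in old.keys() | new.keys() if old.get(p) != new.get(p))


# Hypothetical snapshots of a tiny repository, before and after one edit.
before = leaf_hashes({"billing/charge.py": "def settle(): ...", "README.md": "# app"})
after = leaf_hashes({"billing/charge.py": "def settle(amount): ...", "README.md": "# app"})
print(changed_paths(before, after))  # ['billing/charge.py']
```

In this sketch, only `billing/charge.py` would need new embeddings; the untouched README keeps its existing entry.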

Context is different in Codex: every task starts fresh. The platform spins up a new container, clones your repository into it, and uses targeted search steps to locate the parts of the code connected to your prompt. That means no persistent index and no embeddings sitting on a server between tasks.

It's clean, but you pay for that cleanliness with startup time: Codex can't give you a straight answer without searching and reading through your codebase first. And since it's more geared for autonomy, that tradeoff makes sense.

This feature is one of the core reasons why Cursor is better for pair programming. The index helps both you and the AI models navigate the code by what it means and what it does, so both human and machine can make a better plan before executing it.

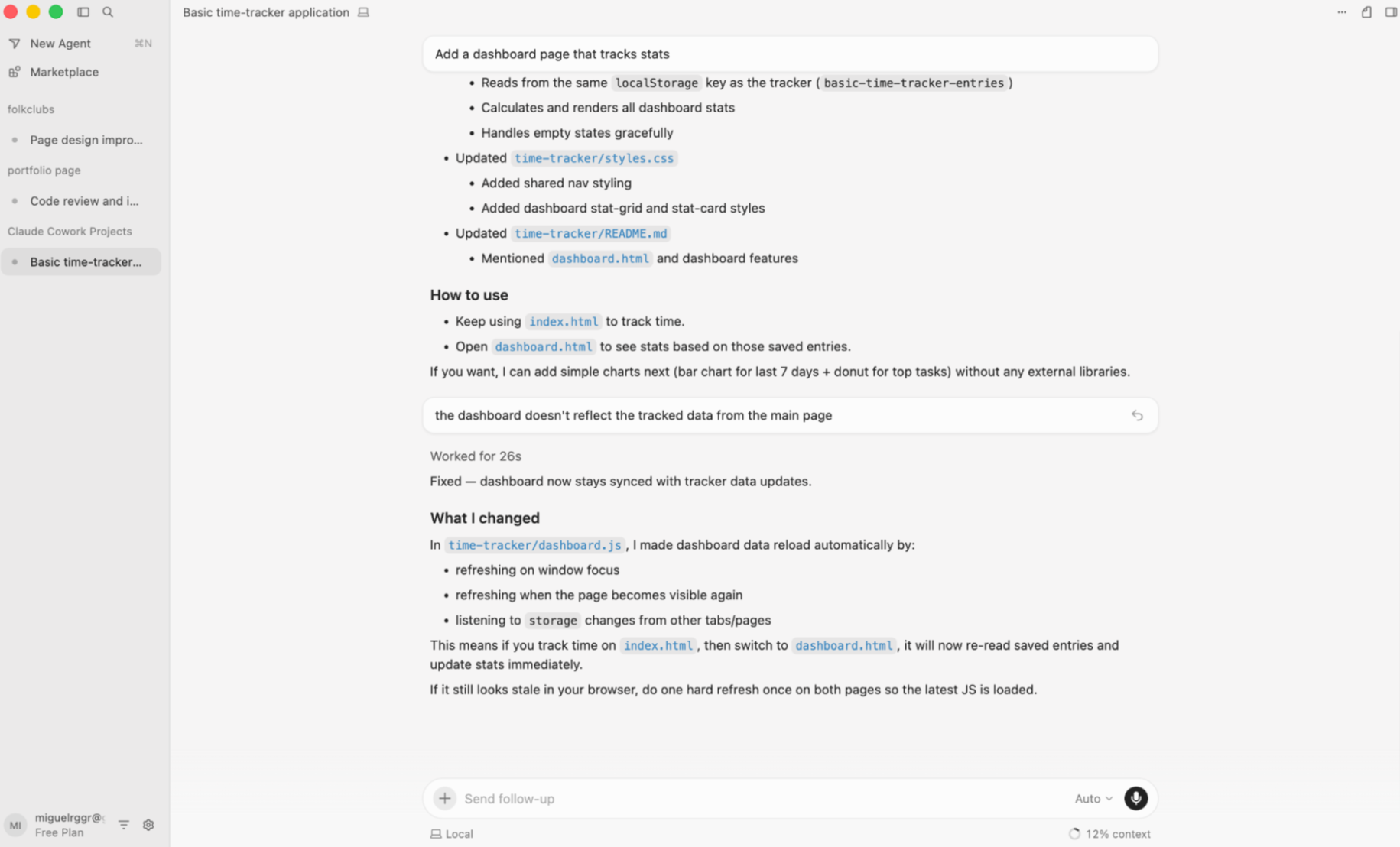

Both offer parallel agent execution; Codex's have stronger security defaults, but Cursor's are more agile

Cursor and Codex both let you run multiple agent tasks at the same time. By default, Codex cuts the agent off from the network while it works, whereas Cursor keeps a connection to trusted domains to provide more flexibility when testing code.

Running one AI agent at a time is already useful, but the productivity math changes dramatically when you run more in parallel: one agent handles the bug fix, another writes the tests, a third updates the documentation. When orchestrated well, you can collapse the time it takes to ship a new feature.

Both Cursor and Codex support parallel agent execution, locally and in the cloud, but there are differences depending on where the agent is actually working.

Running agents locally means the agent harness runs on your machine, consuming your CPU and RAM. The AI model itself stays in the cloud; what's local is the coordination layer. A typical high-end machine can run around eight agents simultaneously before everything starts slowing down.
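As a rough picture of what a local coordination layer does, here's a small sketch that caps how many agents run at once. The `run_agent` function is a hypothetical stand-in (the sleep replaces real harness work), and the cap of eight simply mirrors the ballpark above; neither tool necessarily works this way.

```python
import asyncio


async def run_agent(task: str, slots: asyncio.Semaphore) -> str:
    """Hypothetical agent run; the sleep stands in for real harness work."""
    async with slots:  # wait here if all slots are taken
        await asyncio.sleep(0.01)
        return f"done: {task}"


async def run_all(tasks: list[str], max_parallel: int = 8) -> list[str]:
    """Run every task, but never more than max_parallel at the same time."""
    slots = asyncio.Semaphore(max_parallel)
    return await asyncio.gather(*(run_agent(t, slots) for t in tasks))


results = asyncio.run(run_all(["fix bug", "write tests", "update docs"]))
print(results)  # ['done: fix bug', 'done: write tests', 'done: update docs']
```

The semaphore is the whole trick: extra tasks queue up instead of competing for the same CPU and RAM.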

Cloud agents work differently. Both platforms provision a fresh virtual machine per task, give the agent a sandboxed environment to work in, and destroy the VM when the task is done. Only the output code and pull request remain. You can spin up as many as you need, though more agents mean faster usage consumption.

In the local vs. cloud discussion, Cursor has an edge: you can hand off agent tasks you're running on your machine to the cloud, and back again if needed. This is useful for freeing up local resources or pushing a long-running process to the cloud: instead of working overtime waiting for an agent to finish a task, just review it in the morning when it's done.

Delegation can speed up shipping, but it also changes the security calculus. In this area, Codex and Cursor ship with different out-of-the-box configurations.

Codex's default posture is locked down. When the container starts, there's a brief moment when the network is on: it contacts trusted domains to download dependencies. After that, the network access is turned off and the agent is fully contained. It can't be hijacked by anything it encounters mid-task, such as a URL it's told to visit or an unexpected redirect.

Cursor's default posture is productive. The network stays on, but not open: agents have access to an allowlist of domains commonly used in software development, including Docker and Cloudflare. They also have browser access, which means an agent can test changes against a staging URL rather than just reasoning about whether they'll work. The sandbox is designed to mimic a developer at a workstation.

In both cases, you can adjust the network access controls and allowlists, so both platforms are flexible in this aspect. Make sure you're aware of what your agent has access to before integrating it into your workflow.
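If you've never looked at how a domain allowlist behaves, here's a minimal sketch of the idea. The two domains are placeholders inspired by the Docker and Cloudflare mention above; the actual default lists, and how either platform enforces them, are not something this example claims to reproduce.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; the real defaults on either platform may differ.
ALLOWED_DOMAINS = {"docker.com", "cloudflare.com"}


def is_allowed(url: str, allowed: set[str] = ALLOWED_DOMAINS) -> bool:
    """Allow a URL only if its host is an allowed domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed)


print(is_allowed("https://hub.docker.com/r/library/python"))  # True
print(is_allowed("https://docker.com.evil.example/install"))  # False
print(is_allowed("https://example.org"))                      # False
```

Note the second case: matching on the end of the hostname, rather than searching for the domain anywhere in the URL, is what stops a lookalike address from slipping through.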

Codex defaults to OpenAI models; Cursor lets you pick your favorite

Codex works out of the box with the OpenAI model lineup; the Codex CLI is open source, meaning that you can point it at other models with some configuration. Cursor has a proprietary coding model and integrates with leading model providers, but the agent harness isn't open source, so you can't change it.

Codex runs on OpenAI's models. It launched on codex-1 and has been upgraded consistently since, now sporting the latest GPT-5.5. If you find that you like the agent harness but would like to try other models with it, there's an escape hatch: the Codex CLI is open source. You can inspect it, modify it, and point it at other model providers by changing file-level configurations. The web app and desktop app stay on OpenAI's models regardless.

Cursor takes the opposite approach. It connects to every major provider: OpenAI, Anthropic, Google Gemini, and more. The routing is smarter than a single dropdown, too. For tab autocomplete, Cursor uses its own proprietary low-latency Tab model; for agent tasks, it auto-routes to the best fit while letting you override per session.

The most aggressive version of this flexibility is the /best-of-n command in the Cursor 3 agent workspace. You send the same prompt to multiple models simultaneously, review the outputs side by side, and pick the one you want.
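Conceptually, a best-of-n run is just a fan-out: one prompt, several models, outputs collected for comparison. The sketch below shows that pattern only. The two "models" are hypothetical local functions, not real provider calls, and nothing here reflects how Cursor implements the command internally.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


# Hypothetical stand-ins for model clients; real calls would hit provider APIs.
def model_a(prompt: str) -> str:
    return f"[model-a] {prompt.upper()}"


def model_b(prompt: str) -> str:
    return f"[model-b] {prompt.title()}"


def best_of_n(prompt: str, models: dict[str, Callable[[str], str]]) -> dict[str, str]:
    """Send one prompt to every model in parallel and collect outputs by name."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


candidates = best_of_n("add retry logic", {"a": model_a, "b": model_b})
for name, output in candidates.items():
    print(name, "->", output)
```

The picking step stays with you: the code gathers candidates, and a human reviews them side by side.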

One thing worth flagging: the Codex CLI is open source but defaults to a single vendor; the Cursor CLI is not open source, even though it integrates with multiple models and is more flexible out of the box.

Both harnesses can work across 9,000+ apps with Zapier

Once your agent writes the code, how does it actually do things in the real world? Most agents work in a vacuum—they can write a script that sends a Slack message or creates a HubSpot contact, but they still need someone to wire up the authentication, handle token refresh, and make sure nothing silently breaks when a credential expires.

That's the work Zapier handles. Both Cursor and Codex connect to the same integration layer—9,000+ pre-built, maintained apps, with OAuth-managed auth and access controls that travel with the integration regardless of which agent is running the code. Your credentials never reach the model.

There are three interfaces into that layer, all compatible with Cursor and Codex:

Zapier MCP for chat-native environments. Configure which actions your agent can call; it invokes them in plain language as it works.

Zapier SDK for code editors. A TypeScript package with generated types for every app and action, plus raw API access to ~3,000 additional apps via fetch. Auth, token refresh, and retries handled by Zapier's infrastructure.

Zapier CLI for the terminal, with a fast install path (npx zapier) for scripts and one-off runs.

All three run through the same governance layer—same credentials, same access controls, whether it's Cursor or Codex doing the work.

In terms of token usage, Codex is more efficient than Cursor

Codex's agent harness is leaner than Cursor's, delivering comparable results with lower token consumption.

Even when you send the same prompt to Codex and Cursor, the token burn is different. This has to do with a range of factors, including which model you're using and how the agent harness assists the model as it works.

In Cursor's case, every time you send a prompt, a lot gets passed behind the scenes, which explains why users can say "hello" to the agent and burn 18.5k tokens. This context includes the editor state, open files, anything returned from your codebase index, MCP server metadata, and model-specific optimization instructions.

Codex is one of the most efficient agents on the market today, loading very little context at the start compared with Cursor. Even after completing the research and plan stages of the agentic loop (two tasks that typically consume lots of tokens), it stays leaner because it does a lot of its reasoning internally rather than outputting it as text messages. This is consistent with the delegation-oriented user experience: the model doesn't need to explain its steps as it works, so it can focus on delivering the end result.

While there are no official benchmarks comparing Codex's and Cursor's token usage, developers report that Codex is around two to four times more efficient. Due to usage limits on both platforms, this generally means that you can prompt Codex for longer before hitting the ceiling, whereas with Cursor, you might cross into overage territory sooner.
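To make the two-to-four-times figure concrete, here's a back-of-the-envelope sketch. The 1,000,000-token budget is a made-up number, and applying the reported ratio to the 18.5k-token "hello" figure is an assumption for illustration, not a measurement of either tool.

```python
CURSOR_TOKENS_PER_TURN = 18_500  # the "hello" figure quoted earlier
BUDGET = 1_000_000               # hypothetical token allowance


def turns_within(budget: int, tokens_per_turn: float) -> int:
    """How many turns of a given size fit inside the budget."""
    return int(budget // tokens_per_turn)


cursor_turns = turns_within(BUDGET, CURSOR_TOKENS_PER_TURN)
codex_turns_2x = turns_within(BUDGET, CURSOR_TOKENS_PER_TURN / 2)  # 2x leaner
codex_turns_4x = turns_within(BUDGET, CURSOR_TOKENS_PER_TURN / 4)  # 4x leaner

print(cursor_turns, codex_turns_2x, codex_turns_4x)  # 54 108 216
```

Under those assumptions, the same budget stretches from 54 turns to somewhere between 108 and 216. Real sessions vary widely by model, prompt, and task, so treat this as intuition rather than a forecast.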

Cursor's pricing has been more unstable in the past

Pricing for AI software development tools is moving toward a token-based model. Cursor has struggled with communication around pricing changes, leading to surprisingly high bills for developers. OpenAI has been better at communicating pricing changes, but the value of the paid tiers has progressively tightened and become more complex, leading to frustration in the user base.

In the AI coding category, the monthly subscription price is becoming the entry ticket, not the full package.

Cursor made its transition in the worst possible way. In the past, Pro users would get 500 fast requests and then get unlimited responses at a slower rate. In June 2025, that changed to $20 worth of usage, with anything beyond that billed at enterprise API rates. The change was poorly communicated: some users were frustrated to run out of usage after a handful of requests, while others didn't expect high overage costs for exceeding the bundled usage. Cursor issued a public apology shortly after and offered refunds for unexpected charges between mid-June and early July.

OpenAI's arc hasn't been as dramatic, but still bumpy. Codex launched with generous allowances, including extra usage, lulling users into a misleading sense of abundance. Then came the 5-hour rolling windows, which limit how much you can run in any given period and force usage to be distributed over time. Even though this is now the standard in the AI coding sphere, users felt blindsided by the change.
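If rolling windows are new to you, here's a generic sketch of how one behaves. The cap of 100 units is hypothetical, and this is a textbook sliding-window limiter, not a description of how OpenAI meters Codex usage.

```python
from collections import deque


class RollingWindowLimit:
    """Allow usage only while the total in the trailing window stays under a cap."""

    def __init__(self, cap: int, window_seconds: float) -> None:
        self.cap = cap
        self.window = window_seconds
        self.events: deque[tuple[float, int]] = deque()  # (timestamp, units)

    def try_spend(self, now: float, units: int) -> bool:
        # Drop events that have aged out of the trailing window.
        while self.events and now - self.events[0][0] >= self.window:
            self.events.popleft()
        used = sum(u for _, u in self.events)
        if used + units > self.cap:
            return False  # over the cap: wait for older usage to expire
        self.events.append((now, units))
        return True


HOUR = 3600.0
limit = RollingWindowLimit(cap=100, window_seconds=5 * HOUR)  # cap is hypothetical
print(limit.try_spend(0 * HOUR, 80))  # True
print(limit.try_spend(1 * HOUR, 30))  # False: 80 + 30 exceeds the cap
print(limit.try_spend(5 * HOUR, 30))  # True: the first spend has aged out
```

That middle line is the experience users complained about: a burst early in the window blocks you until the old usage expires, which is how the limit spreads work over time.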

More important than the play-by-play of pricing drama are the levers that drive pricing changes:

Every new model release shifts costs across the board.

Changes in the agent harness can lead to price increases as well: even though model price remains the same, the tools that control it can increase usage.

You'll feel this more strongly in Cursor if you switch models frequently. As for Codex, you'll feel it mostly when OpenAI releases a new model (which is all the time).

When choosing a paid plan, overestimating your usage and upgrading earlier is the more predictable path. The higher paid plans usually have more features and higher limits, so you won't be stopped mid-work by a usage alert message.

Cursor vs. Codex: Which should you choose?

If you're not a developer, definitely go with Codex—it'll save you a massive learning curve. If you are a developer:

Choose Cursor if you want to stay close to the code: you write your own features, you want AI filling in the gaps and handling the tedious parts, and you like seeing the diff as it happens. And with the Cursor 3 agent workspace, you now have delegation included in the mix, so it's starting to feel like an all-in-one, from the IDE to autonomous agents.

Choose Codex if you're ready to delegate whole tasks and review the output, you think in terms of outcomes, not sessions, and you want to spend more time reviewing PRs than writing lines. And if you're already paying for ChatGPT Plus, Pro, or Team, Codex is included: no extra subscription, zero additional entry cost.

There's also a case for using both. You can lean on Cursor for AI pair programming and hand Codex sandboxed work on bug fixes or new features, then review and integrate the results at the end.

Over to you: are you staying in the room, or leaving AI to do most of it on its own?
