Meta has introduced a new flagship AI model called Muse Spark and sent the Llama "herd" of models to the farm upstate. It's a dramatic shift in AI strategy from a company that once pioneered open models.

Muse Spark is a multimodal reasoning model. It's basically the Facebook parent company's response to the models from OpenAI, Anthropic, and Google. Unlike the Llama models, Muse Spark is a closed-weight proprietary model. You can't download it from Hugging Face or run it on your own machine. Right now, it's only accessible through Meta AI.

It's a shame to see Meta pivot away from Llama, but it's understandable given the rising competition from Chinese open models like DeepSeek and Kimi, as well as the need to stay competitive with the other American AI labs. So, let's look at how Muse Spark stacks up.

What is Muse Spark?

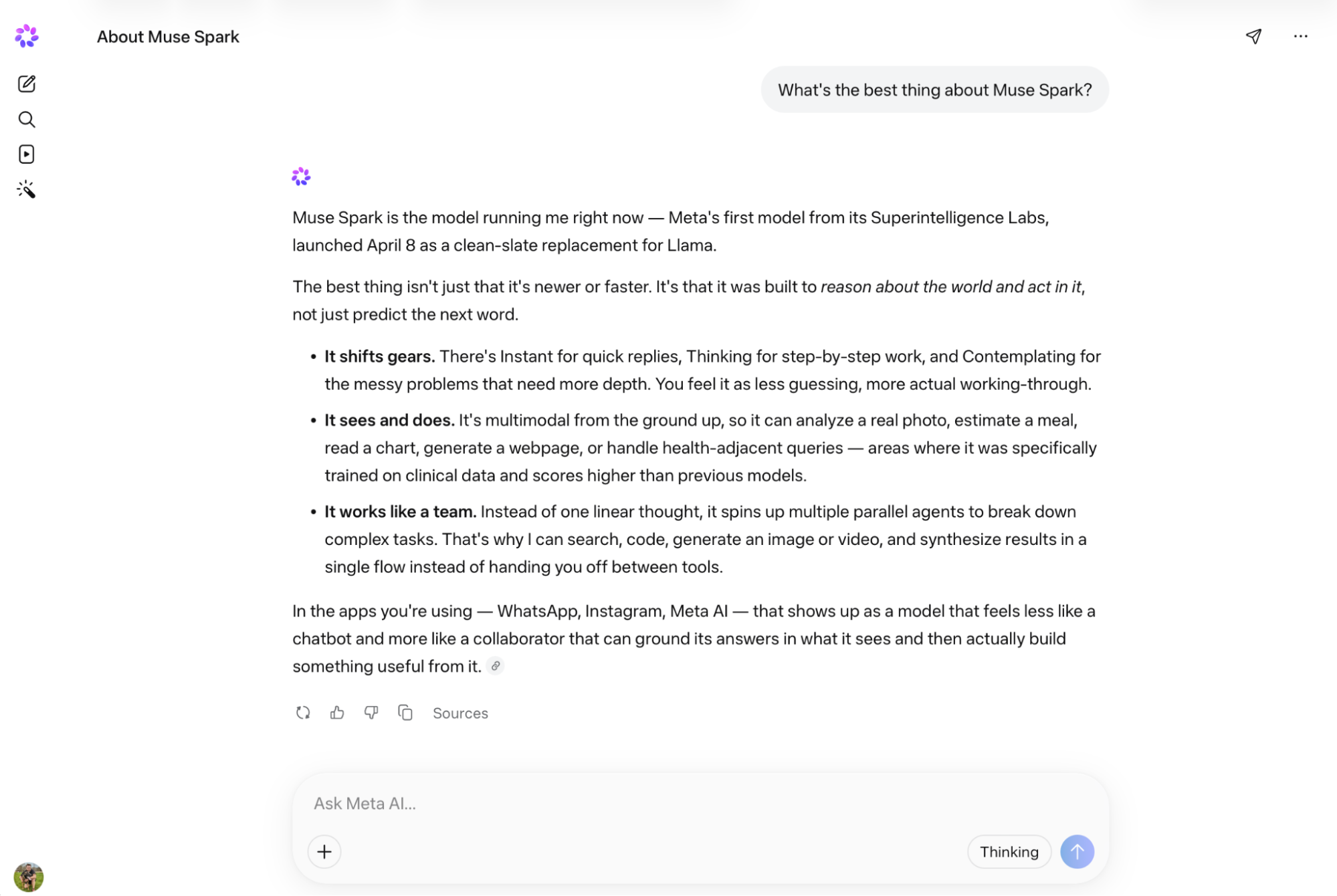

Muse Spark is the first model developed by the awkwardly titled Meta Superintelligence Labs. Like other frontier models, Muse Spark is a multimodal reasoning model that supports tool use and agentic behaviors. In other words, it can analyze visuals as well as text, decide whether to generate an image or search the web in response to a question, and hand tasks off to sub-agents.

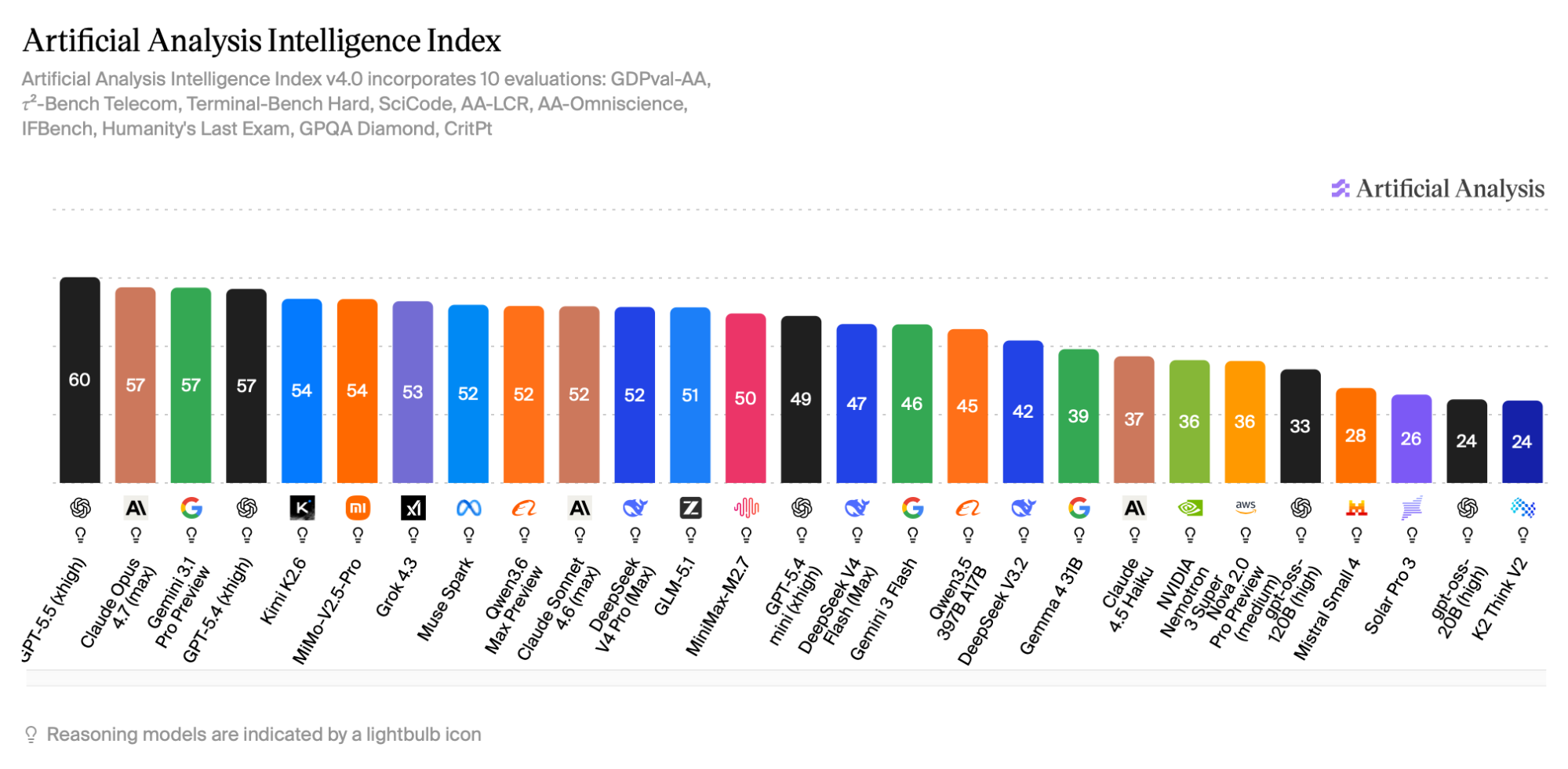

And Muse Spark is legitimately a frontier model. It's currently ranked seventh on Artificial Analysis, behind models like GPT-5.5 and 5.4 (extra high), Claude Opus 4.7 (max), Gemini 3.1 Pro Preview, and Kimi K2.6, and ahead of models like Claude Sonnet 4.6 (max) and DeepSeek V4 Pro (max). For context, Llama 4 has been out of the top charts for almost a year.

Muse Spark has three modes: Instant for quick replies, Thinking for regular reasoning, and Contemplating, which runs multiple agents reasoning in parallel and is akin to the deep reasoning modes in other models.

Muse Spark is meant to be the first in a family of models. Details are scarce, but it is apparently the "first step on [Meta's] scaling ladder and the first product of a ground-up overhaul" of its AI program. Although Meta has previously announced AI models that never shipped, including Llama Behemoth and Llama Reasoning, it seems like this really is the start of a big refresh.

Part of that is a brand revamp. Meta says Muse Spark has "capabilities for personal superintelligence" and was specifically trained on health reasoning. Meta claims to have worked with more than 1,000 physicians to develop training data. It can also apparently count calories from an image. It seems to me that Meta hopes its AI tools will go from an annoying pop-up inside its apps to a tool that people rely on. We'll see if it succeeds.

One area where Muse Spark seems to excel is visual reasoning. Part of this is the underlying model and part is the built-in tools. It failed to solve a Sudoku puzzle in my testing, but it was a tricky edge case (though ChatGPT and Claude passed it).

Right now, Muse Spark is powering the Meta AI app and Meta AI features in Meta's other apps like WhatsApp, Instagram, and Facebook. It's due to come to other products like the Meta Ray-Bans soon. There's a private API preview for "select users," but it's unclear if and when that will be more widely available.

It's also worth noting that there's no Muse Spark AI coding tool or desktop app. Both OpenAI and Anthropic have leaned heavily into agentic coding and workflow tools over the last several months, and it will be interesting to see if Meta follows.

Meta AI: How to try Muse Spark

Meta AI, the AI assistant built into Facebook, Messenger, Instagram, and WhatsApp, now uses Muse Spark—at least in the U.S. The best place to check it out is the dedicated web app.

What happened to Llama?

In short, Llama was outcompeted by Chinese labs like DeepSeek and Alibaba's Qwen team. By the time Llama 4 shipped in April 2025, it was already trailing DeepSeek V3 and other top open models. The announced Behemoth and reasoning models that were meant to be competitive never shipped. Llama 4's benchmark performance was also overstated: specially tuned versions of the model were used to inflate scores.

All told, Llama 4 never had the impact that Llama 3 did, and the AI landscape moves incredibly quickly.

By June 2025, Meta was reorganizing. It formed Meta Superintelligence Labs (still a ridiculous name), hired talent from OpenAI, Anthropic, and Google with wild compensation packages, appointed a new Chief AI Officer, went through a round of layoffs, and otherwise fully restructured its approach to AI. It's clear that, by this point, the company had decided that open source models were not its future.

Because they're open, you can still download the various Llama models. They're just out of date. There might one day be an open version of Muse Spark, much as Google has its Gemma models and OpenAI has its gpt-oss models, but Meta isn't pursuing an open-first strategy anymore.

It's disappointing, but there are other open source options out there now—though they come with some geopolitical implications.

What's next for Meta?

Meta is still midway through a massive AI shift. Muse Spark is the first model to come out of Meta Superintelligence Labs and the pivot to Personal Superintelligence, but it's safe to assume that there's a lot more in the pipeline. It's unclear how many more models there will be in the family, whether they'll be more or less powerful, and whether there'll be a public API.

To me, it looks like Meta has lost a bit of time in this transition period. Over the past year, AI agents, and AI coding agents in particular, have become increasingly important. They're what justify enterprise and high-value AI subscriptions. Meta may face a choice between trying to catch up to Anthropic and OpenAI and doubling down on being the personal AI embedded in the apps you use.

Either way, Meta is no longer a pioneering open source AI lab. It's another large tech company duking it out with its proprietary models.

This article was originally published in August 2023. The most recent update was in April 2025.