Agents are getting much better at building automations. But if you've spent time with AI automation tools, you've almost certainly hit both ends of a frustrating spectrum. You type something like "help me automate my sales process," and the first result is so far off that you're back to square one. Or you sink an hour into specifying every app, field, and branch before you ever see anything run. Neither path gets you to a useful first run quickly.

Over the past year at Zapier, as AI-native workflow builders moved from experiments to everyday work, we kept seeing the same pattern: the difference between someone who sticks with automation and someone who bounces usually isn't model quality. It's whether their first prompt lands in the sweet spot between too vague and fully specified.

Too little detail, and the system guesses wrong. Too much upfront, and people never get the "aha" that keeps them iterating.

This article is our working definition of that sweet spot: what we call the minimum lovable prompt—enough structure to get something real running fast, without requiring a complete spec on day one.

How we think about prompt quality for automation

We map prompt quality across a simple spectrum. It isn't a scorecard for beginners versus experts; it's a way to know what to add next when the first run misses.

Too vague means the AI can't narrow the problem. "Automate my sales process" could describe thousands of workflows. There's no clear goal, no named apps, no trigger—so the first output is a shot in the dark, and debugging doesn't teach you much because the intent wasn't constrained enough to learn from.

Fully specified is the other pole: every app named, every step typed out, and branching logic, field mappings, and formatting all defined. That's the end state you want for production (deterministic, repeatable, ownable), but you don't need to be there to get value. Many people stall because they think they have to build the whole workflow into the prompt before the first run.

Minimum lovable sits between them: the smallest amount of detail that still produces a first run worth reacting to. Something that runs, does something recognizably right, and gives you a concrete surface to refine. That's the bar we optimize for when we think about onboarding, templates, and how we coach people to prompt at Zapier.

What makes a prompt minimum lovable

Across the builders and workflows we've looked at, three ingredients consistently separate "minimum lovable" from "still too thin." We use these as a checklist before someone hits run or test.

1. Purpose and goal

Say what the workflow is for and what should happen in rough order.

You don't need exact thresholds, error handling, or every edge case—you need enough narrative that the system can infer the main sequence and the kind of actions (for example: qualify the lead, then notify the right person, then update the CRM). Without that thread, even correct apps and triggers get wired into the wrong story.

Include the "so what?" behind your goal. Knowing why the workflow exists lets the LLM reason about which of its available tools can reach that outcome, even when it can't follow the exact path you described.

2. Apps and tools

Name the actual systems.

"Notify me" isn't enough. Do you want Slack, email, or SMS? "Our CRM" still isn't enough: Salesforce, HubSpot, and a spreadsheet-backed process behave differently in automation. Specific apps remove guesswork and make the first run a test of your stack, not a generic demo.

3. Trigger

Be explicit about what starts the workflow.

Say which app, which event, and which resource (for example, when someone submits this Typeform, or when a deal moves to this stage). If the trigger is wrong or fuzzy, the rest of the workflow is built on sand, so this is non-negotiable for a lovable first pass.

When those three components are present, you're usually at the point where running once teaches you something: you can see misfires, tighten wording, and add structure on top of real output instead of theory.
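One way to make the three ingredients concrete is to treat them as fields that must all be filled before a prompt is assembled. The Python below is a minimal sketch under that assumption; the `MinimumLovablePrompt` class, its field names, and the labeled-line output are hypothetical illustrations, not part of any Zapier API.

```python
from dataclasses import dataclass


@dataclass
class MinimumLovablePrompt:
    """Hypothetical container for the three minimum lovable ingredients."""

    purpose: str      # what the workflow is for, in rough order, plus the "so what?"
    apps: list[str]   # named systems, e.g. "Typeform", "Slack", "HubSpot"
    trigger: str      # which app, which event, which resource starts the workflow

    def missing(self) -> list[str]:
        """Return the names of any ingredients that are still empty."""
        gaps = []
        if not self.purpose.strip():
            gaps.append("purpose")
        if not self.apps:
            gaps.append("apps")
        if not self.trigger.strip():
            gaps.append("trigger")
        return gaps

    def render(self) -> str:
        """Join the ingredients into one prompt, refusing if any are missing."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"missing: {', '.join(gaps)}")
        lines = [
            f"Trigger: {self.trigger.strip()}",
            f"Goal: {self.purpose.strip()}",
            f"Apps: {', '.join(self.apps)}",
        ]
        return "\n".join(lines)


# Usage: a lead-routing prompt with all three ingredients present.
prompt = MinimumLovablePrompt(
    purpose="Qualify the lead, notify the account owner, then update the CRM "
    "so no demo request goes unanswered",
    apps=["Typeform", "Slack", "HubSpot"],
    trigger="When someone submits our demo-request Typeform",
)
print(prompt.render())
```

Keeping the ingredients as separate fields makes a gap visible before anything runs: an empty trigger raises an error instead of producing a first output that's a shot in the dark.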

Moving from minimum lovable to production-worthy

Minimum lovable is deliberately not the ceiling. A complete instruction set—the kind you want for something you'll trust in production—layers on a few more components:

Branching logic: Define when to take one path versus another, and what happens on each path.

Step-level configuration: Specify the right action type per step and what each step must do (for example, Slack DM vs. channel post).

AI-step details: Specify what the model should produce, and in what format, whenever the workflow invokes one.

Field-level formatting: Clarify how messages read, how fields map step to step, and how outputs should look to downstream systems.

None of that has to arrive in the first prompt. In practice, the strongest workflows we see are built by people who run, observe, and extend: they start at minimum lovable, then promote detail into the spec as they learn where the model and integrations actually need constraints.

Why iteration beats front-loading

Research on how people work effectively with AI—including work like Anthropic's AI Fluency Index—emphasizes that high performers tend to treat the model as a thought partner: they prompt, push back on outputs, and adapt.

That maps cleanly to automation. A rough first workflow with visible reasoning and tight revision loops is often a better signal of eventual quality than a polished static spec that never touched a real run.

We care about minimum lovable prompts because they lower the cost of that loop: you get to "real enough to react" in one or two steps instead of burning motivation on either vagueness or over-specification.

How to use the minimum lovable prompt framework

Before you hit test or run, scan your prompt against the three components:

Purpose

Named apps

Concrete trigger

If one is missing, add a sentence or two there first—that's usually the highest-leverage fix. If all three are there, you're at minimum lovable: run it, read the output, then layer branching, step config, AI details, and field formatting as the gaps show up.
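As a rough illustration of that pre-run scan, here is a keyword heuristic in Python. It is a crude stand-in, not the LLM-based check Zapier runs in the product; the app list, cue phrases, and the `check_prompt` and `next_fix` names are all hypothetical and would miss many perfectly good prompts.

```python
import re

# Hypothetical, deliberately tiny vocabularies; a real check would need far more.
KNOWN_APPS = {"slack", "gmail", "hubspot", "salesforce", "typeform", "google sheets"}
TRIGGER_CUES = ("when ", "whenever ", "every time ", "each time ", "as soon as ")
PURPOSE_CUES = ("so that", "in order to", "then ")  # outcome or sequence wording


def check_prompt(prompt: str) -> dict[str, bool]:
    """Flag which of the three components a prompt appears to contain.

    Crude keyword matching only; it approximates the checklist, it does not
    understand the prompt.
    """
    text = prompt.lower()
    return {
        "purpose": any(cue in text for cue in PURPOSE_CUES),
        "named apps": any(
            re.search(rf"\b{re.escape(app)}\b", text) for app in KNOWN_APPS
        ),
        "concrete trigger": any(cue in text for cue in TRIGGER_CUES),
    }


def next_fix(prompt: str) -> str:
    """Suggest the first missing component, checked in checklist order."""
    for component, present in check_prompt(prompt).items():
        if not present:
            return f"Add a sentence about: {component}"
    return "Minimum lovable: run it, then refine."


print(next_fix("Automate my sales process"))
print(next_fix(
    "When someone submits our demo Typeform, score the lead, "
    "then post it to Slack so that sales follows up within a day."
))
```

The point of returning only the first gap is the same as the checklist's: fix the highest-leverage missing piece, run again, and let the output tell you what to add next.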

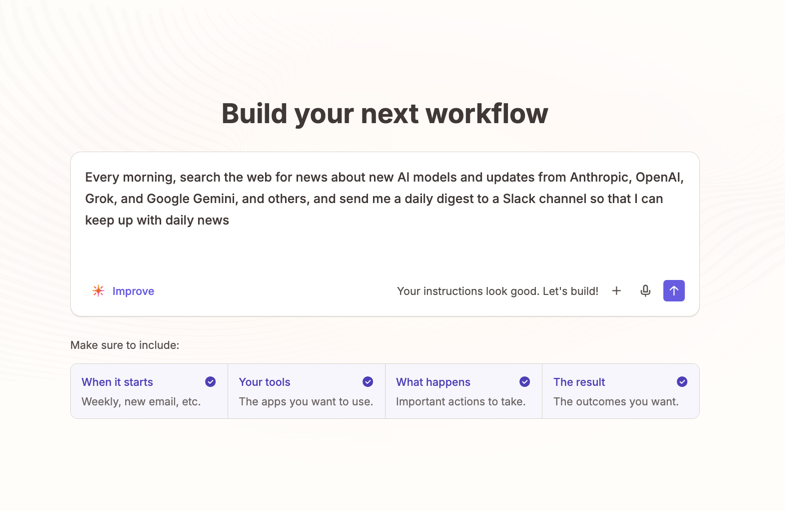

We've built this into the Zapier product as well: in the agentic building user experience, an LLM checks whether a prompt meets these "minimum lovable" criteria so that an agent can build it through to a first run. In early-access testing, we've seen this increase workflow activation by 10% or more.

Good prompts get you to a useful first run; great prompts come from iterating on that run, not from front-loading everything you might ever need.

What's next?

As models, connectors, and builder UX evolve, the exact words people use will change—but the underlying idea won't: constrain enough to learn, not so much that you never ship a first version.

We'll keep refining how we teach this in the Zapier product, docs, and programs that help people take their first steps with AI automation.

If your team has landed on a similar framework—or found edge cases where "minimum lovable" breaks—we'd love to learn from you.