Lately there's been a lot of interest in using the outbox pattern for reliability in event-driven services. We used it at Zapier for our Events API at scale.

There were a lot of upsides, but we also ran into some significant issues. Some of those came from the choices we made and the constraints we were operating under. Even so, this design gave us a lot of peace of mind for years.

We've now decided to move away from it. You can already see part of that direction in our earlier post on using a sidecar to reduce Kafka connections. I hope this post is useful if you're considering a transactional outbox backed by a local database in production.

Why we decided to use a transactional outbox

We run a very large managed Kafka cluster in AWS. Our critical workloads flow through our events systems, and we wanted to ensure reliability even when our Kafka brokers are under stress and produce requests time out. Our goal was to keep accepting events from different services in the face of downtime.

We have an events API service that provides an API to emit events to our Kafka cluster. It's a high-throughput service built in Go, but we ran into timeout issues with our Kafka cluster since producer latency would spike, especially during security updates and cluster upgrades. We also had a single point of failure around our Kafka cluster, and if it were to go down, we'd potentially lose critical events.

The challenge was that we wanted to keep accepting events even during a complete Kafka outage. That's when we decided to use a transactional outbox.

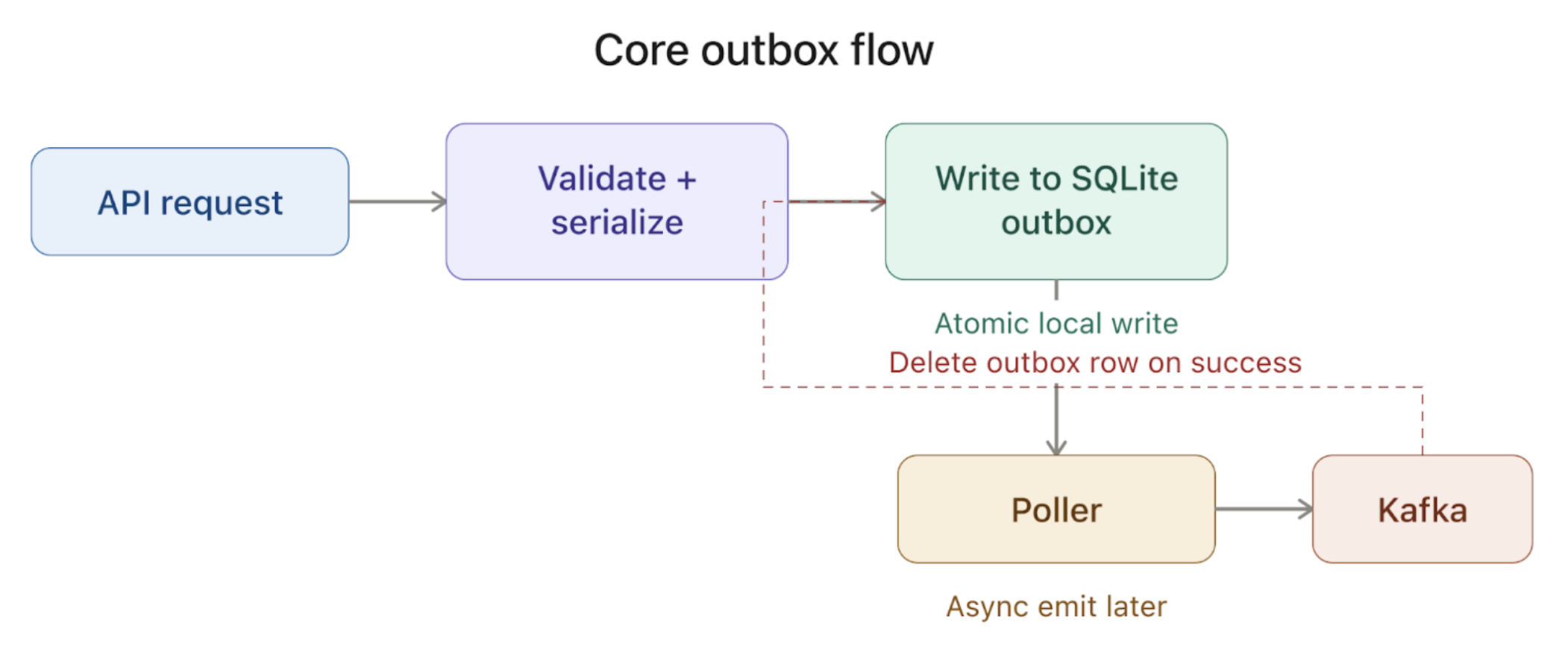

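This wasn't the textbook transactional outbox pattern, where a service writes its business data and an outbox row in the same database transaction. In our case, the important atomic step was the local database write of the event itself. Kafka emission happened later, and only for rows that had been successfully written to the outbox.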

We also decided to use SQLite for this outbox over a traditional RDS setup for a few practical reasons:

It gave us a local durable buffer without a provisioned database or network hop, and kept deployment simple across multiple regions and AWS accounts.

Our relational database infrastructure was also going through a transition at the time, and we didn't want this service coupled to that work.

Our events service API is built in Go and deployed using Kubernetes. It lets teams produce events to Kafka in Avro format via a simple API without worrying about internal details.

Once we receive the API request, we validate it and serialize it against the schema registry since the events are in Avro format. Before we introduced the outbox, the event would be emitted to Kafka directly after this step.

After we moved to the outbox, we wrote events to a local SQLite table instead. We kept the schema simple, storing fields like the key, timestamp, payload, event ID, and status.

In the earliest version of the outbox, we had a scheduled job that periodically scanned the outbox for in-progress events and retried emitting them to Kafka. We later replaced that with a Go channel-based poller that continuously picked up pending rows, emitted them to Kafka, and deleted the rows that were successfully sent. The delivery goal was still at-least-once, just as it had been before.
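The emit-then-delete loop can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not our production code: `Outbox` is a hypothetical in-memory stand-in for the SQLite table, `Producer` and `flakyProducer` are invented for the example, and a single `DrainOnce` pass stands in for the continuous channel-based poller.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// OutboxRow mirrors the fields listed above; the Go names are illustrative.
type OutboxRow struct {
	EventID   string
	Key       string
	Timestamp int64
	Payload   []byte
	Status    string
}

// Outbox is a hypothetical in-memory stand-in for one SQLite outbox table.
type Outbox struct {
	mu   sync.Mutex
	rows map[string]OutboxRow
}

func NewOutbox() *Outbox { return &Outbox{rows: make(map[string]OutboxRow)} }

// Insert stores the row as pending. In the real service this is the durable local write.
func (o *Outbox) Insert(r OutboxRow) {
	o.mu.Lock()
	defer o.mu.Unlock()
	r.Status = "pending"
	o.rows[r.EventID] = r
}

// Pending returns a snapshot of the rows that still need to be emitted.
func (o *Outbox) Pending() []OutboxRow {
	o.mu.Lock()
	defer o.mu.Unlock()
	out := make([]OutboxRow, 0, len(o.rows))
	for _, r := range o.rows {
		out = append(out, r)
	}
	return out
}

// Delete removes a row once it has been delivered.
func (o *Outbox) Delete(id string) {
	o.mu.Lock()
	defer o.mu.Unlock()
	delete(o.rows, id)
}

// Len reports how many rows are still in the outbox.
func (o *Outbox) Len() int {
	o.mu.Lock()
	defer o.mu.Unlock()
	return len(o.rows)
}

// Producer is a hypothetical synchronous producer: Send returns only
// after the broker has acknowledged the message or the send has failed.
type Producer interface {
	Send(key string, payload []byte) error
}

// DrainOnce makes one pass over the pending rows. A row is deleted only
// after Send succeeds, so a failure (or a crash between Send and Delete)
// leaves the row in place to be retried: at-least-once delivery.
func DrainOnce(o *Outbox, p Producer) int {
	sent := 0
	for _, r := range o.Pending() {
		if err := p.Send(r.Key, r.Payload); err != nil {
			continue // keep the row; a later pass retries it
		}
		o.Delete(r.EventID)
		sent++
	}
	return sent
}

// flakyProducer fails its first `failures` sends, then succeeds.
type flakyProducer struct{ failures int }

func (f *flakyProducer) Send(key string, payload []byte) error {
	if f.failures > 0 {
		f.failures--
		return errors.New("broker timeout")
	}
	return nil
}

func main() {
	o := NewOutbox()
	o.Insert(OutboxRow{EventID: "a", Key: "k1", Payload: []byte("x")})
	o.Insert(OutboxRow{EventID: "b", Key: "k2", Payload: []byte("y")})
	p := &flakyProducer{failures: 1}
	fmt.Println("sent:", DrainOnce(o, p), "remaining:", o.Len())
	fmt.Println("sent:", DrainOnce(o, p), "remaining:", o.Len())
}
```

Because the delete happens strictly after a successful send, a consumer may see the same event twice, which is the trade-off at-least-once delivery implies.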

We stored the SQLite database on an EBS-backed volume attached to each Kubernetes StatefulSet pod. That way the data survived pod crashes, and when the pod came back up, Kubernetes could reattach the same volume and continue from the existing outbox state.

However, this early version didn't scale for a few reasons:

Our volume of events was too much for one outbox. We instantly got SQLITE_BUSY errors.

We were initially running SQLite in default rollback journal mode, which didn't handle concurrent reads and writes very well.

How we scaled it

We took a few steps to scale this SQLite-based outbox:

Changed the journal mode to WAL

One of the first things we did was enable write-ahead logging (WAL) for our SQLite database. In WAL mode, readers and a writer can work concurrently: the writer appends to the WAL file while readers keep working from a stable snapshot.

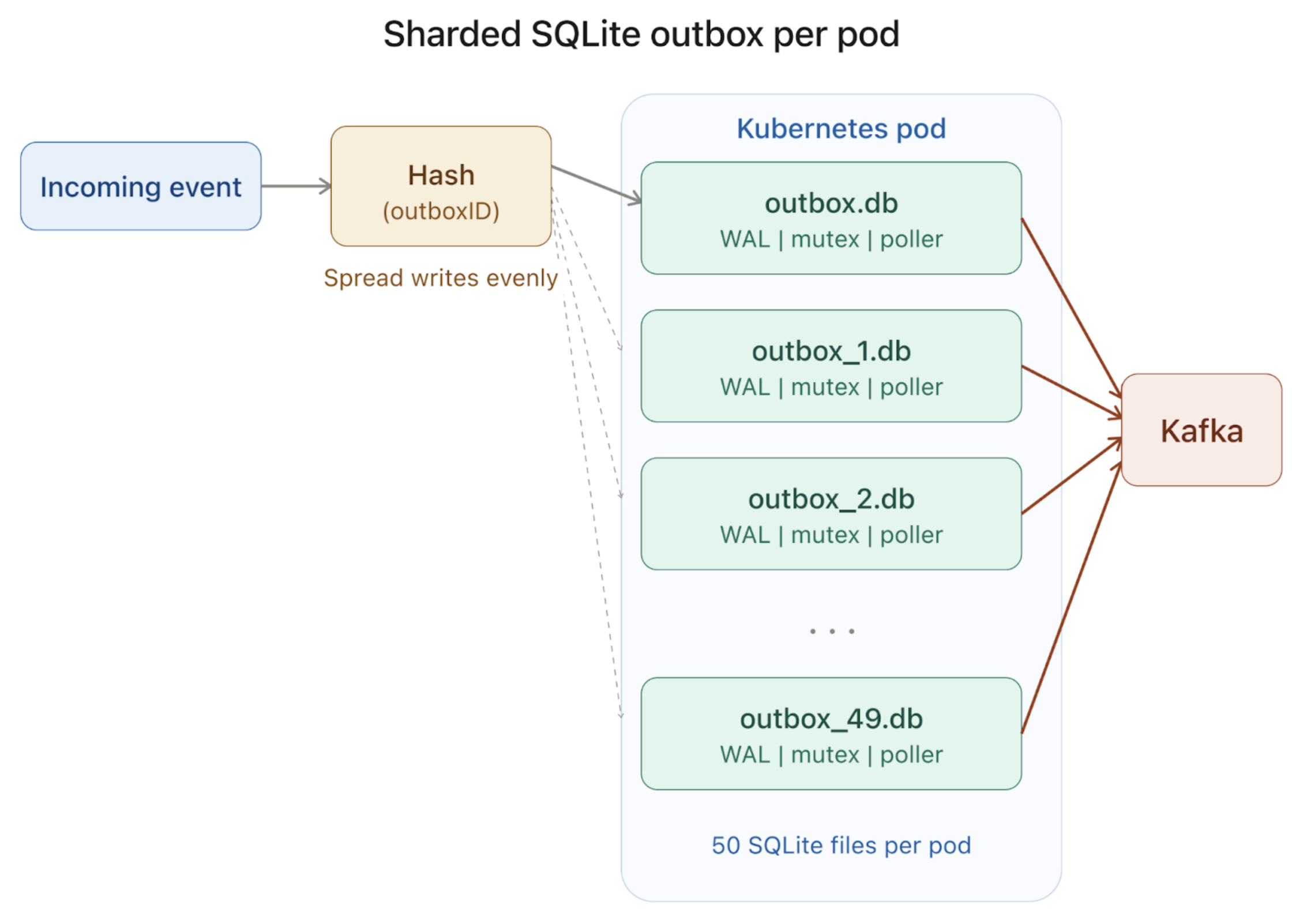

Sharded SQLite

The way you shard a SQLite database is by splitting the main database file into multiple database files. So we split our main outbox into 50 files per pod. This was about the upper limit our servers could handle at the time before CPU and memory became a problem.

Each shard got its own file, writer lock, and WAL. We used hashing to pick the shard and made sure to spread the writes and reduce collisions. We also could've used the shards to preserve ordering in the future by changing the hash function to route related events to the same shard.
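As a rough sketch of the shard selection described above: hash a routing key and take it modulo the shard count. The choice of FNV-1a, the `shardFor` and `shardPath` names, and the file naming scheme are all assumptions made for illustration; only the count of 50 shards comes from our setup.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

// numShards matches the 50 files per pod mentioned above.
const numShards = 50

// shardFor maps a routing key to a shard index in [0, shards).
// FNV-1a is an assumption; any reasonably uniform hash would do.
func shardFor(key string, shards int) int {
	h := fnv.New32a()
	h.Write([]byte(key)) // Write on a hash.Hash never returns an error
	return int(h.Sum32() % uint32(shards))
}

// shardPath builds a hypothetical file name for a shard's database.
func shardPath(dir string, shard int) string {
	return fmt.Sprintf("%s/outbox-%02d.db", dir, shard)
}

func main() {
	for _, id := range []string{"evt-1", "evt-2", "evt-3"} {
		s := shardFor(id, numShards)
		fmt.Println(id, "->", shardPath("/data", s))
	}
}
```

Hashing on the event ID spreads writes evenly. Hashing on a business key instead (an account ID, say) would send related events to the same shard, which is the ordering option mentioned above.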

Per-shard mutexes

Sharding helped a lot. But we still got SQLITE_BUSY errors because two requests could hash to the same outbox, and SQLite allows only one writer per database at a time. SQLite recommends handling this with application-level locks. So on top of the 50 shards, we also created a per-shard mutex in our service. That cleaned up the remaining write contention.
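A minimal sketch of the per-shard locking, assuming one `sync.Mutex` per shard. The `shardedOutbox` type and its counter are hypothetical; the counter stands in for the actual INSERT into the shard's SQLite file.

```go
package main

import (
	"fmt"
	"hash/fnv"
	"sync"
)

const numShards = 50

// shardedOutbox guards each shard with its own mutex, so at most one
// goroutine writes to a given shard at a time.
type shardedOutbox struct {
	locks  [numShards]sync.Mutex
	writes [numShards]int // stand-in for rows written to shard i's database
}

func shardFor(key string) int {
	h := fnv.New32a()
	h.Write([]byte(key))
	return int(h.Sum32() % numShards)
}

// Write serializes writers on the same shard. Writers that hash to
// different shards do not block each other.
func (s *shardedOutbox) Write(key string) int {
	i := shardFor(key)
	s.locks[i].Lock()
	defer s.locks[i].Unlock()
	// A real implementation would INSERT into shard i's SQLite file here.
	s.writes[i]++
	return i
}

// Total sums the per-shard counters, taking each shard's lock in turn.
func (s *shardedOutbox) Total() int {
	total := 0
	for i := range s.locks {
		s.locks[i].Lock()
		total += s.writes[i]
		s.locks[i].Unlock()
	}
	return total
}

// writeConcurrently fans writes out over several goroutines.
func writeConcurrently(s *shardedOutbox, goroutines, perGoroutine int) {
	var wg sync.WaitGroup
	for g := 0; g < goroutines; g++ {
		wg.Add(1)
		go func(g int) {
			defer wg.Done()
			for n := 0; n < perGoroutine; n++ {
				s.Write(fmt.Sprintf("evt-%d-%d", g, n))
			}
		}(g)
	}
	wg.Wait()
}

func main() {
	var s shardedOutbox
	writeConcurrently(&s, 8, 1000)
	fmt.Println("total writes:", s.Total())
}
```

With the lock held in the application, a second writer waits on the mutex instead of reaching SQLite and getting SQLITE_BUSY back.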

Tuned the database settings to keep EBS volume manageable

We ran into an issue where the EBS volume backing our local SQLite outbox kept growing under heavy traffic, so we tuned a couple of settings to keep disk usage under control.

We set journal_size_limit = 0 so that when SQLite resets the WAL after a checkpoint, it truncates the leftover WAL file down to its minimum size instead of keeping a larger file around for reuse.

Our rows were short-lived. They got written, emitted, and deleted pretty quickly under normal circumstances. But deleting rows doesn't automatically shrink a SQLite database file; the freed pages stay in the file for reuse. We used auto_vacuum=FULL to keep steady-state space usage under control for the main database files, and we also ran VACUUM on startup to rebuild and compact those database files more aggressively. Running VACUUM at startup delayed the startup time for our pods, though, so we added a startup probe path that accounted for it.

These settings helped a lot in keeping EBS usage stable under heavy writes.
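The settings above could be applied at startup along these lines. This is a sketch under assumptions: the `execer` interface, `configureShard`, and the `recorder` fake are invented for the example, and no SQLite driver is imported, so `main` only records the statements it would run. With a real driver, a `*sql.DB` or `*sql.Conn` satisfies `execer`.

```go
package main

import (
	"context"
	"database/sql"
	"fmt"
)

// execer is the subset of *sql.DB and *sql.Conn this sketch needs.
type execer interface {
	ExecContext(ctx context.Context, query string, args ...any) (sql.Result, error)
}

// startupStatements lists the settings discussed above. auto_vacuum comes
// first because switching it on only takes effect on a brand-new database
// or after a VACUUM, which is why VACUUM runs last.
var startupStatements = []string{
	"PRAGMA auto_vacuum = FULL",
	"PRAGMA journal_mode = WAL",
	"PRAGMA journal_size_limit = 0",
	"VACUUM",
}

// configureShard runs the startup statements against one shard database.
func configureShard(ctx context.Context, db execer) error {
	for _, stmt := range startupStatements {
		if _, err := db.ExecContext(ctx, stmt); err != nil {
			return fmt.Errorf("%s: %w", stmt, err)
		}
	}
	return nil
}

// recorder is a fake execer that only remembers what it was asked to run.
type recorder struct{ seen []string }

func (r *recorder) ExecContext(_ context.Context, q string, _ ...any) (sql.Result, error) {
	r.seen = append(r.seen, q)
	return nil, nil
}

// recordedStartup runs configureShard against the fake and returns the statements.
func recordedStartup() ([]string, error) {
	rec := &recorder{}
	err := configureShard(context.Background(), rec)
	return rec.seen, err
}

func main() {
	stmts, err := recordedStartup()
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, s := range stmts {
		fmt.Println(s)
	}
}
```

One caveat if you adapt this: `database/sql` pools connections, and a setting that isn't stored in the database file has to be applied on every connection, for example through the driver's connection options or by limiting the pool to a single connection per shard.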

How we fared

It served us pretty well, and these optimizations didn't take much time to test and implement. We were able to iterate fast on our approaches.

We were able to keep accepting traffic even when Kafka producer latencies were spiking, which removed a lot of operational pain. At peak, we were handling roughly fifteen thousand events per second. Our outbox could easily handle spikes in events during downstream failures, and it let us keep accepting events even during catastrophic Kafka cluster failures.

The downsides

This approach served us well in the grand scheme of things, but we did come across quite a few limitations.

Scaling up the number of outboxes wasn't easy. We used a simple modulo-based hash to choose the outbox, so changing the shard count would remap existing events to different databases. We would need to create a dedicated migration path for this.
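To make the remapping problem concrete, this sketch counts how many keys land on a different shard when the shard count changes. The hash function (FNV-1a), the synthetic keys, and the target of 64 shards are assumptions for illustration; only the modulo scheme and the starting count of 50 come from our setup.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

// shardFor is a simple modulo-based shard picker.
func shardFor(key string, shards uint32) uint32 {
	h := fnv.New32a()
	h.Write([]byte(key))
	return h.Sum32() % shards
}

// countMoved reports how many of n synthetic keys map to a different
// shard index after the shard count changes from oldShards to newShards.
func countMoved(n int, oldShards, newShards uint32) int {
	moved := 0
	for i := 0; i < n; i++ {
		key := fmt.Sprintf("event-%d", i)
		if shardFor(key, oldShards) != shardFor(key, newShards) {
			moved++
		}
	}
	return moved
}

func main() {
	const n = 10000
	fmt.Printf("50 -> 64 shards: %d of %d keys change shard\n", countMoved(n, 50, 64), n)
}
```

A key keeps its shard only when its hash gives the same remainder for both counts. For a uniformly distributed hash and a change from 50 to 64, that holds for about 1 in 32 values, so nearly every pending row would sit in the "wrong" file after a resize. Consistent hashing reduces this churn, but it still needs a migration step for rows already on disk.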

We were coupled to the limitations of StatefulSet pods. That meant slower deployments and availability zone constraints. It also meant we couldn't quickly scale up the pods when there were sudden bursts of traffic.

We also saw slower recovery after incidents where the outboxes built up a heavy backlog. When pods crashed or restarted, startup VACUUM had to work through much larger database files.

We also had to use synchronous Kafka producer calls on the emit path instead of asynchronous ones, because we needed guarantees from Kafka before deleting events from the outbox.

The local EBS volume was one of the main failure points in this design. Any failures or latency spikes could cause our application to become unstable, and the outbox data on that volume could be at risk.

This risk is still present, albeit reduced, even if you're using the highest tiers of EBS storage from AWS. But thankfully, we haven't encountered any reliability issues with our EBS volume so far.

The path forward

Apart from the downsides I mentioned earlier, another reason that we're moving away from this approach is that our requirements around latency and deployments have changed, and our fallback infrastructure around S3 and SQS has become much more robust. In our new sidecar mode, we have another durability path for Kafka failures, where failed events are written to S3 and an SQS message referencing the S3 object is enqueued, so they can be replayed later.

This fits our use case better. It removes the local SQLite write from the hot emit path, avoids the EBS and StatefulSet overhead that came with the outbox, and leans on our replay and redrive infrastructure built around S3 and SQS that we already trust operationally.
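The fallback path described above can be sketched like this. Everything here is hypothetical: `Producer`, `ObjectStore`, and `Queue` are invented interfaces standing in for Kafka, S3, and SQS, the `failed-events/` key prefix is made up, and the in-memory fakes exist only so the example runs without any AWS dependency.

```go
package main

import (
	"errors"
	"fmt"
)

// Hypothetical interfaces standing in for Kafka, S3, and SQS clients.
type Producer interface {
	Send(key string, payload []byte) error
}
type ObjectStore interface {
	Put(objectKey string, body []byte) error
}
type Queue interface {
	Enqueue(message string) error
}

// Emit tries Kafka first. If that fails, it stores the payload in the
// object store and enqueues a message that references the stored object,
// so the event can be replayed later.
func Emit(p Producer, s ObjectStore, q Queue, eventID, key string, payload []byte) error {
	if err := p.Send(key, payload); err == nil {
		return nil
	}
	objectKey := "failed-events/" + eventID // assumed naming scheme
	if err := s.Put(objectKey, payload); err != nil {
		return fmt.Errorf("store fallback payload: %w", err)
	}
	if err := q.Enqueue(objectKey); err != nil {
		return fmt.Errorf("enqueue replay pointer: %w", err)
	}
	return nil
}

// In-memory fakes used to exercise the fallback path.
type downProducer struct{}

func (downProducer) Send(string, []byte) error { return errors.New("kafka unavailable") }

type memStore struct{ objects map[string][]byte }

func (m *memStore) Put(k string, b []byte) error { m.objects[k] = b; return nil }

type memQueue struct{ messages []string }

func (m *memQueue) Enqueue(msg string) error { m.messages = append(m.messages, msg); return nil }

func main() {
	store := &memStore{objects: map[string][]byte{}}
	queue := &memQueue{}
	err := Emit(downProducer{}, store, queue, "evt-1", "account-42", []byte("payload"))
	fmt.Println("emit error:", err)
	fmt.Println("queued for replay:", queue.messages)
}
```

The object is written before the queue message, so a replay worker never picks up a pointer to something that isn't there yet. A real message would likely also carry the routing key and topic needed to re-emit the event.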

Acknowledgments

I want to thank Sarah Story, Aaron Kosel, Lizzy Nibali, Brian Corbin, Mahsa Khoshab, and Charan Mahesan for their contributions to this effort.