It's not uncommon for a business to invest in analytics, only to find that it's still difficult to get people to use data in their daily workflows. It doesn't matter which analytics and business intelligence (BI) tools you buy. Bold, colorful charts and visualizations don't always make much of a difference.

You can only solve the data adoption problem when you understand why people don't want to use data in their day-to-day workflows. And while there's no shortage of reasons why people steer clear of data, I've noticed that there are three common blockers that come up time and time again:

People can't find the right data.

People don't know how (and why) metrics are defined.

Working with data is too manual, time-consuming, and error-prone.

Let's take a closer look at each problem, plus some potential solutions.

Problem #1: People can't find the right data

"Hey! Sorry to bother you...(again) for this 😬 I can't find the right ticket data!"

I've sent many Slack messages like this one while searching for data, with varying degrees of desperation in my requests. As the VP of BizOps and then VP of Customer for a real estate technology company, I was responsible for reporting on my team's performance and how it impacted the company's finances.

I often needed data for quarterly board meetings, weekly business reviews, and daily decision-making, but getting access to it was rarely straightforward. That's just one example from my personal experience, but I've heard hundreds of similar stories.

When tracking down the correct data is a battle, people aren't likely to adopt it into their daily workflows. They'll cobble something together for big meetings, but only when necessary.

Solution: Remove data silos

There are a few ways to approach this problem.

Most often, companies will try BI tools like Tableau, Looker, or Mode. Each tool has its pros and cons. For example:

Tableau is user-friendly but expensive

Looker is better for technical people but requires a heavy lift to implement

Mode is cheap and simple but requires strong SQL skills to be effective

Unfortunately, BI tools and the dashboards, charts, and graphs they generate often contain more style than substance. Answering questions still requires manual work, like downloading files and copying/pasting/combining data into a new spreadsheet for further analysis.

Data catalogs, which include information like metrics definitions, business logic, technical metadata, and operational metadata, are another option. But they're designed for data teams and can be overkill for business teams and individual users. So instead of approaching data adoption from a tool-first perspective, your first order of business should be to reduce data silos and get organized.

Data silos occur when data availability isn't uniform across a company or department. If Marketing doesn't have access to sales data, a data silo develops and makes it difficult for the marketing team to measure the performance of their campaigns.

Fixing data silos is a big project. This guide from Segment breaks it all down and provides a roadmap you can follow: How to Fix Data Silos & Unlock Your Data's Full Value.

Problem #2: People don't know how (and why) metrics are defined

"Wait, how do we define 'Active Users'? Did the definition change recently?"

When people can't keep up with ever-changing metrics definitions and the underlying business logic that supports those definitions, they're not going to use data as often as they should.

Data catalogs can help teams keep metrics definitions and supporting logic well organized and documented. But, again, they're overkill for non-data teams. To get Marketing, Sales, Customer Success, and other departments to use data more often, take a more holistic approach.

Solution: Help people understand the "why"

Often, misunderstood metrics definitions result from poor organization and a lack of background knowledge.

Once again, data catalogs theoretically solve this problem: they centralize metrics definitions and the data points that feed into those definitions. But unless you have a data team running the show, they're still too much for most use cases. Instead of data catalogs, many companies opt for tools like Notion and Confluence to manage changing definitions. Keep in mind that this approach requires manual version control to avoid obsolete documentation.

Once your metrics definitions are organized, and people have easy access to updated information, you need to show them why metrics are defined in a specific way. Share the underlying business logic that supports how a metric is defined; that way, people have context.
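For teams that keep metric logic in code, one way to keep the "why" next to the "what" is to store the rationale and a changelog alongside the calculation itself. Below is a minimal Python sketch of that idea. Everything in it is hypothetical and used only for illustration: the `MetricDefinition` structure, the 28-day window, and the set of qualifying actions are assumptions, not a recommended standard. The changelog entry speaks directly to the "Did the definition change recently?" question.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class MetricDefinition:
    """A metric definition stored together with the reasoning behind it (hypothetical structure)."""
    name: str
    version: str
    effective_from: date
    definition: str
    rationale: str        # the "why": business logic that explains the definition
    changelog: tuple = ()  # earlier versions, so people can see what changed


# Hypothetical example: the window length and qualifying actions are assumptions.
ACTIVE_USERS = MetricDefinition(
    name="Active Users",
    version="2.0",
    effective_from=date(2024, 1, 1),
    definition="Distinct users with at least one qualifying action in the trailing 28 days.",
    rationale="Logins alone overstated engagement, so only value-creating actions count.",
    changelog=("1.0: any login in the trailing 30 days",),
)

# Hypothetical set of actions that count toward "active".
QUALIFYING_ACTIONS = {"create_report", "share_report", "comment"}


def count_active_users(events, as_of, window_days=28):
    """Count distinct users with a qualifying action in the window (as_of - window_days, as_of].

    `events` is an iterable of (user, action, day) tuples.
    """
    start = as_of - timedelta(days=window_days)
    return len({
        user
        for user, action, day in events
        if action in QUALIFYING_ACTIONS and start < day <= as_of
    })
```

For example, with events `[("ana", "login", date(2024, 3, 1)), ("ana", "create_report", date(2024, 3, 10)), ("ben", "comment", date(2024, 1, 15)), ("cy", "share_report", date(2024, 3, 27))]`, calling `count_active_users(events, date(2024, 3, 28))` counts Ana and Cy but not Ben, whose only action falls outside the window. Ana's login alone would not have counted under version 2.0, and the rationale field records why.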

People might also need extra education to become more comfortable working with data. I've seen companies with data teams succeed with "office hours," where business users are encouraged to ask questions and learn from the data team. The team at Zapier offers that, and also has a "Golden Path to Data," an internal course that helps non-data folks better understand how to work with data.

If you don't have a data team, spend some time creating learning resources—even a roundup of articles, eBooks, and other materials can give data newbies some initial guidance. For example, if I were building a syllabus of data-related resources for a marketing team, I'd include resources like:

My Journey Toward Becoming A More Quantitative Marketer. From Janessa Lantz, the VP of Marketing at dbt Labs. It's a great article about why it pays to become data-driven in marketing.

Content Marketing Metrics: 23 Metrics to Track Success. From Alex Birkett, co-founder of Omniscient Digital. This roundup includes detailed information about some essential content marketing metrics.

How to design a winning metrics framework. I wrote this guide to building a metrics framework—any function can use this approach to figure out which metrics they should focus on moving.

Problem #3: Working with data is too manual, time-consuming, and error-prone

"Ugh, the CSV import to the weekly leadership meeting template broke again."

If you're like me, you've also spent countless hours on manual data processes like copying/pasting data between spreadsheets and fixing CSV imports. Because the pipeline between your data sources and your data's final destination (often a spreadsheet or other document) is broken, tons of manual work is needed to get usable data.

Unfortunately, manual processes are frequently the "best" solution available for people who want to work with data. Beyond being frustrating, those processes waste precious time and create the perfect breeding ground for mistakes. Some people will power through these roadblocks. Others will "go rogue" and build their own data processes (with varying degrees of efficacy and accuracy). And others will give up because they don't have time to fight with data.

Fortunately, there are plenty of ways to streamline and automate the path between your data sources and the places where people want to consume data.

Solution: Refine your data source → data destination pipeline

At past startups where I've worked, my colleagues and I usually preferred doing our data analyses in collaborative tools like Google Sheets, Google Docs, Notion, and Airtable. Because getting data into those tools was manual, time-consuming, and error-prone, low data adoption rates were the norm. If I could have automated the journey between our data sources (e.g., HubSpot, Salesforce, Zendesk) and our preferred destinations like Sheets, data adoption would have increased.

If employees in your organization frequently rely on manual processes to get data into their preferred tools, here are some potential ways to automate things to make it easier for teams to access and analyze data:

Reverse ETL tools, like Census and Hightouch, push cloud warehouse data to various third-party business tools and applications, such as HubSpot, Salesforce, Zendesk, Google Sheets, Notion, and others. Reverse ETL is great for larger data sets but not the most efficient solution for smaller use cases. Additionally, the user interfaces of reverse ETL tools aren't great for non-technical users, so these tools require heavy assistance from a data team (or technically savvy team members). But if you work somewhere with the appropriate resources to set up reverse ETL, it can be a viable way to make it easier for people to work with data.

Python scripts allow you to build a pipeline that automatically refreshes operating documents (like a Google Sheet) with new data. This approach is highly customizable, so you can design the pipeline around your users' data-related needs. It can be a powerful and reliable option for some companies, but it does require ongoing maintenance and data engineering resources.

No-code automation tools like Zapier allow you to transfer data from one app to another as well as set triggers to automatically send data between apps—that way, your data will always be wherever people spend their time.

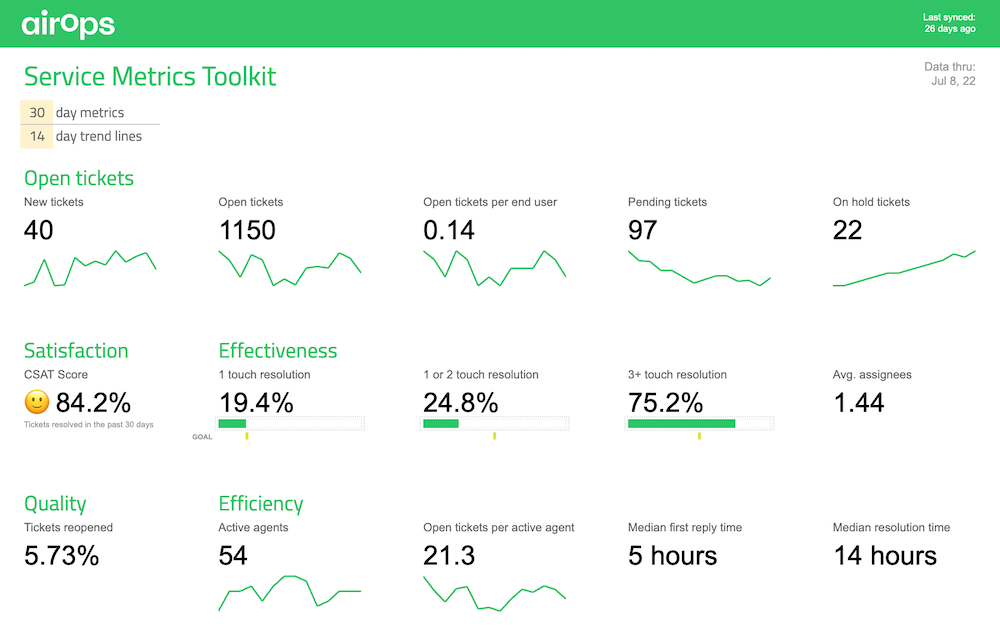

AI-assisted data tools are relatively new and constantly evolving, so I expect we'll see lots of innovation in this space in the coming months and years. There are tools for various use cases, including tools that sync data between sources and destinations. One tool in this category is AirOps Workspace (disclaimer: I'm one of the co-founders of AirOps). People in sales, marketing, finance, and other data-heavy functions can use Workspace to access important data and quickly set up automatic syncs into everyday tools like Google Sheets, Google Docs, Notion, and Airtable. Here's an example of an AirOps-powered Google Sheet template that automatically updates with data for a customer service team.
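To make the Python-script option described above more concrete, here is a minimal sketch of the source → transform → destination pattern. It is not how AirOps or any other product works. Several assumptions apply: `fetch_tickets` is a hypothetical stand-in that returns placeholder rows where a real script would call a help-desk API, and the script writes a CSV file as a stand-in for a Google Sheet, because pushing to Sheets would require Google's API client and credentials, which are not shown here.

```python
import csv
from collections import Counter
from datetime import date
from pathlib import Path


def fetch_tickets():
    """Hypothetical stand-in for a source system such as a help-desk export.

    A real script would call the vendor's API here; these rows are placeholders.
    """
    return [
        {"id": 1, "status": "solved", "opened": date(2024, 3, 4)},
        {"id": 2, "status": "open", "opened": date(2024, 3, 5)},
        {"id": 3, "status": "solved", "opened": date(2024, 3, 12)},
    ]


def summarize_by_week(tickets):
    """Count tickets per (ISO week, status) pair, sorted for stable output."""
    counts = Counter()
    for ticket in tickets:
        year, week, _ = ticket["opened"].isocalendar()
        counts[(f"{year}-W{week:02d}", ticket["status"])] += 1
    return sorted(counts.items())


def refresh_report(path):
    """Overwrite the destination file so it always holds the latest numbers.

    Returns the number of data rows written (excluding the header).
    """
    rows = summarize_by_week(fetch_tickets())
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["week", "status", "tickets"])
        for (week, status), n in rows:
            writer.writerow([week, status, n])
    return len(rows)


if __name__ == "__main__":
    refresh_report(Path("weekly_ticket_report.csv"))
```

Run on a schedule (for example, with cron), a script like this overwrites the report so it always reflects the latest source data, which removes the copy/paste step. The maintenance cost mentioned above shows up as soon as the source API, its fields, or the destination format changes.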

Data adoption is an ongoing process

Changing human behavior is tricky. In all likelihood, getting your team to use data more frequently will require some trial and error and a mix of different approaches. So if data adoption is a problem in your organization, take some time to understand exactly why before making any sweeping changes or big investments in new data tools.

Once you understand the origins of the problem—whether it's one of the three problems listed above or a completely new data-related issue—you can decide which solution is best.
