Tools powered by artificial intelligence (AI) are everywhere now. Google recently added AI to Gmail, Google Docs, and Google Search. Microsoft has added AI to Bing, Word, Excel, and its other Office apps. Even Apple is quietly adding AI-powered features to its latest iPhones. And that's before we even consider AI-specific apps, like OpenAI's ChatGPT.

While all these tools and apps are incredibly impressive, very few of them live up to the sci-fi expectations of an AI. ChatGPT certainly isn't refusing to open the pod bay doors or hunting down Sarah Connor any time soon.

So let's look at the difference between the limited AIs we have now and what's perhaps the end goal of AI research: artificial general intelligence.

What is AI?

Let's start with the big question: what is artificial intelligence?

Unfortunately, it's not a simple question to answer. I've written a whole AI deep dive here at Zapier, and the simple fact is that no one really agrees. There are multiple definitions, ranging from "a poor choice of words in 1955" to "machines that can learn, reason, and act for themselves."

The big issue is the word "intelligence." "Artificial" is easy to define, but psychologists still haven't managed to agree on what constitutes intelligence in humans, let alone in computers.

It also doesn't help that what is and isn't considered AI is constantly changing. The AI effect (or Tesler's Theorem) is the idea that "AI is whatever hasn't been done yet." A decade ago, it was thought that a computer needed some level of artificial intelligence to read written text; now, it's built into the camera app of your smartphone. Deep Blue was the embodiment of AI when it beat Garry Kasparov in 1997; now, the best grandmasters couldn't beat a smart watch, and no one thinks their Apple Watch is artificially intelligent. Even AI chatbots are starting to feel less impressive as people discover their limits.

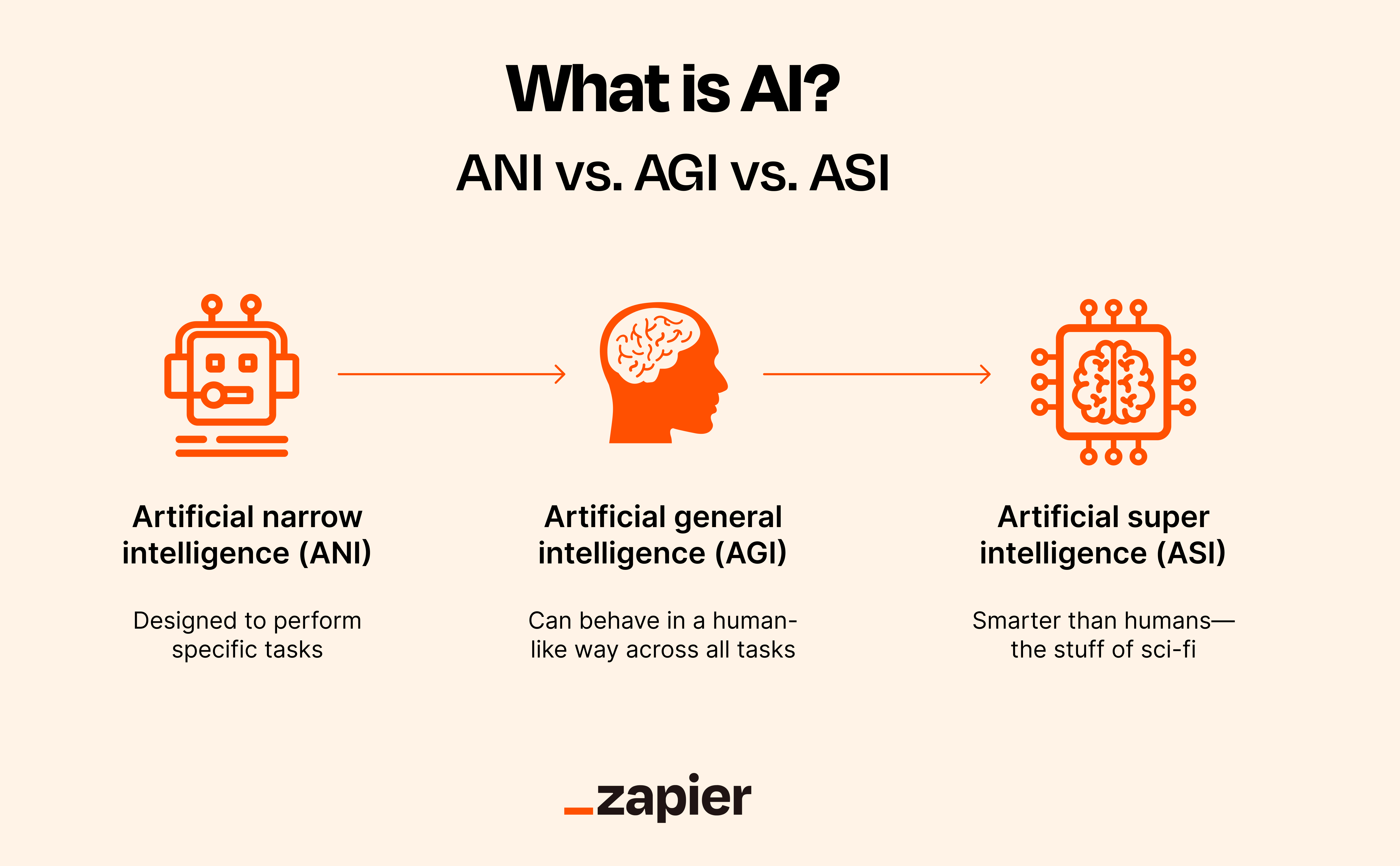

So, instead of working with a wildly wishy-washy definition of AI, let's work with three slightly less wishy-washy definitions:

What is artificial narrow intelligence (ANI)?

Artificial narrow intelligence (sometimes called weak AI, but that really gets into the semantics of AI research) is an AI system that's designed to perform specific tasks. While there will always be disagreement about what exactly constitutes AI and where the bar is set, let's look at some of the things that are near-universally considered ANIs at the moment.

ChatGPT and other AI-powered chatbots

Although incredibly impressive, ChatGPT and other AI chatbots like Bard are still narrow AIs. While they can field and respond to a huge variety of questions or prompts, that's their specific task. You can't, for example, ask ChatGPT to give you directions to the nearest shop, even if you can ask it to write a poem about Walmart.

GPT-4 and other LLMs

More broadly, GPT-4 and the other large language models (LLMs) that underlie AI-powered chatbots are also ANIs. While they're able to generate surprisingly good written text, they don't truly understand language.

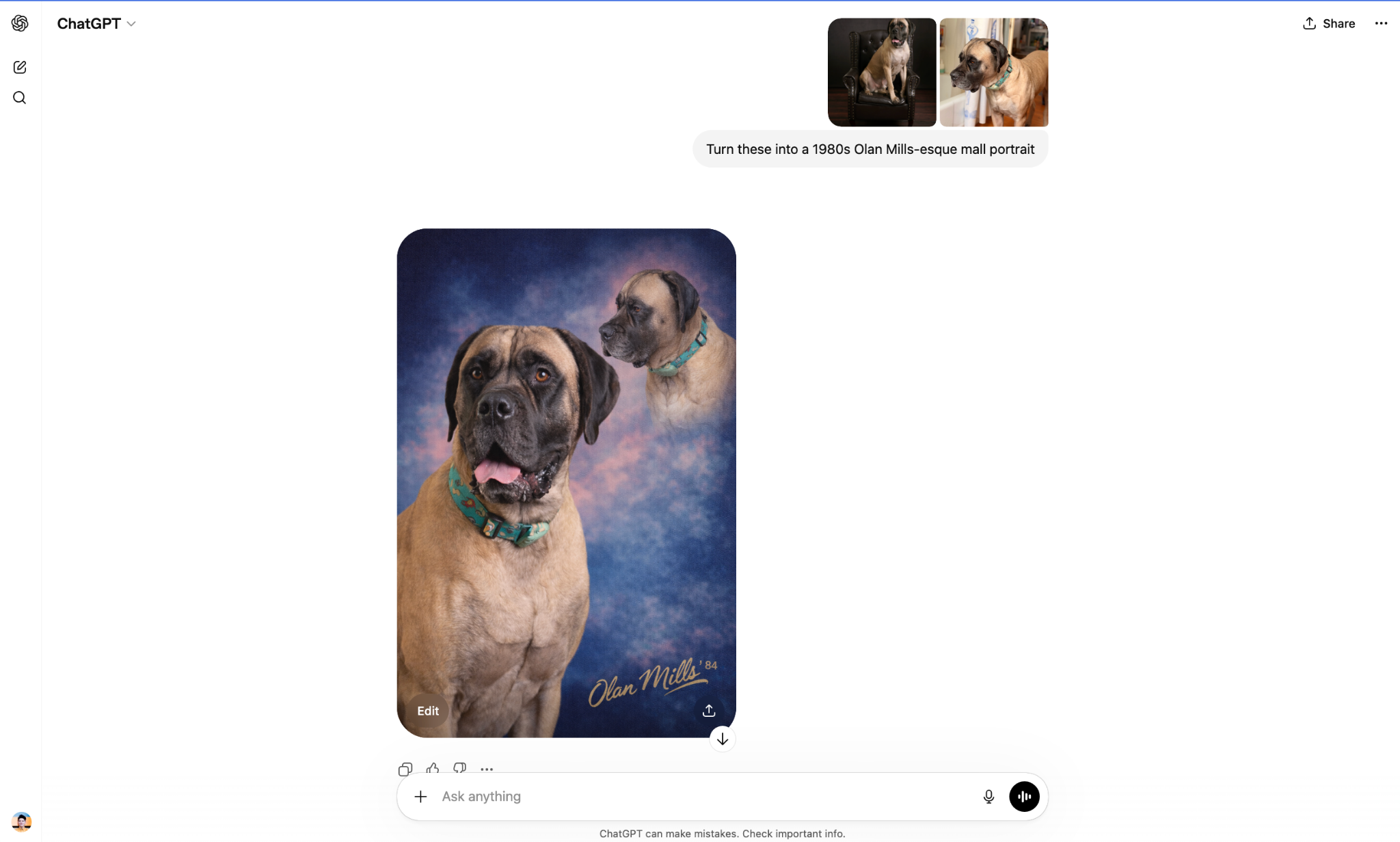

DALL·E 2 and other text-to-image generators

DALL·E 2 and other image-generating AIs are also ANIs. They rely on natural language processing (NLP) to interpret your prompt and on a generative image model to turn it into an image.

Self-driving cars

A self-driving car is perhaps the best example of an ANI, as its specific purpose (safely controlling a car) is so well-defined and so near-universally accepted as something that requires intelligence to do.

Alexa, Siri, and other voice assistants

Similarly, voice assistants like Alexa and Siri are good examples of ANIs. While they can have a broad range of functions around your home, they're really just designed to answer questions, set timers, and turn your smart lights on and off.

Advanced algorithms used in many different fields

Recommendation algorithms, financial trading algorithms, and other incredibly complex algorithms created using machine learning or relying on neural networks generally meet the definition of an ANI. For example, Netflix's recommendation algorithm easily clears the bar.

What is artificial general intelligence (AGI)?

An artificial general intelligence (AGI), or strong AI, is an AI that exhibits human-like intelligence (or is "generally smarter than humans"). What this really means is up for debate, but it's generally taken to be something a lot more sci-fi than we have now. It's not trained for specific tasks; instead, it's able to do near enough anything it's asked to do.

While some people argue that ChatGPT shows early signs of being an AGI, most researchers don't agree. What is true is that ChatGPT is far better at understanding and generating text than anyone had reason to assume a pre-trained transformer model would be. It's exceptional and has the potential to be really useful, but I feel it's a stretch to call it AGI.

My favorite definition of AGI is Apple co-founder Steve Wozniak's coffee test: a true AGI would be able to enter a typical American home and work out how to make coffee. It could find the coffee machine, figure out how to add the coffee and water (and from where), select the correct settings, and serve it in a mug. Other people have proposed that an AGI would be able to act like a college student: "to enroll in a human university and take classes in the same way as humans, and get its degree." Or that it would be able to replace most humans in most jobs.

What all these definitions are trying to do is to fully capture the "human-like" aspect of intelligence. It's not just about a computer being able to identify the correct course of action in a new situation, identify objects from a distance, or remember important details about something. It's about being able to do all of those things without needing to be reprogrammed or retrained.

The G is the key: AGIs have to be generally intelligent—whatever that means.

What is artificial super intelligence (ASI)?

We've already seen that most AI terms are poorly defined, and there's some debate as to whether AGIs are meant to be as smart as humans or smarter than humans. That's where artificial super intelligence (ASI) comes in.

Strangely, these are almost the easiest to define. ASIs are AI systems that far outstrip human intelligence. They're your sentient sci-fi supercomputers. We're nowhere near developing one yet, and I'm sure there will be huge amounts of debate about what counts as an AGI and what counts as an ASI when we get closer to it, but for now, I don't have to entertain it—and neither do you.

When will we get AGI?

Depending on who you listen to, we already have AGIs, we will never get AGIs, or AGIs are coming in the next few weeks/months/years/decades. No one really agrees on a definition, though a lot of very smart people are trying to build one.

The term AGI is so prevalent now because tools like ChatGPT are forcing us to reconsider our definitions of AI. The latest LLMs can arguably pass the Turing test, one of the earliest attempts to define what it would mean for a computer to think. But for all their power, ChatGPT and company are still miles away from a sci-fi AI like Star Trek's Data.

So maybe that's my proposed definition: an AGI is an AI capable of being a character in a space opera.
