Research Report

The AI transformation framework

A data-backed guide to scaling enterprise AI adoption

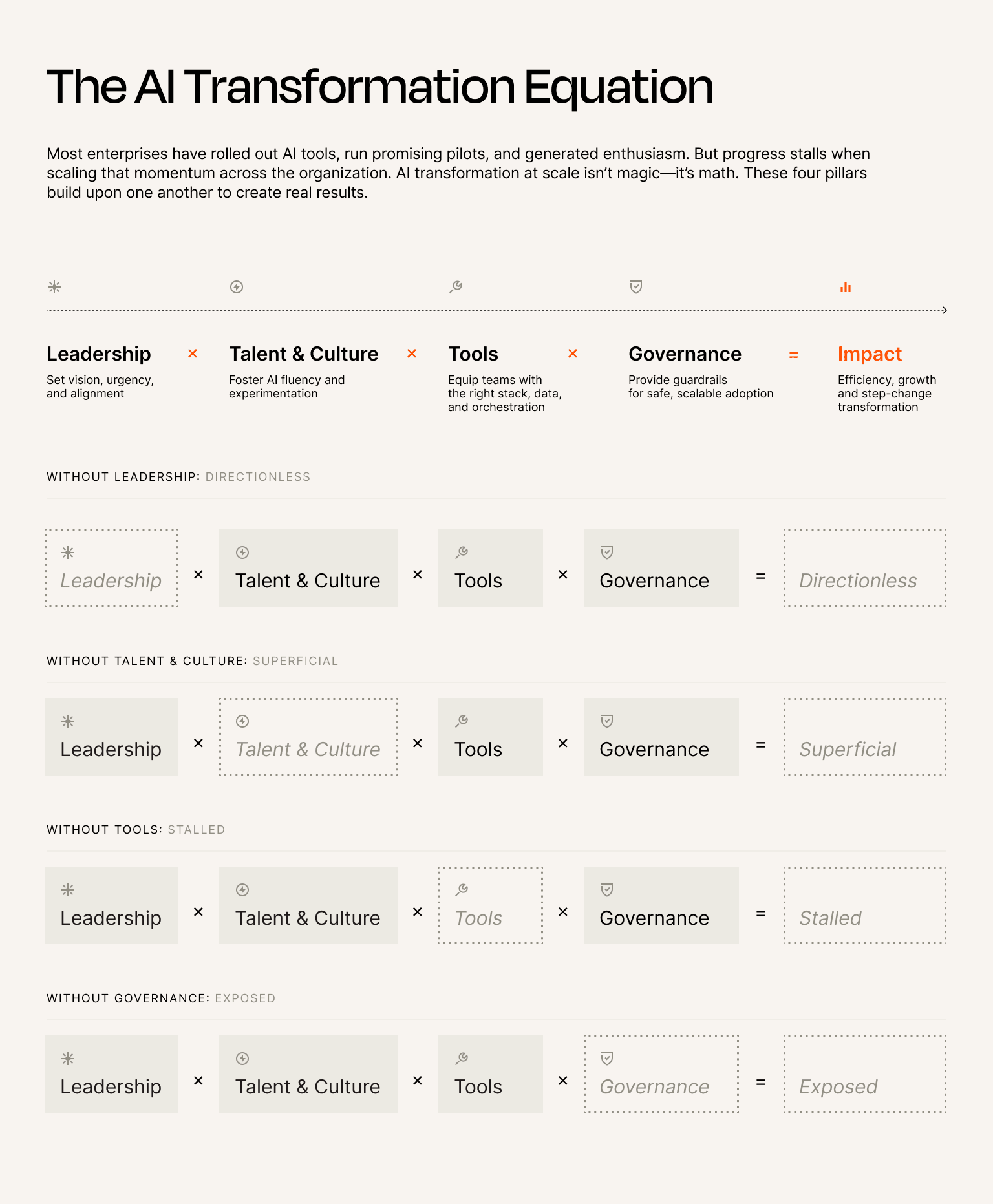

The equation

AI transformation at scale isn’t magic. It’s math. Four essential components (leadership, talent and culture, tools, and governance) multiply to create measurable impact.

Each component builds upon the last, and the result is high-impact AI ROI. Remove one, and the entire equation collapses.

Leadership that fails to establish an AI culture will likely issue mandates no one follows. Culture without tools produces enthusiasm without execution. Tools without governance breed shadow AI and potential compliance nightmares. Governance without leadership becomes bureaucracy that kills momentum.
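As an illustration only (the report does not give a formula, and scoring each component from 0 to 1 is an assumption), the multiplicative relationship can be written with L for leadership, C for talent and culture, T for tools, and G for governance:

```latex
\text{AI impact} = L \times C \times T \times G, \qquad L,\, C,\, T,\, G \in [0, 1]
```

Because the factors multiply rather than add, a zero anywhere zeroes the result: if G = 0, impact is 0 no matter how strong L, C, and T are. A weak factor also drags everything down. Scores of 0.9, 0.9, 0.9, and 0.2 give a product of about 0.15, while a simple average of the same scores would be about 0.73.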

Transformation happens in loops

The most successful teams advance all four pillars together, progressing through stages of maturity:

Mobilize

Activate

Amplify

Sustain

Organizations start by mobilizing, building the foundation by setting a vision, fostering psychological safety, removing barriers, and establishing clear guidelines.

They activate by scaling intentionally—naming accountable leaders, advocating for AI fluency, monitoring tool usage, and formalizing governance policies.

They amplify by optimizing and expanding: holding leaders accountable, staffing AI architects, consolidating tools, and embedding governance infrastructure.

Finally, they sustain transformation by making it permanent: embedding AI into core operations, updating compensation models, creating shared infrastructure, and integrating governance into board reporting.

So, whether you’re just starting or already deep into your AI journey, the equation remains the same. Get all four right, and AI becomes your competitive advantage.

Chapter 1

Leadership: Set vision, urgency, and alignment

Why this matters

26%

of leaders said more than half of their AI pilots scaled to production

83%

of leaders describe their AI execution goals as “realistic” or “ambitious-but-achievable”

Without dedicated leadership spearheading the charge, AI efforts are often scattered across disconnected experiments and never reach production. Teams launch pilots that show promise, then watch them die in procurement limbo or integration purgatory. Developers build brilliant prototypes that never leave the sandbox. Product managers identify transformative use cases that languish in the backlog. Not because the ideas were bad, but because no one with authority and budget made it their job to push them through.

The data from our recent research report reveals a striking confidence gap: only 26% of leaders said more than half of their AI pilots scaled to production, yet 83% describe their AI execution goals as “realistic” or “ambitious-but-achievable.”

In other words, leaders believe scaling is possible—they see peers doing it, they understand the potential, and they’ve approved the business cases. They just haven’t built the infrastructure to turn possibility into reality.

This gap exists because pilot-stage AI projects require different leadership than production-stage AI. Pilots need permission and a budget. Production needs ownership and accountability.

Leading through AI

Top executives from Zapier, NerdWallet, Webflow, and DoorDash share how they're turning AI mandates into real business transformation. At our upcoming webinar, learn how leaders use Zapier's AI Transformation Framework to create urgency, build AI roadmaps, upskill teams, and scale AI efforts across the enterprise.

What great AI leadership looks like

Mobilize

Establish a CEO-level call to action

Activate

Name an accountable leader and prioritize strategic bets

Amplify

Hold leaders accountable for learnings and outcomes

Sustain

Embed AI into core operations

Mobilize: Establish a CEO-level call to action

Before a leader approves a budget, they must articulate why AI matters. When a CEO stands up and clearly explains what the organization is building, why it matters to the business, and why the timing is urgent, something shifts.

Leadership isn’t just about giving teams permission to experiment; it's about creating urgency without panic. Every employee should be able to articulate why AI isn’t optional for your organization—not because they memorized talking points, but because they genuinely understand the stakes.

When GPT-4 launched in March 2023, Zapier leadership realized the pace of AI improvement would inevitably lead to disruption. It could write API docs, generate working code, summarize tickets, and do real knowledge work—it was a wake-up call.

So, we issued Zapier's first-ever "Code Red." Not because we had all the answers, but because we knew standing still wasn't an option. We told the truth: AI was here, and it was going to change everything. That message wasn’t universally popular. But it was necessary to meet the moment—and we wanted the entire organization focused on that.

When teams understand purpose, they know why they need to push through obstacles. Without a clear “why now,” AI becomes another initiative competing for attention—something to fit in if there’s time.

GPT-4 can read and write code—and specifically API documentation. This has massive implications for Zapier and the entire software world. AI will disrupt every industry. Zapier is now in a race to find our footing in an AI-first world.

Wade Foster, CEO and Co-founder, in our AI Code Red announcement

Activate: Name an accountable leader and prioritize strategic bets

The difference between AI initiatives that scale and those that stall often comes down to ownership. Our AI execution gap report revealed that most leaders (81%) say they can move from pilot to scale within 12 months, yet 91% of practitioners encounter frequent pauses after pilots launch. That’s not a technology gap; it’s a leadership gap.

Success requires naming an AI transformation leader with actual authority to prioritize and allocate resources. Not a committee. Not a working group that meets monthly—a single individual who is responsible for AI transformation and has the power to make it happen. This leader should be able to:

Prioritize “bets” based on anticipated value creation rather than politics

Fund headcount plus services and tooling, not just pilots

Allocate budget for the unsexy-but-essential scaling infrastructure: data pipelines, integration work, and governance frameworks

Strategic AI users have figured this out. They’re nearly 2X more likely to dedicate 50%+ of their technology budgets to improving AI execution rather than just launching pilots. They’ve learned that the expensive part isn’t building the pilot—it’s building the infrastructure that makes scaling possible.

I think it's going to be really hard for a company to make an AI transformation if the CEO and CFO, founder, executive team, if they're not just using it all the time.

Amplify: Hold leaders accountable for learnings and outcomes

As transformation matures, accountability shifts from “did you try?” to “what did you learn?” to “what impact did you deliver?” Leaders at this stage manage J-curve expectations, acknowledging that productivity may dip before it improves. They build two-week retrospectives into every phase, treating each milestone as a chance to course-correct rather than a deadline to hit. They celebrate “fast failures” that generate learning, and they track progress against clear success metrics.

The data tells an interesting story: 78% of practitioners view leadership timelines as realistic, but 75% believe leaders underestimate the difficulty of execution. Teams aren’t saying the timeline is wrong—they’re saying the path is harder than leadership expects. That’s why retrospectives matter. That’s why celebrating fast failures matters. Learning compounds faster than perfection.

We are in the middle of this absolutely transformative platform shift. The best analogy I can think of is when mobile took off. And there were companies that sort of dabbled in mobile, and there were companies that bet on it. And the companies that really bet on mobile just saw this decisive new market opening for them…The ones who dabbled kind of didn’t get that far.

Sustain: Embed AI into core operations

You can consider your AI transformation self-sustaining when you embed it into how the business actually operates: strategy sessions where AI capabilities shape what’s possible, budgeting and planning where AI investment is standard rather than exceptional, governance reviews where AI risk is monitored alongside other enterprise risks, and talent planning where AI fluency is a core competency.

At this stage, every department head is accountable for AI impact in their domain. AI review becomes a standing agenda item in leadership meetings—not as a special project update, but as routine business. Transformation leaders shift from executing AI initiatives directly to providing the infrastructure that enables others to execute. When AI stops being a “program” and evolves into how work gets done, you’ve reached sustainability.

Leadership self-assessment

Do we have an executive sponsor who can dedicate 10%+ time to AI progress?

Can every employee articulate why AI matters to our business and why now?

Have we named an accountable leader with authority to prioritize and allocate resources?

Is our budget allocated for scaling infrastructure, not just pilots?

Next step: If you answered no to two or more, book time with one of our AI transformation experts to build leadership alignment.

Chapter 2

Talent and Culture: Foster AI fluency and experimentation

Why this matters

Without talent that knows how to use AI effectively—and a culture that makes space for hands-on experimentation—AI adoption stays superficial. Lots of licenses, little transformation. The tools sit idle while employees drown in busywork they could automate.

The data is stark: Our research shows that 95% of practitioners often report firefighting execution issues rather than making forward progress, and only 22% of organizations are “consistently proactive” with AI execution. This isn’t a tool problem—it’s a culture problem. When firefighting becomes the default mode, experimentation dies. There’s no space to learn new tools when you’re underwater fixing day-to-day issues.

95%

report firefighting execution issues rather than making forward progress

22%

of organizations are “consistently proactive” with AI execution

Breaking this cycle requires more than good intentions. It requires structural changes that protect time for experimentation, especially when teams feel busy.

What great AI-first talent and culture look like

Mobilize

Build a culture of psychological safety and experimentation

Activate

Boost AI fluency with hands-on learning

Amplify

Select internal AI experts, then redesign roles and teams

Sustain

Update staffing ratios, incentives, and compensation

Mobilize: Build a culture of psychological safety and experimentation

Transformation begins with culture, specifically a culture where it’s safe to try new things, give and receive feedback, and experiment without fear of career consequences. Organizations at this stage identify early adopters and actively encourage experimentation.

This isn’t soft work—it’s foundational. Without psychological safety, people won't take risks. With it, they push boundaries. The difference compounds quickly.

I would say the number one difference that I see is a culture of experimentation and the willingness to tinker and play around with stuff. The companies that are actually seeing the most aggregate value created by AI are those that encourage this experimentation from every part of the business.

Activate: Boost AI fluency with hands-on learning

Webinars and broadly focused courses don’t build fluency. Teams learn how to use AI by using AI. The data confirms this: peer learning and hackathons rank as the most effective upskilling methods, cited by roughly 30% of both leaders and practitioners. Courses and certifications? They barely register.

Winning organizations create weekly two-hour “build blocks” where teams experiment with AI to tackle real work: not hypothetical use cases, but actual tasks from their day-to-day jobs. They also host peer learning sessions where employees share what they’ve built, and they maintain “show your workflow” Slack channels with 100+ examples that anyone can copy and adapt.

Most importantly, they embed AI fluency into hiring criteria and role competencies, signaling that this isn’t optional—companies expect new hires to have or rapidly develop AI fluency.

The measurement shift is telling: 50% of leaders now measure AI fluency through business outcomes—ROI, efficiency gains, productivity improvements—rather than self-reported usage and confidence. Three AI fluency models have emerged:

Impact-driven organizations measure fluency by efficiency gains, output quality, and financial impact.

Competence-driven organizations measure it through manager evaluations and verified proficiency.

Engagement-driven organizations measure it through confidence levels and participation rates.

What matters isn’t what employees know—it’s what they build. Track workflows built per employee instead of courses completed. Measure hours saved through AI and automation instead of license utilization. Tie bonuses to AI-driven outcomes rather than AI tool access. Our research shows that 46% of leaders say pay and promotions will depend on AI fluency in 2026.
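To make the shift from input metrics to outcome metrics concrete, here is a minimal Python sketch. The `WorkflowRecord` schema, its field names, and the idea of logging an estimated hours-saved figure per workflow are hypothetical assumptions for illustration, not a Zapier data model:

```python
from dataclasses import dataclass


@dataclass
class WorkflowRecord:
    """One AI workflow an employee built (hypothetical schema)."""
    employee: str
    workflow: str
    hours_saved_per_month: float  # estimated or measured savings


def outcome_metrics(records: list[WorkflowRecord], headcount: int) -> dict:
    """Summarize fluency by what people build, not by courses completed."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    builders = {r.employee for r in records}
    return {
        # Average number of workflows built per employee on the team.
        "workflows_per_employee": len(records) / headcount,
        # Total hours saved per month across all recorded workflows.
        "hours_saved_per_month": sum(r.hours_saved_per_month for r in records),
        # Distinct employees who have built at least one workflow.
        "employees_who_built": len(builders),
    }


records = [
    WorkflowRecord("ana", "ticket triage", 6.0),
    WorkflowRecord("ana", "weekly report", 2.0),
    WorkflowRecord("ben", "lead enrichment", 4.0),
]
print(outcome_metrics(records, headcount=4))
```

For a four-person team, this reports 0.75 workflows per employee and 12 hours saved per month, figures a team could track over time in place of license utilization or course completions.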

Across enterprises, three measurement models have emerged

Impact-driven AI fluency

where fluency is assessed by measurable outcomes. Fluency equals performance: demonstrated gains in efficiency, output quality, or financial impact tied directly to AI use.

Competence-driven AI fluency

where fluency is measured by observable behaviors and verified proficiency, typically through manager evaluations and formal certifications, or assessments that confirm consistent technical application.

Engagement-driven AI fluency

where fluency reflects confidence and participation that's tracked through employee self-reporting to understand comfort levels, adoption trends, and cultural traction.

We just piloted an AI enablement workshop in NYC, and engineers returned transformed in how they operate. One senior engineer on my team, upon his return from the workshop, is now trialing an entire milestone without writing code, purely through prompting. That’s the shift from AI-assisted to AI-first. The real value lies in getting two weeks away from work where you have the time and space to go deep and explore.

Amplify: Select internal AI experts, then redesign roles and teams

As transformation matures, organizations designate AI architects or builders—internal experts who help teams implement AI solutions. These aren’t external consultants parachuting in for three months. They’re employees who understand both the technology and the business context, and they're hired or promoted into formal roles with clear mandates.

Roles and teams get redesigned around new ways of working. Marketing teams include automation specialists. Finance teams have AI-powered analytics roles. Operations teams staff AI orchestration leads. Organizations dedicate 10% of sprint capacity to AI experiments, institute “no-meeting Fridays” for exploration, and enforce a three-sprint rule: if you do something manually three times, you automate it by sprint four.
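One way to operationalize the three-sprint rule is sketched below in Python. It assumes a hypothetical log with one `(sprint, task)` entry each time a task is done by hand, and reads the rule as: once a task has been done manually three times, it must be automated by the following sprint. Other readings, such as counting only distinct sprints, are equally reasonable.

```python
from collections import defaultdict


def automation_deadlines(manual_log, threshold=3):
    """Apply a 'three-sprint rule' to a log of manual work.

    manual_log: iterable of (sprint_number, task_name) pairs, one entry per
    time the task was done by hand (hypothetical log format).
    Returns {task_name: sprint by which the task should be automated} for
    every task done manually at least `threshold` times.
    """
    sprints_by_task = defaultdict(list)
    # Sort so each task's occurrences are in chronological (sprint) order.
    for sprint, task in sorted(manual_log):
        sprints_by_task[task].append(sprint)

    deadlines = {}
    for task, sprints in sprints_by_task.items():
        if len(sprints) >= threshold:
            # Deadline is the sprint after the threshold-th manual run.
            deadlines[task] = sprints[threshold - 1] + 1
    return deadlines


log = [
    (1, "export CRM report"),
    (2, "export CRM report"),
    (2, "reconcile invoices"),
    (3, "export CRM report"),
    (3, "reconcile invoices"),
]
print(automation_deadlines(log))  # {'export CRM report': 4}
```

The CRM export, done by hand in sprints 1 through 3, gets a sprint-4 automation deadline; invoice reconciliation has only two manual runs so far and is not yet flagged.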

The data supports this approach. Over the next year, leaders plan to invest heavily in people and processes to improve AI execution: 45% will retrain and upskill existing staff, and 24% are building internal AI champions. Practitioners echo this shift: 48% want investment in upskilling existing teams, and 33% want stronger internal champions. People don’t want to be replaced; they want to be equipped with the tools to have a greater impact.

[Supportive AI-forward leadership] looks like protecting space for experimentation while demanding rigor on measurement. My leadership gave me room to build geo-experimentation infrastructure that ultimately exposed $35 million in wasted ad spend—a finding that required rebuilding our entire growth strategy. That’s uncomfortable. AI-forward leadership means being willing to act on what AI reveals, not just celebrate the technology. It also means investing in the boring parts: observability, governance, and training.

Sustain: Update staffing ratios, incentives, and compensation

Winning organizations update compensation to reflect AI-driven impact, not just hours logged. Staffing ratios shift to reflect AI-augmented productivity, but that doesn’t necessarily mean cutting headcount. For example, if automation lets three people do what five used to do, the organization can redeploy the other two to higher-value work. Incentives reward impact from AI-augmented work, and compensation models evolve beyond time-based metrics.

The cultural signal here is powerful. When people see their peers succeeding with AI, getting recognized, and compensated for AI-driven impact, adoption becomes organic. You stop needing to push—people start pulling.

Talent and Culture self-assessment

Do employees have dedicated, protected time for AI experimentation?

Are we measuring fluency through outcomes (what people build) vs. inputs (what they learn)?

Is AI fluency embedded in our hiring criteria and role competencies?

Have we redesigned roles and compensation to reflect AI-driven impact?

Next step: If you answered no to two or more, book some time with one of our AI transformation experts to embed hands-on learning into your culture.

Chapter 3

Tools: Equip teams with the right tech stack for full orchestration

Why this matters

Without the right tools and orchestration layer, even the most skilled teams stall at integration. They build brilliant workflows in isolation, then discover those workflows can’t talk to each other. They automate one process beautifully, then waste hours manually connecting it to the next step in the chain. Without integration, AI and automation remain trapped in isolated pockets rather than flowing across the enterprise.

The data confirms this: 46% of leaders say integration complexity and system sprawl are the most difficult barriers to overcome, nearly equal to the next three barriers combined. Practitioners, for their part, point to integration backlogs and policy delays as their biggest blockers. But here’s the revealing statistic: integration and workflow skills top leaders’ list of workforce gaps at 32%, ahead of technical AI expertise at 22%. The bottleneck isn’t building AI; it’s connecting AI to everything else.

This is why tool selection matters more than most organizations realize. The difference between tools that integrate easily and tools that require custom engineering determines whether AI scales or stalls.

46%

of leaders say integration complexity and system sprawl are the most difficult barriers to overcome

What great AI tooling looks like

Mobilize

Ease barriers to purchase AI tools, then evaluate data readiness

Activate

Monitor tools and models in use

Amplify

Establish an AI tooling scorecard

Sustain

Create shared infrastructure, then consolidate tools and connect teams

Mobilize: Ease barriers to purchase AI tools, then evaluate data readiness

In the early stages of AI maturity, the goal is to remove friction and understand your foundation. Teams experiment with different AI tools independently—Zapier, ChatGPT, Claude, Cursor, and various copilots.

Organizations streamline budgeting and procurement so experiments don’t die waiting for approval. They evaluate data readiness: where data lives, how clean it is, and what governance currently exists. Learning takes priority over standardization at this stage. There’s no centralized inventory yet—the focus is on enabling experimentation.

This matters because even the best AI tools fail if your data is messy, siloed, or inaccessible. Better to discover data problems during early experimentation than after you’ve standardized on tools that can’t access what they need.

Activate: Monitor tools and models in use

As teams start using AI, visibility becomes critical. Organizations create a central inventory of AI tools and usage patterns, monitoring what tools are being used, which models, by whom, and for what purposes. They evaluate which tools are delivering value versus sitting idle, and they identify integration bottlenecks early—before they become architectural problems.
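A central inventory can start as a simple aggregation over usage events. This Python sketch assumes a hypothetical event format (tool, model, user, purpose) and uses placeholder model names; where the usage data actually comes from is left open:

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    """One recorded use of an AI tool (hypothetical schema)."""
    tool: str
    model: str
    user: str
    purpose: str


def build_inventory(events, licensed_tools):
    """Summarize which tools and models are used, by whom, and for what.

    Returns (inventory, idle): inventory maps each used tool to its model
    counts, distinct users, and purpose counts; idle lists licensed tools
    with no recorded usage.
    """
    inventory = {}
    for e in events:
        entry = inventory.setdefault(
            e.tool, {"models": Counter(), "users": set(), "purposes": Counter()}
        )
        entry["models"][e.model] += 1
        entry["users"].add(e.user)
        entry["purposes"][e.purpose] += 1
    # Licensed but never used: candidates for the "sitting idle" list.
    idle = sorted(set(licensed_tools) - set(inventory))
    return inventory, idle


events = [
    UsageEvent("ChatGPT", "model-a", "ana", "drafting"),
    UsageEvent("ChatGPT", "model-a", "ben", "summaries"),
    UsageEvent("Cursor", "model-b", "ben", "coding"),
]
inventory, idle = build_inventory(events, ["ChatGPT", "Claude", "Cursor"])
print(sorted(inventory["ChatGPT"]["users"]))  # ['ana', 'ben']
print(idle)  # ['Claude']
```

Even this small summary answers the questions above (what tools, which models, by whom, for what) and surfaces licenses that are sitting idle.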

25% of leaders cite standardizing tooling and platforms as their most effective execution strategy. This is the stage where standardization begins, driven by data about actual usage rather than vendor promises.

Amplify: Establish an AI tooling scorecard

Organizations create formal scorecards that show which tools justify continued investment, tracking usage, reliability, and ROI for each tool. They then decide which tools to standardize as part of their tech stack and which to sunset. From there, teams consolidate overlapping tools and choose 1-2 core AI platforms rather than accumulating 10 or more. They select the orchestration layers that connect systems, deprecate tools that don’t integrate, and create a “tool governance board” to prevent sprawl.
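A scorecard can be as simple as one record per tool plus a rule that turns the numbers into a recommendation. The sketch below is a hypothetical illustration and not a standard method. The field names, the simple ROI formula (value minus cost, divided by cost), and the default thresholds (25 weekly users, a 95% success rate, ROI above zero) are all assumptions you would replace with your own.

```python
from dataclasses import dataclass


@dataclass
class ToolScore:
    """One scorecard row. Field names and units are illustrative."""
    name: str
    weekly_active_users: int   # usage
    success_rate: float        # reliability: share of runs without errors (0 to 1)
    annual_value: float        # estimated value delivered, same currency as cost
    annual_cost: float

    def __post_init__(self):
        if self.annual_cost <= 0:
            raise ValueError("annual_cost must be positive to compute ROI")

    @property
    def roi(self) -> float:
        # Simple ROI: net value divided by cost.
        return (self.annual_value - self.annual_cost) / self.annual_cost


def recommend(score: ToolScore, min_users: int = 25,
              min_success: float = 0.95, min_roi: float = 0.0) -> str:
    """Map a scorecard row to 'standardize', 'review', or 'sunset' (hypothetical thresholds)."""
    if score.roi <= min_roi:
        return "sunset"        # not paying for itself
    if score.weekly_active_users >= min_users and score.success_rate >= min_success:
        return "standardize"   # used, reliable, and positive ROI
    return "review"            # pays off, but adoption or reliability lags
```

The rule checks ROI first, so a tool that doesn't pay for itself becomes a consolidation candidate however popular it is. A tool with positive ROI but weak adoption or reliability is sent to review instead of being cut outright.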

The correlation is striking: self-described strategic AI users—those who design and optimize AI-driven workflows with measurable business impact—are 3.8X more likely to describe their organizations as consistently proactive. Tool discipline enables execution discipline.

Sustain: Create shared infrastructure, then consolidate tools and connect teams

The sustain phase is about moving from fragmentation to orchestration. Organizations can consolidate into 1-2 core platforms, then embed AI into existing workflows rather than creating separate portals—think AI triggers inside Slack, automation embedded in CRM, and AI summaries delivered via email. They choose a composable architecture over monolithic platforms, building with modular tools rather than all-in-one suites, and favoring APIs and integrations over vendor lock-in.

Orchestration platforms like Zapier are key here because they connect thousands of apps without custom code; automate complex workflows with no-code, low-code, or full-code approaches; deploy intelligent systems across every function; and scale integration without scaling engineering headcount. When integration becomes infrastructure rather than project work, full orchestration across your organization becomes achievable.

We standardize at the 'interface and primitives' layer rather than betting the farm on one vendor. The goal is provider flexibility, and an integration tax that is paid once via shared infrastructure, not paid repeatedly in every product. If a new tool cannot plug into our core primitives cleanly, support opt-in usage, and meet expectations around transparency and data responsibility, it is not worth introducing to millions of sites.

AI tooling self-assessment

Have we removed procurement barriers so teams can experiment?

Do we have visibility into which AI tools and models teams use?

Have we established scorecards tracking usage, reliability, and ROI?

Are we consolidating into shared infrastructure that enables connection without bottlenecks?

Next step: If you answered no to two or more, book some time with one of our AI transformation experts to audit your stack and build an orchestration strategy.

Chapter 4

Governance: Provide guardrails for safe, scalable adoption

Why this matters

63%

of practitioners admit to using AI tools without formal approval

58%

say governance slows execution more than it enables it

85%

of leaders aren’t fully confident they can monitor AI usage in real time

Without governance built into workflows, AI adoption creates risk faster than ROI. But governance that’s too restrictive kills innovation before it starts. This tension is tangible and measurable: 58% of practitioners say governance slows execution more than it enables it, yet 73% of leaders believe governance policies that are too restrictive cause more harm than policies that are too loose. Both groups agree governance matters—they just can’t agree on what good governance looks like.

Meanwhile, 63% of practitioners admit to using AI tools without formal approval, and 85% of leaders aren’t fully confident they can monitor AI usage in real time. Shadow AI thrives precisely because official channels feel too slow, and leaders don't even know how much shadow AI exists because they lack visibility into it.

The answer isn’t less governance—it’s faster governance. It’s governance that says “yes, and here’s how to do it safely” rather than saying “maybe, let me escalate this and get back to you in three weeks.”

Shadow AI is usually a symptom of friction: people are trying to get work done, and the approved path is too slow or unclear. I lean into enablement with clear boundaries. Publish or directly give guidance that people can follow, make the safe tooling easy to access, and be blunt about what data should never go into external tools. You do not eliminate shadow behavior with scolding; you eliminate it by making the right thing the easiest thing.

What great AI governance looks like

Mobilize

Establish guidelines for AI use

Activate

Create an AI task force and formalize foundational policies

Amplify

Establish a governance center of excellence

Sustain

Integrate AI governance into exec and board-level reporting

Mobilize: Establish guidelines for AI use

The first step is clarity: what’s safe to use, what’s restricted, and what requires review? At this stage, teams are experimenting independently with various AI tools, and shadow AI is emerging—not because people are reckless, but because they’re trying to get work done. Organizations define guidelines that clarify what’s safe rather than restricting what’s possible. They define risk tiers and encourage experimentation within risk tolerance. They make InfoSec reviews easy, so teams aren’t pushed toward shadow AI.

Clear guidelines give teams confidence to move forward, not obstacles that force workarounds. When people know the boundaries, they can operate freely within them.

Activate: Create an AI task force and formalize foundational policies

As AI adoption and usage scale, governance needs a structure. Organizations can form a cross-functional governance council or governance working group that includes the AI transformation leader, legal, security, compliance, and business stakeholders. The council can fast-track low-risk tools so teams aren’t waiting weeks for approval, and then rigorously evaluate high-risk tools that touch customer data or automate crucial decisions. They scale self-serve capabilities, enabling teams to build safely within a governed framework.

Most importantly, governance groups formalize foundational policies. They define what’s acceptable, what’s restricted, and how data flows. They document data-handling expectations and create accessible policy documentation that isn't buried deep in Slack threads where no one can find it.

45%

of organizations say they've fully documented their AI policies

Only 45% of organizations say they've fully documented their AI policies; 50% describe alignment as “somewhat informal.” This informality creates confusion and slows execution. Documentation isn’t bureaucracy—it’s clarity.

Governance should enable observability, not create unnecessary bureaucracy. My approach: standardize enough to see what’s happening across teams, but don’t prescribe how people use the tools. We need governance for security training, for preventing duplicate work, for ensuring what we build can actually scale. But the goal is shared visibility, not control. When I can see what experiments are running across growth teams, we learn from each other. When everyone’s operating in silos, we’re just burning money on parallel discoveries. Light governance, strong observability.

Amplify: Establish a governance center of excellence

At this stage, governance becomes embedded infrastructure with dedicated expertise. From here, organizations establish a governance center of excellence: a dedicated team focused on AI governance best practices. They automate governance where possible: pre-approved AI models embedded directly in workflows, automated policy checks before deployment rather than manual reviews, real-time usage dashboards visible to IT and leadership, and permissions enforced at the platform level.

It's crucial to build complete visibility into every deployment, so pre-launch checklists should include observability setup. Every AI workflow has a clear owner and escalation path, and automated alerts flag policy exceptions immediately.
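An automated pre-launch check can start as a function that returns the policy exceptions for a proposed AI workflow and raises an alert for each one. This Python sketch assumes a hypothetical `WorkflowSpec` record and a hypothetical allow-list of pre-approved models. It checks the items named above: an approved model, an owner, an escalation path, and observability setup. Each exception is passed to an alert function you supply, such as one that posts to a chat channel.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical allow-list maintained by the governance team.
APPROVED_MODELS = {"model-a", "model-b"}


@dataclass
class WorkflowSpec:
    """Illustrative description of an AI workflow awaiting deployment."""
    name: str
    model: str
    owner: Optional[str]
    escalation_contact: Optional[str]
    observability_enabled: bool


def prelaunch_violations(spec: WorkflowSpec) -> List[str]:
    """Return policy exceptions; an empty list means the workflow may launch."""
    problems = []
    if spec.model not in APPROVED_MODELS:
        problems.append(f"model '{spec.model}' is not pre-approved")
    if not spec.owner:
        problems.append("no accountable owner")
    if not spec.escalation_contact:
        problems.append("no escalation path")
    if not spec.observability_enabled:
        problems.append("observability not configured")
    return problems


def gate_deployment(spec: WorkflowSpec, alert: Callable[[str], None]) -> bool:
    """Alert on every exception and allow deployment only when there are none."""
    violations = prelaunch_violations(spec)
    for violation in violations:
        alert(f"[{spec.name}] policy exception: {violation}")
    return not violations
```

Passing the alert function in as a parameter keeps the policy logic separate from the notification channel. The same checks can then feed a dashboard, a chat message, or a ticket.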

99%

of leaders learn about project failures only after the fact

The data here is sobering: 99% of leaders learn about project failures only after the fact through manager escalations or informal feedback, not through live dashboards or automated alerts. By the time leadership hears about a problem, the damage is done.

Sustain: Integrate AI governance into exec and board-level reporting

Governance becomes strategic when it’s integrated into leadership conversations. AI risk, usage patterns, and policy exceptions become standard items on the board agenda. Monthly governance reviews use actual usage data, not anecdotes, and these governance metrics are tied to leadership accountability.

Organizations create flexible frameworks with defined “safe zones” where teams can experiment without approval, tiered approval processes based on actual risk (low-risk auto-approved, medium-risk requires manager approval, high-risk goes to governance board), and clearly documented policies with no informal assumptions.
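The tiered routing described above can be expressed as a small decision function. This is a hedged sketch in which the risk signals and the `Route` names are hypothetical. The high-risk tier follows the report’s examples of touching customer data or automating crucial decisions. Using internal data as the trigger for the medium tier is an assumption. As a design choice, a safe-zone flag skips approval only for low- and medium-risk work, never for high-risk work.

```python
from enum import Enum


class Route(Enum):
    NO_APPROVAL = "no approval needed (safe zone)"
    AUTO_APPROVED = "auto-approved"
    MANAGER = "manager approval"
    GOVERNANCE_BOARD = "governance board review"


def classify_risk(touches_customer_data: bool, automates_critical_decision: bool,
                  uses_internal_data: bool) -> str:
    """Assign a risk tier from hypothetical yes/no signals."""
    if touches_customer_data or automates_critical_decision:
        return "high"
    if uses_internal_data:  # assumed medium-tier trigger
        return "medium"
    return "low"


ROUTES = {"low": Route.AUTO_APPROVED, "medium": Route.MANAGER, "high": Route.GOVERNANCE_BOARD}


def route_request(touches_customer_data: bool = False,
                  automates_critical_decision: bool = False,
                  uses_internal_data: bool = False,
                  in_safe_zone: bool = False) -> Route:
    """Return the approval path for a new AI use case."""
    tier = classify_risk(touches_customer_data, automates_critical_decision, uses_internal_data)
    if in_safe_zone and tier != "high":
        return Route.NO_APPROVAL  # sandboxed experimentation skips review
    return ROUTES[tier]
```

A request with no risk signals is auto-approved. A request that touches customer data goes to the governance board even when it starts inside a safe zone.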

When the board asks about AI governance quarterly, leadership pays attention. Governance stops being optional—it becomes strategic. It’s no longer the team that says no; it’s the team that figures out how to say "yes" safely.

Governance self-assessment

Do we have clear, documented guidelines for what’s safe vs. what requires review?

Have we created an AI governance working group or council?

Can teams build safely within governed frameworks without manual approvals for everything?

Is AI governance integrated into executive and board-level reporting?

Next step: If you answered no to two or more, book some time with an AI transformation expert to build governance that enables speed.

Chapter 5

The Outcome: Impact

When all four components align, transformation becomes measurable

Remove any of these four variables, and AI ROI becomes negligible. Get all four right and the positive outcomes multiply.

Impact looks different depending on organizational maturity. Some enterprises are still proving adoption—can we get people to use AI at all? Others are demonstrating productivity gains—is AI saving time and money? The most mature organizations are showing measurable business outcomes tied directly to AI—did we accelerate revenue, reduce churn, improve margins, or enable growth that wouldn’t have been possible otherwise?

Leadership × Talent and Culture × Tools × Governance = Impact

What impact looks like

Enterprise leaders' preferred AI success metrics

30% — Deliver measurable business outcomes (ROI, efficiency, cost savings)

27% — Automate a higher percentage of workflows with AI

19% — Expand employee AI adoption

19% — Increase pilots that reach scaled production

5% — Achieve governance milestones

When leaders describe what success looks like next, they point squarely toward measurable outcomes and scale. The top priority, cited by 30% of leaders, was delivering measurable business outcomes such as ROI, efficiency, and cost savings. These aren’t vanity metrics; they’re board-level numbers that can withstand scrutiny. Some of the ways you can measure impact include:

Revenue acceleration because sales teams close deals faster

Cost reduction because support teams resolve tickets without human intervention

Efficiency gains that translate to either headcount savings or capacity expansion

Effective impact metrics shift from inputs—adoption rates, tool access, training completion—to outputs like revenue, cost savings, hours reclaimed, and quality improvements.

As shown in the graph above, 27% of leaders prioritize automating additional workflows, indicating a shift from isolated pilots to enterprise-wide orchestration. This is the shift from “we automated this one process” to “we automated 40% of our workflows across sales, support, and operations, and they all connect to each other.” It’s the point where automation stops being exceptional and starts being normal.

Another 19% of leaders prioritize expanding employee AI adoption. While this might seem like an input metric, it's actually an outcome metric for culture. When AI fluency becomes a core competency rather than a specialist skill—for example, when marketing builds its own automated workflows, or when finance deploys its own analytics—the organization has fundamentally transformed.

Investment signals from high performers

Where winning organizations invest reveals what drives impact. 45% will retrain and upskill existing staff, recognizing that transformation requires workforce capability, not just workforce headcount. 24% are building internal AI champions, creating peer-to-peer networks that drive organic adoption. 56% invest at least a quarter of their technology budgets in AI execution—not just purchasing tools but building the systems around them.

The pattern is clear: organizations that invest in their people, not just their tools, see the highest returns.

Common factors across successful AI projects

When practitioners were asked to describe what made their AI projects succeed, four patterns emerged consistently:

Clarity. Clear objectives from day one, defined use cases rather than vague mandates to “use more AI,” and transparent success metrics that everyone understands and agrees on before starting.

Collaboration. Cross-functional teams rather than siloed efforts in which engineering builds something and operations figures out how to use it later; leadership support throughout the lifecycle rather than just at kickoff; and peer learning embedded into the process.

Process. Starting small and iterating fast; monitoring and feedback loops built in from the beginning rather than bolted on after launch; and realistic expectations set early and adjusted as learning happens.

Foundations. Clean, high-quality data; proper governance frameworks that define what’s allowed before you build; and integration planned up front, so you know how systems will connect before you build the thing that needs connecting.

These aren’t revolutionary insights—they’re the unglamorous fundamentals that high-impact organizations execute on consistently. The difference between success and failure often comes down to discipline, not brilliance.

Learn how top enterprise leaders are transforming their teams

Leadership is the first pillar in our framework for a reason: AI impact scales when dedicated internal champions set direction, create urgency, and align AI to real business goals. But your organization needs more than a mandate.

Join us on Wednesday, March 4, for our webinar, "Leading through AI: How top executives are turning AI mandates into real business transformation." Learn how enterprise leaders from DoorDash, NerdWallet, and Webflow are creating a clear vision for AI, a guiding coalition, and a culture that rewards learning over perfection.

Leading through AI: How top executives are turning AI mandates into real business transformation

Wednesday, March 4

10 AM PT

Research Reports • 2026